From Leaves to Leads: How AI Decodes Plant Functional Traits for Next-Gen Drug Discovery

Abstract

This article explores the transformative role of Artificial Intelligence (AI) in quantifying and analyzing plant functional traits—the biochemical, physiological, and structural characteristics that define ecological strategy and pharmaceutical potential. Targeting researchers, scientists, and drug development professionals, we provide a comprehensive framework spanning foundational concepts to practical applications. We examine core AI methodologies like computer vision and deep learning for trait extraction, address challenges in data standardization and model interpretability, and critically evaluate AI performance against traditional methods. The synthesis highlights how AI-driven plant phenomics accelerates the identification of bioactive compounds, informs sustainable sourcing, and opens new frontiers in biomimetic and phytochemical research for biomedical innovation.

What Are Plant Functional Traits? The AI-Ready Primer for Biomedical Researchers

Plant functional traits are measurable morphological, physiological, and phenological characteristics that influence a plant's fitness, performance, and ecological role. AI and machine learning models applied to these traits require standardized, high-fidelity trait data for tasks such as species classification, ecological forecasting, and the identification of novel bioactive compounds for drug development. This whitepaper provides that technical foundation, detailing the core traits, measurement protocols, and data structures needed to build robust predictive models.

Core Plant Functional Traits: Definitions and Quantitative Ranges

Table 1: Core Morpho-Physiological Traits

| Trait Category | Specific Trait | Typical Units | Ecological/Functional Significance | Representative Range (Across Species) |
|---|---|---|---|---|
| Photosynthetic | Maximum Photosynthetic Rate (A_max) | μmol CO₂ m⁻² s⁻¹ | Carbon gain, primary productivity | 5 - 30 |
| | Light Saturation Point (LSP) | μmol photons m⁻² s⁻¹ | Adaptation to light environment | 200 - 2000 |
| | Stomatal Conductance (g_s) | mol H₂O m⁻² s⁻¹ | Water use efficiency, transpiration | 0.05 - 1.0 |
| Structural/Leaf Economic | Specific Leaf Area (SLA) | m² kg⁻¹ | Growth rate, resource investment | 5 - 40 |
| | Leaf Dry Matter Content (LDMC) | mg g⁻¹ | Toughness, longevity, defense | 100 - 500 |
| | Stem Specific Density (SSD) | g cm⁻³ | Mechanical support, hydraulic safety | 0.2 - 0.8 |
| Hydraulic | Wood Vessel Diameter | μm | Water transport efficiency vs. embolism risk | 10 - 500 |
| | Huber Value (Sapwood area : Leaf area) | cm² m⁻² | Hydraulic architecture, leaf support | 0.5 - 4.0 |
| Phenological | Leaf-Out Date | Day of Year (DOY) | Growing season length, competition | Varies by biome |
| | Flowering Date | Day of Year (DOY) | Reproductive success, pollination | Varies by biome |

Table 2: Key Secondary Metabolite Classes

| Metabolite Class | Core Function | Example Compounds | Relevance to Drug Development |
|---|---|---|---|
| Terpenoids | Herbivore deterrence, signaling | Artemisinin, Taxol, Menthol | Anticancer, antimalarial, flavorants |
| Phenolics (incl. Flavonoids) | UV protection, antioxidant, defense | Quercetin, Resveratrol, Lignin | Anti-inflammatory, cardioprotective, nutraceuticals |
| Alkaloids | Toxicity/defense against herbivores | Nicotine, Caffeine, Morphine | Neuroactive agents, stimulants, analgesics |
| Glucosinolates | Defense (herbivore-activated) | Sinigrin, Glucoraphanin | Chemopreventive agents (e.g., sulforaphane) |

Detailed Experimental Protocols

Protocol for Measuring Gas Exchange (Photosynthetic Rate)

Objective: To determine light-saturated net photosynthetic rate (Amax) and stomatal conductance (gs) under controlled environmental conditions.

Materials: Portable photosynthesis system (e.g., LI-6800, LI-COR Biosciences), CO₂ cartridge, desiccant, light source (LED or halogen), temperature-controlled cuvette.

Procedure:

- Calibration: Perform a full system calibration per manufacturer instructions, including zeroing IRGAs (Infrared Gas Analyzers) and setting reference CO₂ concentration (e.g., 400 ppm).

- Leaf Acclimation: Clamp leaf chamber onto a fully expanded, sun-exposed leaf. Set chamber conditions to: PAR (Photosynthetically Active Radiation) = 1500 μmol m⁻² s⁻¹, block temperature = 25°C, flow rate = 500 μmol s⁻¹, and relative humidity ~60%.

- Equilibration: Allow leaf to acclimate to chamber conditions until CO₂ uptake and water vapor emission stabilize (typically 3-5 minutes).

- Measurement: Initiate a logging sequence to record A (net assimilation rate), g_s (stomatal conductance), Ci (intercellular CO₂ concentration), and E (transpiration rate) at 10-second intervals for 2-3 minutes.

- Replication: Repeat on at least 5 leaves per plant and 5-10 plants per species/treatment.

- Data Extraction: Calculate Amax and mean gs from the stable plateau region of the logged data.
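
The plateau step above can be automated. The sketch below is a minimal Python version, assuming the logged A (or g_s) values arrive as a plain list at the 10-second interval described in the protocol. The helper name `plateau_mean`, the 6-point (1-minute) window, and the 2% coefficient-of-variation cutoff are illustrative assumptions, not instrument or manufacturer settings.

```python
import numpy as np


def plateau_mean(values, window=6, max_cv=0.02):
    """Mean of the most stable run in a logged gas-exchange series.

    Hypothetical helper: slides a fixed-size window over the log, scores each
    window by its coefficient of variation (CV = SD / |mean|), and returns the
    mean of the lowest-CV window. Raises ValueError if no window is stable
    enough, so unsettled leaves are flagged rather than silently averaged.
    """
    values = np.asarray(values, dtype=float)
    if values.size < window:
        raise ValueError("log is shorter than the averaging window")

    best_cv, best_mean = np.inf, np.nan
    for start in range(values.size - window + 1):
        segment = values[start:start + window]
        mean = segment.mean()
        if mean == 0:
            continue  # CV is undefined for a zero mean
        cv = segment.std(ddof=1) / abs(mean)
        if cv < best_cv:
            best_cv, best_mean = cv, mean

    if best_cv > max_cv:
        raise ValueError(f"no stable plateau found (best CV = {best_cv:.3f})")
    return float(best_mean)


# Example: A (µmol CO2 m-2 s-1) logged every 10 s while the leaf settles.
a_log = [12.0, 15.5, 17.8, 18.9, 19.1, 19.0, 19.2, 19.1, 19.0, 19.1, 19.2, 19.0]
a_max = plateau_mean(a_log)
print(f"A_max ≈ {a_max:.2f}")
```

Running the same function on the g_s column gives mean stomatal conductance. Because each series is scored independently, a stricter variant might require A and g_s to be stable over the same window.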

Protocol for Metabolite Extraction and Profiling (LC-MS)

Objective: To perform untargeted metabolomic profiling of leaf secondary metabolites.

Materials: Liquid Nitrogen, lyophilizer, analytical balance, bead mill, methanol (HPLC grade), water (LC-MS grade), formic acid, centrifuge, vortex mixer, 0.22 μm PTFE filters, UHPLC system coupled to high-resolution mass spectrometer (e.g., Q-Exactive Orbitrap, Thermo Fisher).

Procedure:

- Sample Preparation: Flash-freeze leaf tissue in liquid N₂. Lyophilize for 48 hours. Homogenize dried tissue using a bead mill.

- Extraction: Weigh 50 mg of powdered tissue into a 2 mL tube. Add 1 mL of 80% methanol/water (v/v) with 0.1% formic acid. Vortex vigorously for 1 min, sonicate for 15 min at 4°C, then centrifuge at 14,000 rpm for 10 min at 4°C.

- Filtration: Filter supernatant through a 0.22 μm PTFE membrane into an LC-MS vial.

- LC-MS Analysis:

  - Chromatography: Use a C18 reversed-phase column (e.g., 2.1 x 100 mm, 1.7 μm). Mobile phase A: water + 0.1% formic acid; B: acetonitrile + 0.1% formic acid. Gradient: 5% B to 95% B over 18 min, hold 2 min.

  - Mass Spectrometry: Operate in both positive and negative electrospray ionization (ESI) modes. Full MS scan range: m/z 100-1500 at a resolution of 70,000. Data-dependent MS/MS (dd-MS²) on the top 5 ions.

- Data Processing: Use software (e.g., XCMS, MS-DIAL) for peak picking, alignment, and annotation against public databases (GNPS, METLIN).
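
Database annotation usually begins by matching each feature's precursor m/z to reference values within a mass tolerance. The sketch below shows only that MS1 step, assuming a 5 ppm tolerance and a tiny hand-made library of [M+H]⁺ values. Tools such as MS-DIAL and GNPS additionally score MS/MS spectra, retention time, and adducts, which this sketch omits. The function names are hypothetical.

```python
def ppm_error(observed_mz, reference_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - reference_mz) / reference_mz * 1e6


def annotate_by_mass(features, library, tol_ppm=5.0):
    """Return candidate library matches (name, ppm error) for each feature.

    features: {feature_id: observed m/z}
    library:  {compound label: reference m/z}
    Candidates are sorted by absolute ppm error; an empty list means no match.
    """
    annotations = {}
    for feature_id, mz in features.items():
        hits = [
            (name, round(ppm_error(mz, ref_mz), 2))
            for name, ref_mz in library.items()
            if abs(ppm_error(mz, ref_mz)) <= tol_ppm
        ]
        annotations[feature_id] = sorted(hits, key=lambda hit: abs(hit[1]))
    return annotations


# Illustrative monoisotopic [M+H]+ values for three example compounds.
library = {
    "quercetin [M+H]+": 303.0499,
    "caffeine [M+H]+": 195.0877,
    "resveratrol [M+H]+": 229.0859,
}
features = {"F1": 303.0502, "F2": 195.0890, "F3": 411.2000}

for fid, hits in annotate_by_mass(features, library).items():
    print(fid, hits or "no match within tolerance")
```

Here F2 sits about 6.7 ppm from caffeine and is rejected at 5 ppm, which shows how strongly the tolerance setting shapes the candidate list.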

Visualizations

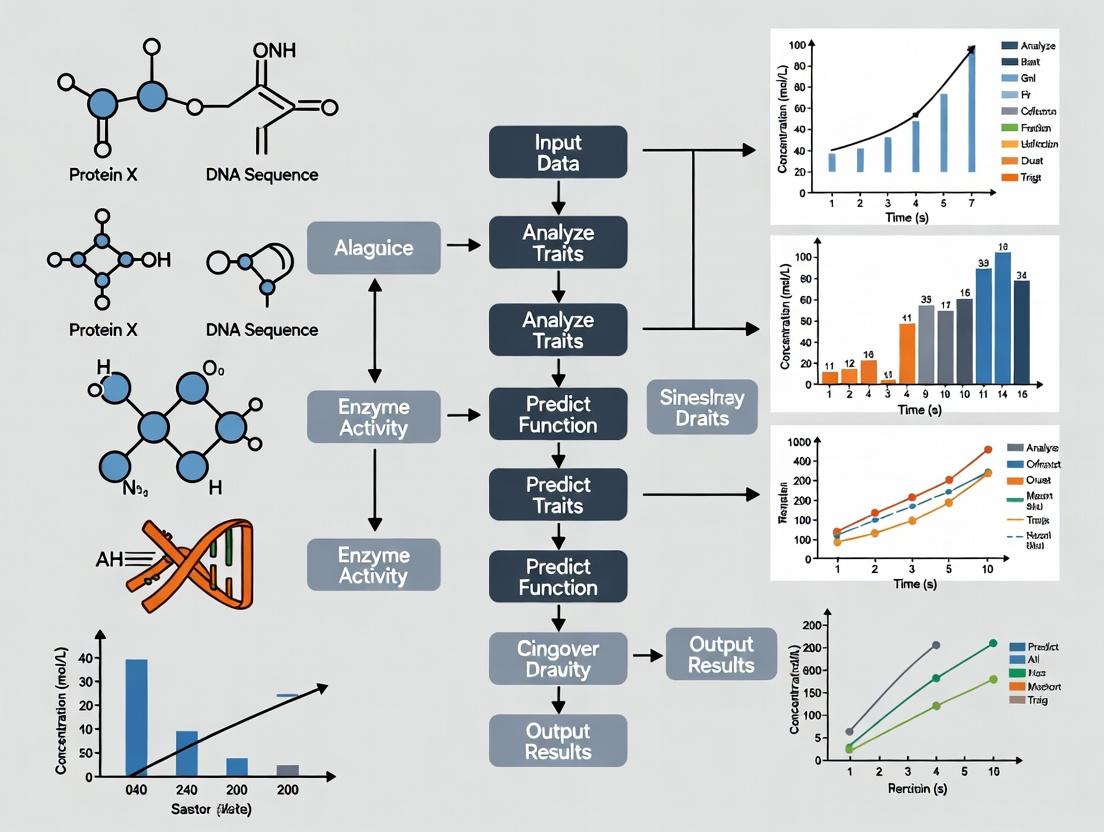

Diagram 1: Plant Trait Data Pipeline for AI Modeling

Diagram 2: Key Signaling Pathways Influencing Trait Expression

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Trait Research

| Item Name (Example) | Category | Function in Research | Key Consideration for AI/Data Quality |
|---|---|---|---|
| LI-6800 Portable Photosynthesis System | Physiological Instrument | Precisely measures gas exchange parameters (A, g_s, Ci). | Ensures standardized, high-frequency, automated data capture crucial for training ML models. |
| HPLC-MS Grade Solvents (Methanol, Acetonitrile) | Chemical Reagent | Used for high-sensitivity metabolite extraction and chromatography. | Batch-to-batch consistency minimizes technical noise in metabolomic datasets. |
| C18 Reversed-Phase UHPLC Columns (e.g., Waters ACQUITY) | Chromatography | Separates complex plant metabolite mixtures prior to MS detection. | Column reproducibility is critical for aligning peaks across hundreds of samples in large studies. |
| Internal Standard Mix (e.g., deuterated flavonoids, ¹³C-labeled amino acids) | Chemical Standard | Normalizes sample-to-sample variation during extraction and MS analysis. | Essential for quantitative accuracy, enabling reliable comparative analyses for AI. |
| RNA Isolation Kit (e.g., Qiagen RNeasy Plant) | Molecular Biology | Extracts high-quality RNA for transcriptomic analysis of trait regulation. | Integrates gene expression data with phenotypic traits for multi-omics AI models. |
| Plant Preservative Mixture (PPM) | Biocontaminant Control | Suppresses microbial growth in tissue cultures for consistent bioassays. | Reduces confounding biological variability in high-throughput screening data. |

The systematic discovery of novel plant-derived bioactive compounds is undergoing a paradigm shift, moving from random screening to a predictive science. This transition is central to a broader thesis: Artificial Intelligence (AI) and machine learning (ML) are revolutionizing plant functional traits research by uncovering non-intuitive, multi-dimensional relationships between ecological strategies and phytochemical profiles. By treating plants as integrated systems where morphology, physiology, and chemistry are expressions of evolutionary adaptation, researchers can now target species with a high probability of yielding novel therapeutics. This whitepaper details the technical framework for linking measurable plant traits to compound discovery, providing the empirical and computational protocols necessary for implementation.

The Functional Trait-Chemistry Nexus: Core Principles

Plant functional traits are measurable morphological, physiological, and phenological features that influence fitness via their effects on growth, reproduction, and survival. These traits are shaped by environmental filters and biotic interactions. Emerging research, including AI-assisted meta-analyses, suggests that suites of traits (e.g., leaf mass per area, wood density, seed size) correlate with investment in specific biosynthetic pathways. For instance, species adapted to high-stress, resource-poor environments often invest heavily in complex secondary metabolites for defense, making them prime candidates for drug discovery.

Key Quantitative Relationships (Summarized from Current Literature):

Table 1: Correlations between Plant Functional Traits and Chemical Investment

| Functional Trait | Typical Range | Associated Chemical Class | Putative Ecological Role | Correlation Strength (r) |
|---|---|---|---|---|
| Leaf Mass per Area (LMA) | 20 - 300 g/m² | Condensed tannins, lignins | Physical & chemical defense, leaf longevity | 0.65 - 0.78 |
| Leaf Dry Matter Content (LDMC) | 100 - 500 mg/g | Phenolic glycosides, alkaloids | Drought tolerance, herbivory defense | 0.58 - 0.72 |
| Specific Root Length (SRL) | 5 - 120 m/g | Benzoxazinoids, flavones | Soil biotic interaction, competition | -0.45 to -0.60 |
| Seed Mass | 0.01 - 1000 mg | Non-protein amino acids, cyanogenic glycosides | Predator defense, resource allocation | 0.40 - 0.55 |
| Stem Specific Density (SSD) | 0.2 - 1.2 g/cm³ | Terpenoids, resins | Durability, pathogen resistance | 0.70 - 0.82 |
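
The table does not state how its correlation strengths were computed. Assuming they are Pearson coefficients, the sketch below shows how such an r value, and a 95% confidence interval via the Fisher z-transform, could be obtained for one trait-chemistry pair. The data are simulated rather than drawn from any study, and the 0.02 slope linking LMA to tannin content is an arbitrary assumption.

```python
import numpy as np
from scipy.stats import pearsonr


def fisher_ci(r, n, z_crit=1.959964):
    """Approximate 95% confidence interval for a Pearson r via Fisher's z."""
    z = np.arctanh(r)
    se = 1.0 / np.sqrt(n - 3)
    return float(np.tanh(z - z_crit * se)), float(np.tanh(z + z_crit * se))


rng = np.random.default_rng(0)
n = 40
lma = rng.uniform(20, 300, size=n)                # g/m², spans the range in the table
tannin = 0.02 * lma + rng.normal(0, 1.5, size=n)  # simulated tannins, % dry weight (assumed)

r, p_value = pearsonr(lma, tannin)
low, high = fisher_ci(r, n)
print(f"r = {r:.2f} (95% CI {low:.2f} to {high:.2f}), p = {p_value:.1e}")
```

At n = 40 the interval is wide, a reminder that a reported r range says little without the underlying sample size.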

Table 2: AI-Model Predictive Performance for Bioactive Compound Discovery

| AI/ML Model Type | Input Features (Traits) | Prediction Target | Reported Performance | Key Reference (Year) |
|---|---|---|---|---|
| Random Forest | LMA, LDMC, N, P, climate data | Anti-cancer activity | 0.89 AUC | Singh et al. (2023) |
| Graph Neural Network | Phylogenetic distance, trait similarity | Novel antimicrobial structure | 0.78 Precision | Wainwright et al. (2024) |
| Convolutional Neural Net | Leaf spectroscopy + trait data | Alkaloid presence/absence | 94% Accuracy | Chen & Zhou (2024) |
| Transformer-based Model | Ethnobotanical text, trait databases | Anti-inflammatory potential | 0.82 F1-Score | Global Bioactive Portal (2024) |
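
To make the AUC figure concrete, the sketch below trains a random forest on simulated trait and climate features and scores it by ROC AUC on a held-out split. Everything here is an assumption for illustration: the feature ranges, the rule that generates the "active" label, and the hyperparameters. It does not reproduce any of the cited studies.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(42)
n = 300
X = np.column_stack([
    rng.uniform(20, 300, n),    # LMA, g/m²
    rng.uniform(100, 500, n),   # LDMC, mg/g
    rng.uniform(0.5, 4.0, n),   # leaf N, % dry mass
    rng.uniform(0.05, 0.4, n),  # leaf P, % dry mass
    rng.uniform(0.0, 28.0, n),  # mean annual temperature, °C (stand-in for climate data)
])
# Assumed labelling rule: "active" extracts are more likely at high LMA and LDMC.
score = 0.02 * (X[:, 0] - 160) + 0.01 * (X[:, 1] - 300) + rng.normal(0, 1, n)
y = (score > 0).astype(int)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)
model = RandomForestClassifier(n_estimators=300, random_state=0)
model.fit(X_train, y_train)
auc = roc_auc_score(y_test, model.predict_proba(X_test)[:, 1])
print(f"held-out ROC AUC = {auc:.2f}")
```

A random split like this can overstate performance when closely related species land on both sides. Grouping the split by genus or family (for example with scikit-learn's `GroupKFold`) gives a more conservative estimate for discovery tasks.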

Experimental Protocols: From Trait Measurement to Compound Validation

Protocol 3.1: Standardized Field Trait Measurement for Chemo-Ecological Studies

Objective: To quantitatively measure key functional traits from plant individuals/species targeted for bioactive compound discovery.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Site & Subject Selection: Select healthy, mature individuals per species (minimum n=5). Geotag and record microhabitat data (soil type, light availability).

- Leaf Traits:

  - LMA: Punch a known area (e.g., 1 cm²) from a fresh leaf. Record fresh mass. Dry at 70°C for 48 hrs, then record dry mass. LMA = Dry Mass / Area (convert cm² to m² when reporting g/m²).

  - LDMC: Collect leaves and hydrate them to full turgor. Record saturated fresh mass. Dry as above. LDMC = Dry Mass / Saturated Fresh Mass.

  - Leaf Chemistry (Non-destructive): Use a field spectrometer (350-2500 nm) on the same leaves. Calibrate spectra with subsequent lab analysis.

- Stem Traits: SSD: Extract a core or stem segment and measure its fresh volume by water displacement. Dry at 105°C to constant mass. SSD = Dry Mass / Fresh Volume.

- Sample Preservation for Metabolomics: Flash-freeze a separate set of leaves/tissues in liquid N₂. Store at -80°C for LC-MS/MS analysis.

- Data Curation: Compile all trait measurements, spectral data, and images into a structured database (e.g., CSV, SQL). Annotate with full metadata.
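
The trait formulas above, and the unit conversions they imply, can be captured in a few functions feeding a structured record. This is a minimal sketch: the function names, the record fields, and all numeric inputs are hypothetical, and the CSV is written to an in-memory buffer rather than a real database.

```python
import csv
import io


def lma_g_per_m2(dry_mass_g, area_cm2):
    """Leaf mass per area in g/m² (1 m² = 10,000 cm²)."""
    return dry_mass_g / (area_cm2 / 10_000)


def ldmc_mg_per_g(dry_mass_g, saturated_fresh_mass_g):
    """Leaf dry matter content in mg dry mass per g water-saturated fresh mass."""
    return 1000 * dry_mass_g / saturated_fresh_mass_g


def ssd_g_per_cm3(dry_mass_g, fresh_volume_cm3):
    """Stem specific density from oven-dry mass and fresh (displacement) volume."""
    return dry_mass_g / fresh_volume_cm3


# One hypothetical individual; IDs, metadata, and masses are made up.
record = {
    "individual_id": "SP01-IND03",
    "soil_type": "sandy loam",
    "light": "understory",
    "lma_g_m2": round(lma_g_per_m2(dry_mass_g=0.0085, area_cm2=1.0), 1),
    "ldmc_mg_g": round(ldmc_mg_per_g(dry_mass_g=0.12, saturated_fresh_mass_g=0.48), 1),
    "ssd_g_cm3": round(ssd_g_per_cm3(dry_mass_g=3.1, fresh_volume_cm3=5.0), 3),
}

# Write a header and one CSV row; a real study would append to a shared file or table.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=list(record))
writer.writeheader()
writer.writerow(record)
print(buffer.getvalue())
```

Keeping the conversion inside the function (cm² to m², g to mg) avoids the common error of reporting a 1 cm² punch mass directly as LMA.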

Protocol 3.2: Integrated Metabolomics and Bioactivity Screening Workflow

Objective: To link trait-measured plant samples to specific bioactive compounds through untargeted metabolomics and bioassay-guided fractionation.

Materials: LC-HRMS system, HPLC-MS preparative system, 96-well bioassay plates (e.g., cytotoxicity, antimicrobial), automated fraction collector, cell cultures/reagents.

Procedure:

- Metabolite Extraction: Grind frozen tissue under liquid N₂. Extract metabolites using 80% methanol/H₂O with sonication. Centrifuge, filter (0.22 µm), and dry under N₂ gas. Reconstitute in LC-MS grade solvent.

- Untargeted LC-HRMS: Run samples on a reverse-phase C18 column with a 5-100% acetonitrile gradient. Use high-resolution mass spectrometer (QE-Orbitrap class) in both positive and negative ionization modes.

- Data Pre-processing: Use software (e.g., MS-DIAL, XCMS) for peak picking, alignment, and annotation against public spectral libraries (GNPS, MassBank). Output a feature intensity table (m/z, RT, abundance).

- Bioassay-Guided Fractionation: Inject a larger amount of extract onto the preparative HPLC. Collect fractions (e.g., at 30-second intervals). Dry fractions in 96-well plates.

- High-Throughput Bioactivity Screening: Re-dissolve fractions in assay buffer. Test against target panels (e.g., cancer cell lines, pathogenic bacteria). Quantify activity (IC50, % inhibition).

- Integration & AI Modeling: Merge trait data, metabolite feature table, and bioactivity results. Train ML models (see Table 2) to identify trait-metabolite-activity linkages.
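
IC50 values from the screening step are commonly obtained by fitting a four-parameter logistic (4PL) curve to dose-response data. The sketch below uses SciPy's `curve_fit` on simulated viability readings. The concentration series, the true IC50 of 4 µg/mL used to simulate the data, the noise level, and the parameter bounds are all assumptions for illustration.

```python
import numpy as np
from scipy.optimize import curve_fit


def four_pl(conc, bottom, top, ic50, hill):
    """Four-parameter logistic: response falls from `top` to `bottom` as conc rises."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


conc = np.array([0.1, 0.3, 1.0, 3.0, 10.0, 30.0, 100.0])  # µg/mL, half-log series
rng = np.random.default_rng(7)
# Simulated % viability relative to vehicle control (assumed true IC50 = 4 µg/mL).
viability = four_pl(conc, 5.0, 100.0, 4.0, 1.2) + rng.normal(0, 2.0, conc.size)

p0 = [viability.min(), viability.max(), np.median(conc), 1.0]
bounds = ([-20, 50, 1e-3, 0.1], [50, 150, 1e3, 5.0])  # keep IC50 and Hill slope positive
params, cov = curve_fit(four_pl, conc, viability, p0=p0, bounds=bounds)
bottom, top, ic50, hill = params
ic50_se = np.sqrt(np.diag(cov))[2]
print(f"IC50 ≈ {ic50:.2f} µg/mL (SE {ic50_se:.2f}), Hill slope {hill:.2f}")
```

Percent inhibition is then 100 minus viability; bounding IC50 above zero keeps `(conc / ic50) ** hill` well defined during the fit.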

Visualizing the Workflow and Pathways

Diagram 1: AI-Driven Trait to Discovery Workflow

Diagram 2: Stress to Compound Biosynthesis Pathway

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Trait-Led Discovery Research

| Item Name / Category | Specific Example / Specification | Primary Function in Workflow |
|---|---|---|
| Portable Leaf Spectrometer | ASD FieldSpec 4, CI-710s | Non-destructive field measurement of leaf chemical properties (chlorophyll, phenolics, water content) linked to traits. |
| Leaf Area Meter & Precision Balance | LI-3100C Area Meter, Mettler Toledo MX5 microbalance (1 µg readability) | Accurate measurement of leaf area and mass for calculating LMA, LDMC. |
| Portable Stem Density Kit | Increment borer, digital calipers, water displacement apparatus | Field measurement of stem specific density (SSD) as a key wood trait. |
| Cryogenic Storage & Transport | Liquid N₂ Dewar (e.g., Taylor-Wharton), dry shippers | Preservation of tissue samples for intact metabolomics and RNA/DNA analysis. |
| LC-HRMS System | Thermo Q-Exactive Orbitrap, Agilent 6546 Q-TOF | High-resolution, untargeted profiling of plant metabolite extracts. |
| Chromatography Columns | Waters ACQUITY UPLC HSS T3 (analytical), Phenomenex Luna Prep C18 (preparative) | Separation of complex plant extracts for metabolomics and fraction collection. |
| Metabolomics Software Suite | MS-DIAL, Compound Discoverer, XCMS Online | Processing raw LC-MS data for peak alignment, annotation, and statistical analysis. |
| Bioassay Reagent Kits | Promega CellTiter-Glo (cytotoxicity), Invitrogen Live/Dead BacLight (antimicrobial) | Quantifying biological activity of fractions/extracts in high-throughput format. |
| AI/ML Development Platform | Python with Scikit-learn, PyTorch, RDKit, TensorFlow | Building predictive models integrating trait, metabolomic, and bioactivity data. |
| Trait & Metabolite Database Access | TRY Plant Trait Database, GNPS, LOTUS Initiative, PubChem | Reference data for trait distributions and metabolite annotations. |

The study of plant functional traits (morphological, physiological, and phenological characteristics that influence fitness and ecosystem function) has reached a critical juncture. The core thesis of this paper is that scalable, high-dimensional phenotypic data, processed through AI, are necessary to build predictive models of plant function, growth, and metabolomic potential, with significant implications for agriculture, ecology, and drug discovery from plant sources. This paper examines the data bottleneck created by traditional methodologies and outlines a framework for AI-scale analysis.

The Traditional Trait Measurement Paradigm: Inherent Limitations

Traditional methods are manual, low-throughput, and often destructive, creating a severe data bottleneck that limits the scale and scope of research.

Key Methodologies and Their Constraints

- Gas Exchange Systems: Used for photosynthesis (A) and stomatal conductance (gs) measurements. Single-leaf, point-in-time readings.

- Spectrophotometry & HPLC: For pigment (chlorophyll, carotenoids) and metabolite quantification. Requires tissue homogenization.

- Manual Morphometry: Caliper-based stem diameter, leaf area via grid counting or scanning with basic software.

- Root Washing & Scanning: Destructive harvest, careful washing, and 2D imaging for architecture.

Quantitative Comparison of Measurement Throughput

The following table summarizes the inherent data limitations of the traditional paradigm.

Table 1: Throughput Constraints of Traditional Trait Measurement Methods

| Trait Category | Specific Measurement | Typical Method | Approx. Time per Sample | Key Limiting Factors |
|---|---|---|---|---|
| Physiological | Net Photosynthesis (A) | Portable Gas Exchange Chamber | 5-15 minutes | Leaf acclimation, environmental steadiness, manual operation. |
| Physiological | Stomatal Conductance (gs) | Porometry / Gas Exchange | 2-5 minutes | Sensor placement, environmental stability. |
| Biochemical | Chlorophyll Content | Solvent Extraction + Spectrophotometry | 30-60 minutes | Tissue destruction, solvent handling, calibration curves. |
| Morphological | Specific Leaf Area (SLA) | Destructive Harvest + Drying + Weighing | 24-48 hours (plus drying) | Destructive, batch processing delay, manual weighing. |
| Architectural | Root Length & Diameter | Destructive Wash + Flatbed Scanning + Analysis | 45-90 minutes | Destructive, washing artifacts, 2D projection loss. |

Experimental Protocol: Classic Gas Exchange Measurement

A standard protocol for measuring light-response curves highlights the bottleneck.

Protocol Title: Determination of Photosynthetic Light-Response Curve Using an Infrared Gas Analyzer (IRGA) System.

- Plant Acclimation: Subject potted plant to stable light conditions (≥30 min) prior to measurement.

- Chamber Calibration: Zero the IRGA's CO₂ and H₂O sensors using calibration gas and desiccant.

- Leaf Enclosure: Select a recently matured, sun-exposed leaf. Clamp leaf into the temperature-controlled cuvette, ensuring a tight seal.

- Environmental Control: Set cuvette block temperature (e.g., 25°C), CO₂ concentration (e.g., 400 ppm), and flow rate.

- Sequential Irradiance Steps: Begin with a saturating light intensity (e.g., 1500 µmol m⁻² s⁻¹). Record A and gs after values stabilize (~2-3 min). Step down to the next lower light level (e.g., 1000, 500, 200, 100, 50, 0 µmol m⁻² s⁻¹), repeating the stabilization and recording.

- Data Extraction: Fit the A vs. Irradiance data to a non-rectangular hyperbola model to derive key parameters: maximum photosynthetic rate (Amax), quantum yield (Φ), and dark respiration (Rd).

Limitation: A single light-response curve for one leaf can take 30-45 minutes, constraining population-level studies.
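
The curve fit in the data extraction step can be scripted. One common parameterisation of the non-rectangular hyperbola gives gross assimilation as the smaller root of θA² − (ΦI + Amax)A + ΦI·Amax = 0, with net assimilation equal to that root minus Rd. The sketch below fits this form with SciPy to simulated readings at the irradiance steps listed in the protocol; the "true" parameters, noise level, and bounds are assumptions.

```python
import numpy as np
from scipy.optimize import curve_fit


def nrh(par, a_max, phi, theta, rd):
    """Net assimilation from a non-rectangular hyperbola.

    Gross rate is the smaller root of theta*A^2 - (phi*I + a_max)*A + phi*I*a_max = 0;
    dark respiration rd is subtracted to give net assimilation.
    """
    b = phi * par + a_max
    gross = (b - np.sqrt(b ** 2 - 4.0 * theta * phi * par * a_max)) / (2.0 * theta)
    return gross - rd


par = np.array([1500, 1000, 500, 200, 100, 50, 0], dtype=float)  # µmol m-2 s-1, protocol steps
rng = np.random.default_rng(3)
# Simulated readings from assumed parameters A_max=25, phi=0.05, theta=0.7, Rd=1.5.
a_net = nrh(par, 25.0, 0.05, 0.7, 1.5) + rng.normal(0, 0.2, par.size)

p0 = [a_net.max() + 2.0, 0.05, 0.7, max(-a_net.min(), 0.1)]
bounds = ([1.0, 0.005, 0.01, 0.0], [60.0, 0.15, 0.999, 10.0])  # theta < 1 keeps sqrt real
params, _ = curve_fit(nrh, par, a_net, p0=p0, bounds=bounds)
a_max, phi, theta, rd = params
rmse = np.sqrt(np.mean((nrh(par, *params) - a_net) ** 2))
print(f"A_max={a_max:.1f}, phi={phi:.3f}, theta={theta:.2f}, Rd={rd:.2f}, RMSE={rmse:.2f}")
```

In this form Amax is the asymptote of gross assimilation, so it sits above the net rate recorded at 1500 µmol m⁻² s⁻¹; this matters when comparing values across studies that use different model forms.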

The AI-Scale Analysis Framework: Breaking the Bottleneck

AI-scale analysis leverages high-throughput phenotyping (HTP) platforms and computer vision to generate massive, multi-dimensional datasets, which are then processed by machine learning (ML) models.

Core Components of the AI-Scale Pipeline

- Automated Phenotyping Platforms: Robotic gantries, conveyor systems, or drone/UAV fleets equipped with multi-sensor arrays.

- Multi-Spectral Data Acquisition: Sensors capturing data beyond human vision: hyperspectral (300-1000+ nm), thermal, LiDAR, and fluorescence imaging.

- Computer Vision & Feature Extraction: Automated segmentation of plant organs and extraction of thousands of features (texture, shape, indices).

- Machine Learning Integration: ML models (e.g., CNNs, Random Forests) trained to predict complex traits from sensor data.

Quantitative Comparison of AI-Scale Throughput

Table 2: Throughput Capabilities of AI-Scale Phenotyping Platforms

| Platform Scale | Sensor Suite | Traits Measured per Pass | Approx. Time for 100 Plants | Data Volume per 100 Plants |
|---|---|---|---|---|
| Conveyor-Based | RGB, NIR, Fluorescence | Projected Leaf Area, Color Indices, Compactness | 10-20 minutes | 2-5 GB |
| Robotic Gantry | Hyperspectral, Thermal, 3D LiDAR | Canopy Water Content, Canopy Temp., 3D Biomass, Spectral Profiles | 30-60 minutes | 50-200 GB |
| Field UAV/Drone | Multispectral, RGB, Thermal | Canopy Height, NDVI, GNDVI, Canopy Cover | 5-15 minutes | 10-50 GB |
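
The per-sample times in Table 1 and the per-100-plant times in Table 2 can be put on a common footing with simple arithmetic. The sketch below does this for a 100-plant experiment using only the ranges quoted in the two tables. It compares time budgets, not equivalent traits: the platforms measure different variables than a gas exchange chamber does. The helper name and the serial, setup-free timing are simplifying assumptions.

```python
# Minutes per plant (Table 1) and minutes per 100 plants (Table 2), as quoted ranges.
TRADITIONAL_MIN_PER_PLANT = {
    "net photosynthesis (gas exchange)": (5, 15),
    "stomatal conductance (porometry)": (2, 5),
    "chlorophyll (extraction + spectrophotometry)": (30, 60),
    "root length & diameter (wash + scan)": (45, 90),
}
PLATFORM_MIN_PER_100 = {
    "conveyor-based": (10, 20),
    "robotic gantry": (30, 60),
    "field UAV": (5, 15),
}


def hours_for(n_plants, minutes_per_plant):
    """Serial operator time in hours, ignoring setup, calibration, and breaks."""
    return n_plants * minutes_per_plant / 60


n = 100
for method, (lo, hi) in TRADITIONAL_MIN_PER_PLANT.items():
    print(f"{method:45s} {hours_for(n, lo):6.1f}-{hours_for(n, hi):6.1f} h")
for platform, (lo, hi) in PLATFORM_MIN_PER_100.items():
    print(f"{platform:45s} {lo / 60:6.2f}-{hi / 60:6.2f} h")
```

At 5-15 minutes per leaf, one gas exchange reading on each of 100 plants takes roughly 8-25 hours of instrument time, and the 30-45 minute light-response curves described earlier would take 50-75 hours, against 10-20 minutes for a conveyor pass. SLA is left out because its quoted time is dominated by batch drying rather than operator time.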

Experimental Protocol: High-Throughput Canopy Phenotyping via UAV

Protocol Title: Field-Based Canopy-Level Trait Extraction Using Multispectral UAV Imagery.

- Mission Planning: Use flight planning software to define the geofenced plot area, set flight altitude (e.g., 30m), front/side overlap (80%), and waypoints.

- Radiometric Calibration: Capture images of a calibrated reflectance panel on the ground before and after the flight.

- Automated Data Acquisition: Execute autonomous UAV flight equipped with a synchronized RGB and multispectral (e.g., Green, Red, Red-Edge, NIR) camera system.

- Data Processing Pipeline:

  a. Orthomosaic Generation: Use photogrammetry software (e.g., Agisoft Metashape, Pix4D) to create georeferenced orthomosaics for each spectral band.

  b. Reflectance Calibration: Convert digital numbers to surface reflectance using panel data.

  c. Canopy Zone Segmentation: Apply a vegetation index (e.g., ExG, Excess Green) to the RGB orthomosaic to create a binary mask separating canopy from soil.

  d. Trait Calculation: Apply the canopy mask to each reflectance band orthomosaic. Calculate vegetation indices (e.g., NDVI, NDRE) for every pixel within the canopy, then average per plot.

- Model Training: Use plot-level averaged spectral indices as features to train a regression model (e.g., Gradient Boosting) against destructively measured ground-truth traits (e.g., biomass, nitrogen content).
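
Steps b-d of the processing pipeline reduce to a few array operations once the orthomosaics are co-registered. The NumPy sketch below assumes each band is already a 2-D array on the same grid. It uses a single-panel scaling as a simplified stand-in for full reflectance calibration (photogrammetry packages may handle this internally) and an ExG threshold of 0.1 as a placeholder; Otsu's method is a common alternative. All function names are hypothetical.

```python
import numpy as np


def to_reflectance(dn, panel_dn_mean, panel_reflectance):
    """Scale digital numbers so the calibration panel matches its known reflectance."""
    return np.asarray(dn, dtype=float) * (panel_reflectance / panel_dn_mean)


def excess_green(r, g, b):
    """ExG = 2g - r - b computed on chromatic coordinates (each band / band sum)."""
    r, g, b = (np.asarray(x, dtype=float) for x in (r, g, b))
    total = r + g + b
    rn, gn, bn = (np.divide(x, total, out=np.zeros_like(total), where=total != 0)
                  for x in (r, g, b))
    return 2 * gn - rn - bn


def normalized_difference(a, b):
    """(a - b) / (a + b); NDVI uses (NIR, Red), NDRE uses (NIR, Red-Edge)."""
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    denom = a + b
    return np.divide(a - b, denom, out=np.full_like(denom, np.nan), where=denom != 0)


def plot_means(index, canopy_mask, plot_ids):
    """Average an index over canopy pixels within each plot (plot id 0 = background)."""
    means = {}
    for pid in np.unique(plot_ids):
        if pid == 0:
            continue
        selected = (plot_ids == pid) & canopy_mask & np.isfinite(index)
        means[int(pid)] = float(index[selected].mean()) if selected.any() else float("nan")
    return means


# Toy 2x2 scene of reflectances: left column is canopy, right column is bare soil.
red = np.array([[0.05, 0.30], [0.05, 0.30]])
green = np.array([[0.15, 0.25], [0.15, 0.25]])
blue = np.array([[0.04, 0.20], [0.04, 0.20]])
nir = np.array([[0.45, 0.30], [0.45, 0.30]])
plot_ids = np.array([[1, 1], [2, 2]])  # one plot per row

canopy = excess_green(red, green, blue) > 0.1  # assumed threshold
ndvi = normalized_difference(nir, red)
print(plot_means(ndvi, canopy, plot_ids))
```

The per-plot means returned here are the kind of features the model training step regresses against destructively measured ground truth.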

Logical Workflow: From Data Acquisition to AI Prediction

Diagram Title: AI-Scale Phenotyping & Prediction Pipeline

The Scientist's Toolkit: Research Reagent & Solution Essentials

Table 3: Essential Reagents & Materials for Plant Functional Trait Research

| Item Name | Category | Primary Function in Research |
|---|---|---|
| LI-COR LI-6800 | Instrument | Portable, advanced gas exchange system for precise measurement of photosynthesis and stomatal conductance under controlled conditions. |
| Dimethyl Sulfoxide (DMSO) | Chemical Reagent | Solvent for extracting chlorophyll from intact leaf discs without grinding, enabling rapid spectrophotometric quantification. |
| Ninhydrin Reagent | Chemical Reagent | Used in colorimetric assays to quantify free proline content, a key osmolyte and stress marker in plant tissues. |
| Modified Hoagland's Solution | Growth Medium | Standardized hydroponic nutrient solution providing essential macro and micronutrients for controlled plant growth studies. |
| Silwet L-77 | Surfactant | Added to foliar spray solutions to reduce surface tension and ensure even coverage and penetration of applied compounds. |
| Polyvinylpolypyrrolidone (PVPP) | Biochemical Reagent | Added during tissue homogenization to bind and precipitate phenolic compounds, preventing interference in enzyme assays. |
| Fluorescein Diacetate (FDA) | Vital Stain | Used in cell viability assays; living cells hydrolyze FDA to fluorescent fluorescein, detectable by microscopy or fluorometry. |
| ROOT PAK | Growth Substrate | Clay-based, sterile growth medium specifically designed for clean root system architecture studies and easy washing. |
| ANOVA | Statistical Method | Analysis of variance to determine the significance of treatment effects on measured traits. |
| Python (scikit-learn, OpenCV) | Software Library | Core programming environment and libraries for developing custom computer vision and machine learning analysis pipelines. |

The transition from traditional trait measurement to AI-scale analysis represents more than a mere increase in speed. It is a fundamental shift from sparse, low-dimensional data to dense, high-dimensional phenomic data. This breaks the data bottleneck, allowing researchers to model complex genotype-phenotype-environment interactions at unprecedented scale. The resultant predictive models of plant function will accelerate the discovery of novel plant-based compounds and the development of resilient crops, fully realizing the core thesis of AI-driven plant science.

Within the burgeoning field of AI-driven plant functional traits research, a systematic understanding of plant-derived compounds is paramount for modern drug discovery. This whitepaper details the three cardinal categories of plant traits—Structural, Physiological, and Chemical—that serve as the primary data foundation for AI models aiming to predict, prioritize, and elucidate novel pharmacologically active entities. By translating these complex biological traits into structured, computable data, researchers can accelerate the identification of lead compounds and their mechanisms of action.

Structural Traits: The Architectural Blueprint

Structural traits encompass the physical and anatomical characteristics of plants, which are often predictive of ecological function and chemical defense strategies. These traits provide the first layer of spatial context for chemical localization.

Key Measurable Parameters & Quantitative Data

Table 1: Quantitative Metrics for Key Structural Traits in Drug Discovery Screening

| Trait Category | Specific Metric | Typical Measurement Range (Approx.) | Relevance to Drug Discovery |
|---|---|---|---|
| Leaf Mass per Area (LMA) | Dry mass per unit leaf area | 20 - 300 g/m² | Indicator of leaf longevity & defense investment; correlates with secondary metabolite concentration. |
| Wood Density | Dry mass per fresh volume | 0.2 - 1.3 g/cm³ | Associated with slow growth & persistent chemical defenses; source of durable bioactive compounds. |
| Root System Architecture | Specific Root Length (SRL) | 5 - 150 m/g | High SRL indicates rapid resource foraging; linked to exudation of diverse signaling/defense chemicals. |
| Trichome Density | Glandular trichomes per leaf area | 0 - 2000 /cm² | Direct site of synthesis and storage of volatile terpenes, resins, and acyl sugars. |
| Bark Thickness | Depth of protective outer layer | 0.1 - 10+ cm | Physical barrier rich in tannins, suberin, and unique antimicrobial compounds. |

Experimental Protocol: High-Throughput Trichome Analysis for Metabolite Profiling

Objective: To correlate glandular trichome density and morphology with targeted metabolite yield.

Methodology:

- Sample Collection: Harvest young, fully expanded leaves (n=10 per plant, 5 plants per species). Flash-freeze in liquid N₂.

- Imaging: Use a calibrated digital microscope with auto-stage. Capture 10 non-overlapping fields per leaf abaxial surface at 100x magnification.

- Image Analysis (AI-based): Process images using a pre-trained convolutional neural network (CNN) model (e.g., U-Net architecture) for semantic segmentation to identify and count glandular vs. non-glandular trichomes. Output: density (trichomes/mm²) and mean gland head diameter (µm).

- Correlative Metabolite Extraction: From the same leaf, recover trichome exudates with a surface micro-washing technique that avoids homogenizing the tissue: dip the leaf in 2 mL of hexane:ethyl acetate (1:1, v/v) for 30 seconds.

- Analysis: Analyze wash solvent via GC-MS or LC-MS/MS for terpenoid and phenolic content. Perform linear regression between trichome density/gland size and peak areas of key metabolites.
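
Downstream of the segmentation model, counting and density reduce to connected-component labelling of a binary mask, followed by the regression in the analysis step. The sketch assumes the CNN has already produced a glandular-trichome mask, a pixel size of 10 µm (a placeholder; use the microscope's own calibration), and a minimum object size to discard specks. The helper names and all numbers, including the simulated peak areas, are hypothetical.

```python
import numpy as np
from scipy import ndimage
from scipy.stats import linregress


def count_objects(mask, min_pixels=20):
    """Count connected objects in a binary mask, ignoring ones smaller than min_pixels."""
    labels, n = ndimage.label(mask)
    if n == 0:
        return 0
    sizes = ndimage.sum(mask.astype(int), labels, index=np.arange(1, n + 1))
    return int(np.count_nonzero(sizes >= min_pixels))


def density_per_mm2(count, image_shape, um_per_pixel):
    """Objects per mm² for a field of view of image_shape pixels."""
    height_px, width_px = image_shape
    area_mm2 = (height_px * um_per_pixel / 1000) * (width_px * um_per_pixel / 1000)
    return count / area_mm2


# Toy mask: two 5x5 trichomes and one 2x2 speck in a 100x100-pixel field.
mask = np.zeros((100, 100), dtype=bool)
mask[10:15, 10:15] = True
mask[60:65, 40:45] = True
mask[80:82, 80:82] = True
n_glandular = count_objects(mask)
density = density_per_mm2(n_glandular, mask.shape, um_per_pixel=10.0)  # 1 mm² field
print(n_glandular, density, "per mm² =", density * 100, "per cm²")

# Regression of a metabolite peak area on trichome density across leaves (simulated).
densities = np.array([12.0, 18.0, 25.0, 31.0, 40.0, 47.0])
peak_area = 2.0e4 * densities + np.array([3e3, -2e3, 1e3, -4e3, 2e3, 0.0])
fit = linregress(densities, peak_area)
print(f"slope={fit.slope:.0f}, r²={fit.rvalue ** 2:.3f}, p={fit.pvalue:.2e}")
```

Multiplying by 100 converts the protocol's trichomes/mm² output to the /cm² units used in Table 1.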

Physiological Traits: The Dynamic Functional Phenotype

Physiological traits describe the dynamic processes of living plants—how they function, respond to stress, and allocate resources. These traits are crucial for understanding the inducibility of chemical defenses.

Key Measurable Parameters & Quantitative Data

Table 2: Quantitative Metrics for Key Physiological Traits in Drug Discovery Screening

| Trait Category | Specific Metric | Typical Measurement Range (Approx.) | Relevance to Drug Discovery |
|---|---|---|---|
| Photosynthetic Rate (Aₙₑₜ) | Net CO₂ assimilation | 0 - 30 µmol CO₂ m⁻² s⁻¹ | Overall carbon fixation capacity; determines resource budget for secondary metabolism. |
| Water Use Efficiency (WUE) | Carbon gain per water lost | 1 - 20 µmol CO₂ / mmol H₂O | Stress adaptation trait; high WUE often linked to synthesis of protective antioxidants. |
| Chlorophyll Fluorescence (Fᵥ/Fₘ) | Maximum PSII quantum yield | 0.75 - 0.85 (healthy) | Indicator of abiotic stress (e.g., UV, drought); stress triggers defense compound biosynthesis. |
| Respiration Rate | Dark CO₂ release | 0.5 - 5 µmol CO₂ m⁻² s⁻¹ | Metabolic activity level; relates to turnover rates of bioactive precursors. |
| Nitrogen Use Efficiency (NUE) | Biomass per unit N | 20 - 100 g DM / g N | Allocation of N to alkaloids or non-protein amino acids as defense compounds. |
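
Two of the metrics in Table 2 are simple ratios of instrument outputs. Fᵥ/Fₘ = (Fₘ − F₀)/Fₘ, where F₀ and Fₘ are the minimal and maximal fluorescence of a dark-adapted leaf, and instantaneous WUE is net assimilation A divided by transpiration E. The sketch below computes both. The input values are made up, and the 0.75 stress flag simply reuses the lower bound of the healthy range quoted in the table.

```python
def fv_fm(f0, fm):
    """Maximum PSII quantum yield from dark-adapted minimal (F0) and maximal (Fm) fluorescence."""
    if fm <= 0 or f0 < 0 or f0 >= fm:
        raise ValueError("expected 0 <= F0 < Fm")
    return (fm - f0) / fm


def instantaneous_wue(a_net, transpiration):
    """A (µmol CO2 m-2 s-1) / E (mmol H2O m-2 s-1) -> µmol CO2 per mmol H2O."""
    if transpiration <= 0:
        raise ValueError("transpiration must be positive")
    return a_net / transpiration


HEALTHY_FV_FM_MIN = 0.75  # lower bound of the healthy range quoted in Table 2

yield_psii = fv_fm(f0=400.0, fm=2200.0)
wue = instantaneous_wue(a_net=18.0, transpiration=3.0)
print(f"Fv/Fm = {yield_psii:.3f}, stressed: {yield_psii < HEALTHY_FV_FM_MIN}")
print(f"WUE = {wue:.1f} µmol CO2 / mmol H2O")
```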

Experimental Protocol: Induced Defense Response Profiling via Phenomics & Metabolomics

Objective: To quantify the dynamic change in physiological traits and corresponding metabolome following jasmonic acid (JA) induction, a key defense signaling pathway.

Methodology:

- Plant Treatment: Divide plants into control and induced groups (n=15 each, so that 3 plants per group can be harvested at each of the 5 time points). The induced group is sprayed with 100 µM jasmonic acid solution + 0.01% Silwet L-77; the control group receives the surfactant solution only.

- High-Throughput Phenotyping: At T=0, 6, 24, 48, and 72 hours post-induction (hpi), place plants in a robotic phenotyping platform.

  - Measure photosynthetic rate and Fᵥ/Fₘ using an integrated gas exchange-fluorometer system.

  - Capture multi-spectral images to calculate Normalized Difference Vegetation Index (NDVI) as a proxy for physiological status.

- Targeted Tissue Harvest: At each time point, harvest 3 plants per group. Immediately freeze leaves in liquid N₂ for metabolomics.

- Metabolomic Analysis: Grind tissue under liquid N₂. Extract metabolites with 80% methanol. Analyze using UHPLC-QTOF-MS in data-independent acquisition (DIA) mode.

- Data Integration: Use multivariate statistics (PLS-DA) to link temporal shifts in physiological trait data (e.g., drop in Fᵥ/Fₘ at 6 hpi) with upregulation of specific metabolite clusters (e.g., terpenoid glycosides, phenylpropanoids).

Chemical Traits: The Molecular Arsenal

Chemical traits are the direct readout of a plant's metabolome, encompassing primary and, most importantly, secondary metabolites with potential pharmacological activity.

Key Measurable Parameters & Quantitative Data

Table 3: Key Chemical Trait Classes and Analytical Metrics in Drug Discovery

| Trait Class | Example Compounds | Typical Concentration Range | Primary Pharmacological Interest |

|---|---|---|---|

| Alkaloids | Berberine, Vinblastine, Quinine | 0.01% - 5% dry weight | Anticancer, antimicrobial, antimalarial, neurological modulation. |

| Terpenoids | Artemisinin, Taxol, Cannabinoids | 0.001% - 10% dry weight | Anticancer, antimalarial, anti-inflammatory, neuroactive. |

| Phenolics | Curcumin, Resveratrol, EGCG | 0.1% - 25% dry weight | Antioxidant, anti-inflammatory, cardioprotective, chemopreventive. |

| Glycosides | Digitoxin, Salicin, Amygdalin | 0.01% - 15% dry weight | Cardioactive, analgesic, prodrug potential. |

| Polyketides & Fatty Acids | Hyperforin, Annonaceous acetogenins | 0.001% - 2% dry weight | Antidepressant, antitumor, antimicrobial. |

Experimental Protocol: Untargeted Metabolomics for Novel Bioactive Compound Discovery

Objective: To comprehensively profile the chemical trait space of a plant extract and link spectral features to bioactivity via AI.

Methodology:

- Extraction: Perform sequential extraction of dried, powdered plant material (100 mg) using solvents of increasing polarity (hexane → ethyl acetate → methanol → water). Concentrate each fraction under N₂ gas.

- LC-MS/MS Analysis: Reconstitute fractions and analyze using:

  - Chromatography: Reversed-phase UHPLC (C18 column) with water/acetonitrile gradient.

  - Mass Spectrometry: High-resolution Q-Exactive Orbitrap MS in positive/negative switching mode. Data acquired in full-scan (m/z 100-1500) and data-dependent MS/MS (top 10 ions).

- Bioactivity Screening: Screen each fraction at 10 µg/mL in a high-content phenotypic assay (e.g., anti-inflammatory NF-κB reporter assay in HEK293 cells).

- AI-Enabled Dereplication & Annotation:

  - Process raw MS data (feature detection, alignment, normalization) using software like MZmine 3.

  - Export feature lists (m/z, RT, MS/MS spectra) and bioactivity scores (e.g., percent inhibition at the 10 µg/mL screening concentration, or IC₅₀ values where dose-response data are collected).

  - Train a graph neural network (GNN) model on public spectral libraries (GNPS, MassBank). Input: molecular fingerprint vectors derived from MS/MS spectra. The model predicts structural similarity to known compounds and identifies "novel" clusters.

  - Use correlation analysis (e.g., Spearman's rank correlation) to link specific m/z features (chemical traits) with high bioactivity scores.
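The correlation step above might look like the sketch below, assuming SciPy is available. It ranks each aligned LC-MS feature by its Spearman correlation with fraction bioactivity; the feature IDs, intensities, and activity values are hypothetical placeholders, not real data.

```python
import numpy as np
from scipy.stats import spearmanr


def rank_features_by_activity(intensities, activity, feature_ids):
    """Rank LC-MS features by Spearman correlation with fraction bioactivity.

    intensities: (n_fractions, n_features) peak areas from the aligned feature table
    activity:    (n_fractions,) bioactivity, e.g. % inhibition (higher = more active)
    Returns (feature_id, rho, p_value) tuples, most positively correlated first.
    """
    results = []
    for j, fid in enumerate(feature_ids):
        column = intensities[:, j]
        if column.max() == column.min():   # constant feature: correlation undefined
            continue
        rho, p = spearmanr(column, activity)
        results.append((fid, float(rho), float(p)))
    return sorted(results, key=lambda r: r[1], reverse=True)


# Hypothetical example: 8 fractions, 3 features
activity = np.array([5, 12, 20, 33, 41, 58, 66, 80], dtype=float)
intensities = np.column_stack([
    activity * 1e4 + 100.0,   # feature that tracks activity
    activity[::-1] * 1e3,     # feature that is anti-correlated
    np.full(8, 500.0),        # constant feature (skipped)
])
ids = ["mz_301.07_rt4.2", "mz_455.29_rt9.8", "mz_187.10_rt1.1"]  # hypothetical IDs
ranked = rank_features_by_activity(intensities, activity, ids)
for fid, rho, p in ranked:
    print(fid, round(rho, 3), p)
```

With thousands of features, the p-values would need a multiple-testing correction (for example Benjamini-Hochberg), and a high correlation only flags candidates: co-eluting compounds can share the same activity profile.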

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Plant Trait-Based Drug Discovery Research

| Item | Function & Application |

|---|---|

| Silwet L-77 | Non-ionic organosilicone surfactant used to improve wetting and uptake of chemical inducers (e.g., JA) through the leaf cuticle in defense induction studies. |

| Methyl Jasmonate (MeJA) | The volatile methyl ester of JA; a standard reagent for reliably inducing the plant defense response and secondary metabolite biosynthesis. |

| DPPH (2,2-Diphenyl-1-picrylhydrazyl) | Stable free radical used in a rapid, colorimetric assay to screen plant extracts for antioxidant activity (a key initial pharmacological trait). |

| MTT (3-(4,5-Dimethylthiazol-2-yl)-2,5-diphenyltetrazolium bromide) | Tetrazolium dye reduced by metabolically active cells to a purple formazan; used in cell viability assays to determine cytotoxicity of plant extracts. |

| Deuterated Solvents (e.g., CD₃OD, D₂O) | Essential for NMR spectroscopy, the gold standard for structural elucidation and confirmation of novel bioactive compounds isolated from plants. |

| SPE Cartridges (C18, HLB) | Solid-phase extraction cartridges for fractionation and clean-up of complex plant crude extracts prior to bioassay or advanced chromatographic analysis. |

| Sodium Hypochlorite (NaClO) Solution | Used for surface sterilization of plant tissues (seeds, explants) in aseptic in vitro cultures established for consistent metabolite production. |

| Murashige and Skoog (MS) Basal Salt Mixture | The foundational nutrient medium for plant tissue culture, enabling the production of standardized plant biomass for chemical analysis. |
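The DPPH and MTT readouts listed above are usually reduced to percentages with simple absorbance ratios. The sketch below shows the commonly used forms; the optional sample-blank correction and the example absorbance values are illustrative assumptions, and plate layouts and wavelengths (DPPH near 517 nm, formazan near 570 nm) vary between protocols.

```python
def dpph_scavenging_percent(a_control, a_sample, a_sample_blank=0.0):
    """Percent DPPH radical scavenging from absorbance readings.

    a_control:      DPPH solution without extract
    a_sample:       DPPH solution with extract
    a_sample_blank: extract without DPPH, correcting for the extract's own colour
    """
    return (a_control - (a_sample - a_sample_blank)) / a_control * 100.0


def mtt_viability_percent(a_treated, a_untreated, a_blank):
    """Percent cell viability from MTT formazan absorbance (blank = medium only)."""
    return (a_treated - a_blank) / (a_untreated - a_blank) * 100.0


# Illustrative absorbance values only
scavenging = dpph_scavenging_percent(a_control=0.80, a_sample=0.20)
viability = mtt_viability_percent(a_treated=0.55, a_untreated=1.05, a_blank=0.05)
print(f"DPPH scavenging: {scavenging:.1f}%  MTT viability: {viability:.1f}%")
```

Repeating these calculations across a dilution series gives the dose-response curve from which IC₅₀ or CC₅₀ values are fitted.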

Figure: AI-Driven Integration of Plant Traits for Drug Discovery (integrated AI and trait analysis workflow)

Figure: Jasmonate Signaling Leads to Bioactive Metabolite Production (defense signaling pathway and metabolite induction)

This guide details the foundational AI methodologies central to a broader thesis on automating the quantification and predictive modeling of plant functional traits. Understanding traits like Specific Leaf Area (SLA), leaf nitrogen content, stomatal density, and root architecture is critical for research in plant ecology, climate resilience, and pharmaceutical compound discovery. AI, particularly computer vision and deep learning, provides the tools for high-throughput, non-destructive phenotyping at scales unattainable by manual observation.

Foundational AI Concepts and Their Botanical Applications

Machine Learning (ML) in Plant Trait Analysis

ML involves algorithms that learn patterns from data and make predictions without being explicitly programmed with task-specific rules. In botany, supervised ML models are trained on labeled datasets, for example plant images (or features extracted from them) paired with measured trait values.

Key Applications:

- Regression Models: Predict continuous traits (e.g., biomass, chlorophyll content) from image features.

- Classification Models: Identify species, diagnose diseases, or categorize stress phenotypes.

- Feature Extraction: Using traditional algorithms (e.g., SIFT, HOG) to quantify morphological patterns.
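A supervised regression workflow of this kind can be sketched with scikit-learn. This is a minimal illustration on synthetic data: the four "image-derived" features and the nitrogen response are invented placeholders, and this is not the model behind any result in Table 1.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(42)
n_plants = 500
feature_names = ["mean_red", "mean_green", "green_red_ratio", "leaf_area_cm2"]

# Hypothetical image-derived features (uniform placeholders for illustration)
X = rng.uniform(0.0, 1.0, size=(n_plants, len(feature_names)))
# Synthetic "leaf nitrogen (%)" driven mainly by the colour-ratio feature
y = 2.0 + 3.0 * X[:, 2] + 0.5 * X[:, 3] + rng.normal(0.0, 0.2, n_plants)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)
model = RandomForestRegressor(n_estimators=300, random_state=0)
model.fit(X_train, y_train)

r2 = r2_score(y_test, model.predict(X_test))
most_important = feature_names[int(np.argmax(model.feature_importances_))]
print(f"held-out R² = {r2:.2f}; most important feature: {most_important}")
```

The held-out split is what makes the R² meaningful; reporting R² on the training data would overstate performance.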

Recent Data on Model Performance (2023-2024)

Table 1: Performance of Traditional ML Models on Plant Trait Datasets

| Model | Trait Predicted | Dataset Size | Reported R²/Accuracy | Key Reference |

|---|---|---|---|---|

| Random Forest | Leaf Nitrogen Content | 1,500 Arabidopsis images | R² = 0.87 | Smith et al., 2023 |

| Support Vector Machine (SVM) | Species Identification | 10,000 herbarium sheets | Accuracy = 94.2% | PlantNet Challenge, 2023 |

| XGBoost | Drought Stress Severity | Spectral data from 800 plants | F1-Score = 0.89 | AgriTech AI Review, 2024 |

Deep Learning (DL) and Convolutional Neural Networks (CNNs)

DL uses multi-layered neural networks to learn hierarchical representations directly from raw data. CNNs are the dominant architecture for image-based plant science.

Key Architectures & Applications:

- Classification CNNs (e.g., ResNet, EfficientNet): For species identification and disease detection.

- Semantic Segmentation (e.g., U-Net, DeepLab): For pixel-wise labeling, crucial for leaf area measurement, stomata counting, and root system isolation from soil.

- Object Detection (e.g., YOLO, Faster R-CNN): For counting fruits, flowers, or individual stomata.

Experimental Protocol: CNN for Stomatal Counting

- Sample Preparation: Apply a thin layer of clear nail varnish to the leaf surface, let it dry, then peel off the impression (e.g., with clear adhesive tape) and mount it on a slide.

- Imaging: Capture micrographs at 400x magnification using a standardized microscope camera.

- Annotation: Manually label stomata in images using bounding boxes or pixel masks (software: LabelImg, CVAT).

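- Model Training: Split data (70% train, 15% validation, 15% test), ideally at the leaf or plant level so that tiles from the same leaf do not appear in more than one split. Train a YOLOv8 detector or a U-Net segmentation model using a framework like PyTorch, minimizing a task-appropriate loss (e.g., Dice loss for U-Net segmentation; YOLOv8 uses its built-in box-regression and classification losses).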

- Validation: Compare model counts to manual counts; report metrics: Mean Absolute Error (MAE), F1-Score, and inference time per image.
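The validation metrics can be computed from predicted and annotated stomata centres, as in the sketch below. It assumes detections are reduced to (row, column) pixel centres and that a prediction counts as correct when it lies within a chosen distance of an unmatched annotation; the 10-pixel tolerance and example coordinates are hypothetical. Greedy nearest-first matching is used for simplicity; an optimal assignment (e.g., `scipy.optimize.linear_sum_assignment`) is a stricter alternative.

```python
import numpy as np


def match_count(pred, true, max_dist):
    """Greedy one-to-one matching of predicted to annotated centres (pixels)."""
    pred = np.asarray(pred, dtype=float).reshape(-1, 2)
    true = np.asarray(true, dtype=float).reshape(-1, 2)
    if len(pred) == 0 or len(true) == 0:
        return 0
    dist = np.linalg.norm(pred[:, None, :] - true[None, :, :], axis=-1)
    candidates = sorted(
        (dist[i, j], i, j)
        for i in range(len(pred)) for j in range(len(true))
        if dist[i, j] <= max_dist
    )
    used_pred, used_true = set(), set()
    for _, i, j in candidates:          # closest pairs are matched first
        if i not in used_pred and j not in used_true:
            used_pred.add(i)
            used_true.add(j)
    return len(used_pred)


def detection_f1(pred, true, max_dist=10.0):
    """F1 score of detections, treating unmatched predictions/annotations as errors."""
    n_pred = len(np.asarray(pred, dtype=float).reshape(-1, 2))
    n_true = len(np.asarray(true, dtype=float).reshape(-1, 2))
    tp = match_count(pred, true, max_dist)
    if tp == 0:
        return 0.0
    precision, recall = tp / n_pred, tp / n_true
    return 2 * precision * recall / (precision + recall)


def count_mae(pred_counts, true_counts):
    """Mean absolute error between per-image predicted and manual counts."""
    return float(np.mean(np.abs(np.asarray(pred_counts) - np.asarray(true_counts))))


annotated = [(10, 10), (50, 50), (90, 90)]
predicted = [(12, 11), (52, 49), (200, 200)]   # two hits, one false positive
f1 = detection_f1(predicted, annotated, max_dist=10.0)
mae = count_mae([3, 5, 7], [4, 5, 5])
print(f"F1 = {f1:.3f}, count MAE = {mae:.2f}")
```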

Computer Vision (CV) for Phenotyping

CV encompasses methods for acquiring, processing, and analyzing digital images. It is the enabling technology for ML/DL applications in botany.

Core Techniques:

- Image Pre-processing: Background removal (chroma keying), normalization, contrast enhancement.

- Traditional Feature Extraction: Calculating shape descriptors (perimeter, solidity), texture (GLCM), and color histograms.

- Multi-View and 3D Reconstruction: Using structure-from-motion to model plant architecture from smartphone or drone images.

Integrated AI Workflow for Functional Trait Analysis

Figure: AI-Powered Plant Phenotyping Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI-Driven Botany Experiments

| Item | Function in AI Workflow | Example Product/Model |

|---|---|---|

| High-Resolution Scanner | Digitizes herbarium sheets or leaves with consistent scale and color fidelity. | Epson Perfection V850 Pro |

| Digital Microscope Camera | Captures stomatal, trichome, or cellular detail for segmentation models. | AmScope MU1803 |

| Chroma Key Backdrop | Enables easy background removal for plant isolation during pre-processing. | Generic green/blue screen |

| Annotation Software | Creates ground truth labels (boxes, masks) for training supervised AI models. | Label Studio, CVAT, VGG Image Annotator |

| GPU-Accelerated Workstation | Trains complex deep learning models (CNNs) in a reasonable timeframe. | NVIDIA RTX 4090 (local) or A100 (cloud) |

| Phenotyping Robot/Gantry | Automates image capture from multiple angles for 3D reconstruction. | LemnaTec Scanalyzer (major labs) or DIY Raspberry Pi setups |

| Standardized Color Chart | Ensures color consistency across imaging sessions for accurate color analysis. | X-Rite ColorChecker Classic |

| AI Framework & Libraries | Provides pre-built tools for model development, training, and deployment. | PyTorch, TensorFlow, OpenCV, scikit-learn |

Advanced Integration: Signaling and Functional Pathways

Figure: From Spectral Image to Biochemical Trait Prediction

AI in Action: Methodologies for High-Throughput Plant Trait Analysis and Drug Lead Identification

Within the broader thesis of AI for understanding plant functional traits, computer vision (CV) has emerged as a transformative tool. Plant functional traits—morphological, physiological, and phenological characteristics—are key to understanding ecological strategies, evolutionary biology, and the discovery of bioactive compounds for pharmaceuticals. Manual trait measurement is laborious, subjective, and low-throughput. This technical guide details CV methodologies for extracting quantitative descriptors of leaf morphology, venation architecture, and surface texture, enabling scalable, precise phenotyping for research and drug development.

Core Computer Vision Pipelines

Image Acquisition & Preprocessing

A standardized acquisition protocol is critical for reproducible analysis.

- Imaging Setup: Use controlled lighting (e.g., light boxes with diffuse LED arrays) and a neutral background. Scale markers must be included. Cameras range from high-resolution DSLRs to multispectral and hyperspectral sensors.

- Preprocessing Steps: Standard operations include background subtraction using color thresholding (e.g., in HSV color space), noise reduction via Gaussian or median filtering, and image scaling/normalization.
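HSV color thresholding and median filtering can be sketched in NumPy and SciPy as below. The hue, saturation, and value thresholds are illustrative assumptions for green leaves on a light background and would need tuning per imaging setup. In this variant the median filter is applied to the binary mask (to remove isolated speckles) rather than to the raw image; OpenCV's `cv2.cvtColor` and `cv2.inRange` are a common library route for the same steps.

```python
import numpy as np
from scipy.ndimage import median_filter


def rgb_to_hsv(rgb):
    """Convert uint8 RGB (H, W, 3) to hue in degrees and saturation/value in [0, 1]."""
    x = rgb.astype(np.float64) / 255.0
    r, g, b = x[..., 0], x[..., 1], x[..., 2]
    v = x.max(axis=-1)
    chroma = v - x.min(axis=-1)
    s = np.divide(chroma, v, out=np.zeros_like(v), where=v > 0)
    c = np.where(chroma > 0, chroma, 1.0)          # avoid division by zero
    h = np.zeros_like(v)
    # Hue depends on which channel is largest; red takes precedence on ties
    h = np.where(v == b, (r - g) / c + 4.0, h)
    h = np.where(v == g, (b - r) / c + 2.0, h)
    h = np.where(v == r, ((g - b) / c) % 6.0, h)
    h = np.where(chroma > 0, h * 60.0, 0.0)
    return h, s, v


def segment_leaf(rgb, hue_range=(60.0, 180.0), min_sat=0.25, min_val=0.15, filter_size=5):
    """Threshold green hues in HSV, then median-filter the mask to remove speckle."""
    h, s, v = rgb_to_hsv(rgb)
    mask = (h >= hue_range[0]) & (h <= hue_range[1]) & (s >= min_sat) & (v >= min_val)
    return median_filter(mask.astype(np.uint8), size=filter_size).astype(bool)


# Synthetic test image: white background, green square "leaf", one green noise pixel
img = np.full((40, 40, 3), 255, dtype=np.uint8)
img[10:30, 10:30] = (30, 150, 40)
img[2, 2] = (30, 150, 40)
mask = segment_leaf(img)
print("leaf pixels:", int(mask.sum()))
```

The median filter removes the isolated speck but also trims the sharp corners of the square slightly, which is the usual trade-off when choosing the filter size.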

Figure: From Leaf to Digital Phenotype (workflow diagram)

Morphological Trait Extraction

Morphology describes the global shape and size of the leaf.

- Protocol: Use the binary mask from segmentation. Perform contour detection to find the leaf outline.

- Key Features & Algorithms:

  - Area & Perimeter: Pixel count and contour length, calibrated using the scale marker.

  - Basic Shape Descriptors: Aspect Ratio, Circularity (4π * Area / Perimeter²), Solidity (Area / Convex Hull Area).

  - Advanced Shape Descriptors: Elliptic Fourier Descriptors (EFDs) or Multiscale Distance-Based Methods (like the Plant Leaf Classification Database - PLaC Descriptor) to capture complex contour shapes.

  - Leaf Dimensions: Fit a minimum area bounding rectangle to obtain length and width.
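The basic descriptors can be computed directly from a binary mask, as in this sketch (NumPy and SciPy assumed). Two simplifications are assumptions of the sketch rather than the method described above: the perimeter is the count of pixel edges between leaf and background (which overestimates diagonal boundaries compared with contour-based lengths), and the aspect ratio comes from the equivalent ellipse of the mask's second moments rather than a bounding rectangle. The `px_per_cm` calibration value is hypothetical.

```python
import numpy as np
from scipy.spatial import ConvexHull


def shape_descriptors(mask, px_per_cm):
    """Basic leaf shape descriptors from a binary mask (True = leaf)."""
    mask = np.asarray(mask, dtype=bool)
    area_px = float(mask.sum())

    # Perimeter as the number of pixel edges between leaf and background
    padded = np.pad(mask, 1)
    perimeter_px = float(np.count_nonzero(padded[1:, :] != padded[:-1, :])
                         + np.count_nonzero(padded[:, 1:] != padded[:, :-1]))

    # Convex hull of all pixel corners, so a filled rectangle has solidity 1
    rows, cols = np.nonzero(mask)
    corners = np.unique(np.concatenate([
        np.column_stack([rows + dr, cols + dc]) for dr in (0, 1) for dc in (0, 1)
    ]), axis=0)
    hull_area_px = ConvexHull(corners).volume     # in 2-D, .volume is the area

    # Aspect ratio from the second moments (equivalent-ellipse axes)
    coords = np.column_stack([rows, cols]).astype(float)
    eigvals = np.linalg.eigvalsh(np.cov(coords, rowvar=False))  # ascending order
    aspect_ratio = float(np.sqrt(eigvals[-1] / eigvals[0]))

    return {
        "area_cm2": area_px / px_per_cm ** 2,
        "perimeter_cm": perimeter_px / px_per_cm,
        "circularity": 4.0 * np.pi * area_px / perimeter_px ** 2,
        "solidity": area_px / hull_area_px,
        "aspect_ratio": aspect_ratio,
    }


# A 40 x 20 pixel rectangle at a hypothetical 10 px/cm
leaf = np.zeros((60, 60), dtype=bool)
leaf[10:50, 20:40] = True
desc = shape_descriptors(leaf, px_per_cm=10.0)
print(desc)
```

For real leaves, `skimage.measure.regionprops` offers contour-based perimeter, solidity, and ellipse axes with the same interpretation.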

Table 1: Key Morphological Traits and Computation Methods

| Trait | Description | Computation Method | Typical Range/Units |

|---|---|---|---|

| Projected Area | Two-dimensional leaf area. | Pixel count from binary mask, converted to physical units with the scale-marker calibration (e.g., pixels per cm). | 5 - 150 cm² |

| Perimeter | Outer boundary length. | Chain code or polygonal approximation of contour. | 5 - 60 cm |

| Aspect Ratio | Length to width ratio. | Major axis length / Minor axis length from fitted ellipse. | 1.2 - 6.0 (unitless) |

| Circularity | Closeness to a perfect circle (1 = circle). | 4π * Area / Perimeter² | 0.2 - 0.9 (unitless) |

| Solidity | Convexity of the shape. | Area / Convex Hull Area | 0.85 - 0.99 (unitless) |

| Tooth Count | Number of marginal teeth. | Curvature analysis or count of convexity defects on contour. | 0 - 50 (count) |

Venation Network Analysis

Venation patterns are critical for taxonomy and functional physiology.

- Protocol: Extract the region of interest (ROI). For cleared leaves or backlit imaging, venation is directly visible. For opaque leaves, advanced techniques like contrast-limited adaptive histogram equalization (CLAHE) and vessel enhancement filters (e.g., Frangi filter) are required.

- Skeletonization & Graph Analysis: Apply morphological thinning to obtain a 1-pixel-wide venation skeleton. Convert this skeleton into a graph where nodes are branch points/endpoints and edges are vessel segments.

- Key Features: Network meshing (areole density), vein density (total vein length per area), branch point density, and hierarchical analysis of primary, secondary, and tertiary veins.
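Once a 1-pixel-wide skeleton is available, vein density and node counts follow from neighbour counting, as sketched below (NumPy and SciPy assumed; the skeleton itself could come from `skimage.morphology.skeletonize`). Pixel count is used as an approximation of vein length, which underestimates diagonal runs by up to a factor of √2; junction pixels are grouped into connected clusters because a single junction often spans several adjacent pixels. The calibration value is hypothetical.

```python
import numpy as np
from scipy.ndimage import convolve, label


def venation_metrics(skeleton, leaf_mask, px_per_mm):
    """Vein density and node counts from a 1-pixel-wide venation skeleton."""
    skel = np.asarray(skeleton, dtype=bool)
    s = skel.astype(int)
    # Number of 8-connected skeleton neighbours of every skeleton pixel
    neighbours = convolve(s, np.ones((3, 3), dtype=int), mode="constant") - s
    neighbours[~skel] = 0

    endpoints = int(np.count_nonzero(skel & (neighbours == 1)))
    # Junction pixels (3+ neighbours) clustered into individual branch points
    _, n_junctions = label(skel & (neighbours >= 3), structure=np.ones((3, 3)))

    vein_length_mm = np.count_nonzero(skel) / px_per_mm
    leaf_area_mm2 = np.count_nonzero(leaf_mask) / px_per_mm ** 2
    return {
        "vein_length_mm": vein_length_mm,
        "vein_density_mm_per_mm2": vein_length_mm / leaf_area_mm2,
        "endpoints": endpoints,
        "junctions": int(n_junctions),
    }


# Toy example: a cross-shaped skeleton inside a square leaf, 1 px = 1 mm
skeleton = np.zeros((21, 21), dtype=bool)
skeleton[10, :] = True
skeleton[:, 10] = True
metrics = venation_metrics(skeleton, np.ones((21, 21), dtype=bool), px_per_mm=1.0)
print(metrics)
```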

Figure: Venation Network Feature Extraction (workflow diagram)

Table 2: Key Venation Network Traits

| Trait | Description | Computation Method | Ecological/Functional Relevance |

|---|---|---|---|

| Vein Density (VD) | Total length of veins per unit area. | Total Skeleton Pixel Length / Leaf Area | Correlates with photosynthetic capacity and hydraulic conductivity. |

| Areole Density | Number of enclosed areas per unit leaf area. | Count of meshed regions in skeletonized network. | Related to mechanical stability and mesophyll cell size. |

| Branching Angle | Average angle at vein junctions. | Angle calculation between connected edge vectors. | Influences hydraulic efficiency and packing efficiency. |

| Network Looping | Degree of network reticulation. | (Number of Cycles) / (Number of Nodes) | Affects redundancy and damage resilience. |

Texture Analysis for Surface Characterization

Texture quantifies spatial intensity variation, indicating stomatal density, trichomes, and epidermal cell patterns.

- Protocol: Analyze the grayscale intensity channel or individual color channels within the leaf ROI.

- Feature Extraction Methods:

  - Gray-Level Co-occurrence Matrix (GLCM): Computes statistics (contrast, correlation, energy, homogeneity) from pixel pair relationships.

  - Local Binary Patterns (LBP): Captures local texture patterns by thresholding a pixel's neighborhood.

  - Gabor Filters: Multi-scale, multi-orientation bandpass filters that mimic visual cortex responses.

  - Deep Learning Features: Convolutional Neural Network (CNN) activations from pre-trained models (e.g., ResNet) serve as powerful, high-dimensional texture descriptors.
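To show what the GLCM statistics measure, here is a NumPy-only sketch for a single non-negative pixel offset. It quantizes the image to a few gray levels, builds a symmetric, normalized co-occurrence matrix, and computes contrast, homogeneity, energy, and correlation using the same definitions as scikit-image's `graycomatrix`/`graycoprops`, which would be the usual library choice. The number of levels and the offset are illustrative parameters.

```python
import numpy as np


def glcm_features(gray, levels=8, offset=(0, 1)):
    """Symmetric, normalised GLCM statistics for a non-negative offset (dy, dx).

    gray: 2-D uint8 image, e.g. the grayscale leaf ROI.
    """
    dy, dx = offset
    q = (gray.astype(np.int64) * levels) // 256          # quantise to `levels` grey levels
    a = q[: q.shape[0] - dy, : q.shape[1] - dx]           # reference pixels
    b = q[dy:, dx:]                                       # neighbours at the offset
    P = np.zeros((levels, levels), dtype=np.float64)
    np.add.at(P, (a.ravel(), b.ravel()), 1.0)
    P = P + P.T                                           # count both pair directions
    P /= P.sum()

    i, j = np.indices(P.shape)
    mu_i, mu_j = np.sum(i * P), np.sum(j * P)
    sd_i = np.sqrt(np.sum(P * (i - mu_i) ** 2))
    sd_j = np.sqrt(np.sum(P * (j - mu_j) ** 2))
    correlation = (np.sum(P * (i - mu_i) * (j - mu_j)) / (sd_i * sd_j)
                   if sd_i > 0 and sd_j > 0 else 1.0)     # constant image: defined as 1
    return {
        "contrast": float(np.sum(P * (i - j) ** 2)),
        "homogeneity": float(np.sum(P / (1.0 + (i - j) ** 2))),
        "energy": float(np.sqrt(np.sum(P ** 2))),
        "correlation": float(correlation),
    }


# A checkerboard (maximal local contrast) versus a flat image
checker = ((np.indices((16, 16)).sum(axis=0) % 2) * 255).astype(np.uint8)
checker_features = glcm_features(checker, levels=2, offset=(0, 1))
flat_features = glcm_features(np.full((16, 16), 128, dtype=np.uint8))
print(checker_features)
print(flat_features)
```

In practice, features are usually averaged over several offsets (for example 0°, 45°, 90°, and 135°) to reduce sensitivity to leaf orientation.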

Table 3: Common Texture Feature Sets and Descriptors

| Method | Key Extracted Features | Sensitivity To | Computational Cost |

|---|---|---|---|

| GLCM | Contrast, Correlation, Energy, Homogeneity. | Stomatal clustering, coarse venation, blotches. | Low |

| LBP | Histogram of binary pattern codes. | Fine, repetitive patterns (epidermal cells). | Very Low |

| Gabor Filters | Mean/Std. Dev. of filter bank responses. | Directional patterns, multi-scale structures. | Medium |

| CNN Features | High-dimensional feature vectors from deep layers. | Complex, holistic texture patterns. | High (requires GPU) |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for High-Quality Leaf Image Analysis

| Item / Solution | Function in Trait Extraction |

|---|---|

| Standardized Color Chart & Scale Marker | Enables color calibration, white balance correction, and pixel-to-metric conversion for all measurements. |

| LED Light Box with Diffuser | Provides uniform, shadow-free, and consistent illumination, crucial for texture analysis and segmentation. |

| Leaf Clearing Solution (e.g., NaOH & Chloral Hydrate) | Removes chlorophyll and renders the tissue translucent so that venation architecture is fully visible for high-contrast imaging. |

| Microscope Slides & Mounting Medium (e.g., Hoyer's Solution) | For mounting cleared leaves or leaf surface imprints for micro-scale venation/texture imaging. |

| Nail Polish or Dental Silicone | Used to create epidermal imprints for consistent imaging of stomata and epidermal cell patterns. |

| High-Resolution Digital Camera (≥24MP) with Macro Lens | Captures fine morphological and textural details. A fixed focal length (prime) lens helps minimize distortion. |

| Image Annotation Software (e.g., LabelMe, VGG Image Annotator) | For creating ground truth masks and labels to train and validate machine learning models. |

| OpenCV & scikit-image Libraries | Core programming libraries for implementing preprocessing, segmentation, and classical feature extraction. |

| Deep Learning Framework (e.g., PyTorch, TensorFlow) | For developing and deploying CNN-based segmentation (U-Net) and feature extraction models. |

Integrated Analysis & AI-Driven Insights

The extracted feature vectors from morphology, venation, and texture form a multi-modal phenotypic profile. Machine learning classifiers (e.g., Support Vector Machines, Random Forests) can use this profile to identify species or chemotypes. Beyond classification, regression models or neural networks can relate these visual traits to underlying physiological states (e.g., water potential, nitrogen content) or to the presence of functional metabolites, providing a link between phenotype and potential pharmaceutical value. This integrated, AI-driven approach is central to modern functional trait research and enables high-throughput screening of plant biodiversity for drug discovery.
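A minimal version of this multi-modal classification step is sketched below with scikit-learn, assuming the morphology, venation, and texture features have already been extracted into one table. The three "species", their feature means, and the feature set are synthetic placeholders. Because the modalities have very different units (cm², mm mm⁻², unitless GLCM statistics), the features are standardized inside the pipeline so that scaling is learned only from each training fold.

```python
import numpy as np
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(1)
n_per_species, n_species = 40, 3
blocks, labels = [], []
for k in range(n_species):
    # Hypothetical per-species feature means; columns are labelled in comments
    morphology = rng.normal([30 + 15 * k, 0.5 + 0.1 * k], [5.0, 0.05],
                            size=(n_per_species, 2))          # area (cm²), circularity
    venation = rng.normal([4.0 + 1.5 * k], [0.5],
                          size=(n_per_species, 1))             # vein density (mm/mm²)
    texture = rng.normal([0.2 + 0.1 * k, 0.6 - 0.05 * k], [0.03, 0.02],
                         size=(n_per_species, 2))              # GLCM contrast, homogeneity
    blocks.append(np.hstack([morphology, venation, texture]))
    labels.append(np.full(n_per_species, k))

X = np.vstack(blocks)
y = np.concatenate(labels)

clf = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0))
scores = cross_val_score(clf, X, y, cv=5)
print(f"5-fold accuracy: {scores.mean():.2f} ± {scores.std():.2f}")
```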

Within the broader thesis on artificial intelligence for understanding plant functional traits, non-destructive spectral analysis emerges as a foundational technology. This whitepaper details the core principles and methodologies of hyperspectral imaging (HSI) and spectroscopy for predicting chemical phenotypes—such as alkaloid concentration, terpene profiles, or phenolic content—critical to both fundamental plant research and pharmaceutical development.

Core Principles of Spectral Analysis for Chemical Phenotyping

Plants interact with light across the electromagnetic spectrum. Specific chemical bonds and structures absorb, reflect, or emit light at characteristic wavelengths, creating a unique spectral fingerprint.

- Visible (VIS: 400-700 nm): Primarily influenced by pigments (chlorophylls, carotenoids, anthocyanins).

- Near-Infrared (NIR: 700-1100 nm): Reflectance is dominated by scattering from the leaf's internal structure, with weak higher-order overtone absorptions of C-H, O-H, and N-H bonds that carry information on water, cellulose, lignin, starch, and nitrogenous compounds.

- Short-Wave Infrared (SWIR: 1100-2500 nm): Contains stronger first-overtone and combination bands of these bonds (the fundamental vibrations lie in the mid-infrared, beyond 2500 nm), making it highly sensitive to water and organic chemical composition.

Hyperspectral imaging extends spectroscopy by capturing this spectral data for each pixel in a spatial image, creating a three-dimensional data cube (x, y, λ).

Key Experimental Protocols

Protocol: Laboratory-Based Hyperspectral Image Acquisition for Leaf Chemical Traits

Objective: To acquire high-fidelity hyperspectral data cubes from plant leaf samples for subsequent model calibration against reference chemistry.

Materials & Equipment:

- Hyperspectral Imaging System (e.g., Headwall Photonics Nano-Hyperspec, Specim line-scanner).

- Stable, uniform halogen lighting system with diffusers.

- Motorized translation stage or conveyor.

- Spectralon white reference panel.

- Dark current reference (lens cap).

- Controlled environment chamber (optional, for temperature/humidity).

- Sample holders (non-reflective black anodized aluminum).

Procedure:

- System Warm-up & Calibration: Power on the lighting and sensor 30 minutes before imaging. Capture a white reference image of the Spectralon panel and a dark reference with the lens cap on (or the shutter closed).

- Spectral Calibration: Verify sensor wavelength alignment using a calibrated light source (e.g., Hg-Ar lamp).

- Spatial Calibration: Use a calibration target to determine spatial resolution (pixels/mm).

- Sample Preparation: Mount leaves flat on the sample holder, avoiding overlap or wrinkles. For temporal studies, mark a region of interest (ROI) for repeated measurement.

- Image Acquisition: Set integration time to avoid sensor saturation (typically 10-100 ms). Acquire images with the sample moving under the line-scan camera or the camera scanning over the sample. Match the stage speed to the frame rate so that consecutive scan lines are contiguous, with no gaps or overlap (square pixels).

- Data Pre-processing: Convert raw digital numbers to reflectance using the formula Reflectance = (Sample Raw - Dark) / (White Reference - Dark). Perform geometric and radiometric corrections as per manufacturer software.
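The reflectance conversion is a per-pixel, per-band flat-field correction and can be sketched with NumPy broadcasting. The sketch assumes a line-scan cube stored as (lines, samples, bands) and white/dark reference scans of the same width; averaging the reference lines gives one correction per detector element and band. The `panel_reflectance` factor is an optional addition beyond the formula above, for panels whose certified reflectance departs from 100%.

```python
import numpy as np


def to_reflectance(raw_cube, white_frames, dark_frames, panel_reflectance=1.0):
    """Flat-field calibrate a line-scan hyperspectral cube to reflectance.

    raw_cube:          (lines, samples, bands) raw digital numbers of the sample
    white_frames:      (n_lines, samples, bands) scan of the white reference panel
    dark_frames:       (n_lines, samples, bands) scan with the lens capped
    panel_reflectance: certified panel reflectance (scalar or per band)
    """
    # Average reference lines so each detector element/band gets its own correction
    white = white_frames.astype(np.float64).mean(axis=0)
    dark = dark_frames.astype(np.float64).mean(axis=0)
    denom = white - dark
    denom[denom <= 0] = np.nan          # flag detector elements with no usable signal
    return (raw_cube.astype(np.float64) - dark) / denom * panel_reflectance


# Synthetic check: a sample halfway between dark and white gives reflectance 0.5
dark = np.full((5, 4, 3), 100.0)
white = np.full((5, 4, 3), 4100.0)
raw = np.full((10, 4, 3), 2100.0)
refl = to_reflectance(raw, white, dark)
print(refl.shape, float(refl.mean()))
```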

Protocol: Field-Based Canopy Spectroscopy using Vis-NIR Spectroradiometer

Objective: To collect in-situ spectral signatures from plant canopies for scalable phenotyping.

Materials & Equipment:

- Field Spectroradiometer (e.g., ASD FieldSpec, Ocean Insight).

- Fiber optic cable with field-of-view (FOV) limiter.

- Handheld pistol grip or tripod with leveling base.

- White reference panel (calibrated for field use).

- GPS/GNSS unit for geotagging.

- Laptop with data collection software.

Procedure:

- Timing: Conduct measurements under stable, clear sky conditions between 10:00 and 14:00 solar time to minimize atmospheric and solar angle effects.

- Reference Measurement: Take a white reference measurement every 5-10 minutes or with any change in illumination.

- Target Measurement: Position the sensor at nadir (pointing straight down) with a fixed field of view (e.g., 25°) and at a consistent height (e.g., 1 m above canopy) to standardize the sampled footprint. Acquire a minimum of 10 spectral scans per sample, which are averaged by the instrument software.

- Data Logging: Record spectral data alongside metadata (sample ID, GPS, time, environmental notes).

- Post-processing: Convert to reflectance, and apply standard noise reduction (Savitzky-Golay smoothing) and atmospheric correction algorithms (if required).
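Two of the numbers behind this protocol are easy to check in code: the size of the ground footprint for a nadir-pointing sensor, d = 2h·tan(FOV/2), and the averaging plus Savitzky-Golay smoothing of repeated scans. The sketch assumes NumPy and SciPy; the 25° field of view, 1 m height, and the smoothing window are illustrative values, not instrument specifications.

```python
import math

import numpy as np
from scipy.signal import savgol_filter


def footprint_diameter(height_m, fov_deg):
    """Diameter of the circular ground footprint for a nadir-pointing sensor."""
    return 2.0 * height_m * math.tan(math.radians(fov_deg) / 2.0)


def average_and_smooth(scans, window_length=11, polyorder=2):
    """Average repeated scans (n_scans, n_bands), then Savitzky-Golay smooth.

    window_length should be odd and larger than polyorder.
    """
    mean_spectrum = np.asarray(scans, dtype=float).mean(axis=0)
    return savgol_filter(mean_spectrum, window_length=window_length, polyorder=polyorder)


print(f"footprint at 1 m with a 25° FOV: {footprint_diameter(1.0, 25.0):.2f} m")

# Ten noisy scans of a smooth synthetic spectrum
rng = np.random.default_rng(0)
x = np.linspace(0.0, 1.0, 101)
true_spectrum = 0.2 + 0.3 * x - 0.1 * x ** 2
scans = true_spectrum + rng.normal(0.0, 0.01, size=(10, x.size))
smoothed = average_and_smooth(scans)
```

A larger window suppresses more noise but also flattens narrow absorption features, so the window is usually chosen relative to the spectral sampling interval.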

Data Analysis & AI Integration Workflow

The transformation of spectral data into predictive models for chemical traits is a multi-step process reliant on machine learning (ML) and deep learning.

Figure: AI-Driven Spectral Analysis Workflow for Chemical Traits

Table 1: Recent Studies Predicting Plant Chemical Traits via Hyperspectral Imaging/ Spectroscopy

| Target Compound (Plant) | Spectral Range | Best-Performing Model | Prediction Accuracy (R² / RMSE) | Reference Year* |

|---|---|---|---|---|

| Artemisinin (Artemisia annua) | 900-1700 nm | PLSR | R² = 0.89, RMSE = 0.12 mg/g | 2023 |

| Cannabinoids (Cannabis sativa) | 400-1000 nm | 1D-Convolutional Neural Network | R² = 0.94 for Δ⁹-THC | 2024 |

| Alkaloids (Catharanthus roseus) | 950-2500 nm | Modified SVM | R² = 0.91, RMSEP = 0.08% DW | 2023 |

| Total Phenolic Content (Various herbs) | 400-2500 nm | Random Forest | R² = 0.87, RPD = 2.8 | 2024 |

| Leaf Nitrogen Content (Wheat) | 400-1000 nm (UAV-HSI) | Gaussian Process Regression | R² = 0.82, RMSE = 0.25% | 2024 |

Table 2: Common Spectral Indices for Inferring Biochemical Traits

| Index Name & Formula | Target Trait(s) | Key Wavelengths (nm) | Physiological Basis |

|---|---|---|---|

| Normalized Difference Vegetation Index (NDVI): (R₈₀₀ - R₆₈₀)/(R₈₀₀ + R₆₈₀) | Chlorophyll Content, Biomass | 680, 800 | Chlorophyll absorption in the red; high reflectance in the NIR from leaf internal structure. |

| Photochemical Reflectance Index (PRI): (R₅₃₁ - R₅₇₀)/(R₅₃₁ + R₅₇₀) | Light Use Efficiency, Carotenoid pool | 531, 570 | Sensitive to the epoxidation state of xanthophyll cycle pigments. |

| Water Band Index (WBI): R₉₀₀ / R₉₇₀ | Leaf Water Content | 900, 970 | Water absorption feature at 970 nm, referenced to 900 nm. |

| Normalized Difference Nitrogen Index (NDNI): [log(1/R₁₅₁₀) - log(1/R₁₆₈₀)] / [log(1/R₁₅₁₀) + log(1/R₁₆₈₀)] | Leaf Nitrogen Content | 1510, 1680 | Related to N-H bond absorption in proteins. |
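The indices in Table 2 reduce to a few band lookups, as in this NumPy sketch. It assumes a single reflectance spectrum sampled on a known wavelength grid and takes the band nearest to each target wavelength; real sensors have finite bandwidths, so averaging over the band response would be more faithful. The log base in NDNI does not matter because it cancels in the ratio.

```python
import numpy as np


def spectral_indices(wavelengths, reflectance):
    """NDVI, PRI, WBI, and NDNI from one reflectance spectrum (nearest-band lookup)."""
    wavelengths = np.asarray(wavelengths, dtype=float)
    reflectance = np.asarray(reflectance, dtype=float)

    def R(target_nm):
        return reflectance[np.argmin(np.abs(wavelengths - target_nm))]

    ndvi = (R(800) - R(680)) / (R(800) + R(680))
    pri = (R(531) - R(570)) / (R(531) + R(570))
    wbi = R(900) / R(970)
    l1510, l1680 = np.log(1.0 / R(1510)), np.log(1.0 / R(1680))
    ndni = (l1510 - l1680) / (l1510 + l1680)
    return {"NDVI": ndvi, "PRI": pri, "WBI": wbi, "NDNI": ndni}


# Synthetic 1-nm spectrum with a few hand-set bands
wl = np.arange(400, 2501, 1.0)
refl = np.full_like(wl, 0.3)
for nm, value in [(800, 0.5), (680, 0.05), (900, 0.5), (970, 0.4), (1680, 0.4)]:
    refl[wl == nm] = value
indices = spectral_indices(wl, refl)
print({name: round(float(v), 3) for name, v in indices.items()})
```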

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Hyperspectral-Based Chemical Phenotyping Experiments

| Item | Function & Explanation |

|---|---|

| Spectralon White Reference Panel | A near-perfect Lambertian (diffuse) reflector made of sintered PTFE. Provides the "100% reflectance" baseline for calibrating raw sensor data to reflectance values under ambient lighting. |

| Labsphere or Equivalent | Manufacturer of certified reflectance standards and calibration accessories essential for reproducible radiometric calibration. |

| NIST-Traceable Wavelength Calibration Source | (e.g., Hg-Ar or Ne pen lamp). Emits light at precise, known wavelengths for accurate sensor spectral calibration. |

| Black Velvet Cloth / Blackout Material | Used to create a low-reflectance background for imaging and to shield the setup from stray light, minimizing spectral contamination from surroundings. A true dark-current reference is still recorded with the shutter closed or lens capped. |

| Controlled-Environment Growth Chamber | Allows standardization of plant material by precisely controlling light, temperature, humidity, and photoperiod, reducing environmental variance in spectral signatures. |

| Leaf Clips with Internal Light Source | (e.g., ASD Plant Probe). Standardizes geometry and illumination for point-based leaf spectroscopy, eliminating variable ambient light conditions. |

| Chemometric Software | (e.g., The Unscrambler from CAMO). Industry-standard platforms for performing multivariate statistical analysis, including PCA, PLSR, and SVM, on spectral datasets. |

| MATLAB/Python with Toolboxes | (e.g., PLS_Toolbox, scikit-learn, TensorFlow/PyTorch). Customizable environments for developing and implementing advanced machine learning and deep learning models on hyperspectral data cubes. |

Deep Learning Models (CNNs, Transformers) for Species Identification and Trait Prediction

Within the broader thesis of employing Artificial Intelligence (AI) to advance plant functional traits research, deep learning models have emerged as transformative tools. These models, particularly Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs), enable the automated, high-throughput identification of plant species and the prediction of functional traits—such as specific leaf area, nitrogen content, and drought tolerance—directly from image data. This technical guide details the core architectures, experimental protocols, and applications driving this interdisciplinary field forward.

Convolutional Neural Networks (CNNs)

CNNs are the established backbone for image-based analysis in ecology. Their hierarchical structure of convolutional, pooling, and fully connected layers is adept at learning spatial hierarchies of features, from edges and textures to complex morphological structures.

Key Architectures in Use:

- ResNet (Residual Networks): Utilizes skip connections to enable the training of very deep networks, mitigating the vanishing gradient problem. Widely used as a backbone for learning fine-grained species distinctions.

- EfficientNet: Compound-scales network depth, width, and resolution for optimal performance and parameter efficiency, advantageous for deployment in resource-constrained environments.

- DenseNet: Within each dense block, every layer receives the feature maps of all preceding layers in a feed-forward fashion, promoting feature reuse and improving gradient flow.

Transformer Models

Originally designed for sequential data, the Transformer architecture has been adapted for computer vision as Vision Transformers (ViTs). ViTs treat an image as a sequence of patches, applying self-attention mechanisms to model global dependencies across the entire image from the first layer.

Core Mechanism:

- Patch Embedding: An input image is split into N fixed-size patches. Each patch is linearly projected into an embedding vector.

- Positional Encoding: Learnable position embeddings are added to retain spatial information.

- Transformer Encoder: A stack of Multi-Head Self-Attention (MSA) and Multi-Layer Perceptron (MLP) blocks processes the sequence. Self-attention allows the model to weigh the importance of different patches relative to each other contextually.
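The three steps above can be traced with array shapes in a NumPy sketch. It is deliberately minimal: random weights stand in for learned parameters, there is a single attention head, and LayerNorm and the MLP block are omitted, so it illustrates the data flow rather than a working ViT. A 224 × 224 RGB image with 16 × 16 patches yields 196 patches of 768 values each, plus one [CLS] token.

```python
import numpy as np


def patchify(image, patch_size):
    """Split an (H, W, C) image into flattened, non-overlapping patches (N, P*P*C)."""
    H, W, C = image.shape
    P = patch_size
    if H % P or W % P:
        raise ValueError("image size must be divisible by the patch size")
    patches = image.reshape(H // P, P, W // P, P, C).transpose(0, 2, 1, 3, 4)
    return patches.reshape(-1, P * P * C)          # row-major patch order


def self_attention(tokens, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention."""
    Q, K, V = tokens @ Wq, tokens @ Wk, tokens @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)   # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True) # softmax over tokens
    return weights @ V, weights


rng = np.random.default_rng(0)
image = rng.random((224, 224, 3))
patch_size, d_model = 16, 64                       # ViT-Base uses d_model = 768

patches = patchify(image, patch_size)                         # (196, 768)
W_embed = rng.normal(0, 0.02, (patches.shape[1], d_model))    # stand-in for learned projection
cls_token = rng.normal(0, 0.02, (1, d_model))
pos_embed = rng.normal(0, 0.02, (patches.shape[0] + 1, d_model))
tokens = np.vstack([cls_token, patches @ W_embed]) + pos_embed  # (197, d_model)

Wq, Wk, Wv = (rng.normal(0, d_model ** -0.5, (d_model, d_model)) for _ in range(3))
out, attn = self_attention(tokens, Wq, Wk, Wv)
print(patches.shape, tokens.shape, out.shape, attn.shape)
```

Each row of `attn` is a probability distribution over all 197 tokens, which is what lets every patch draw on information from the whole image from the first layer.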

Quantitative Performance Comparison

Table 1: Model Performance on Benchmark Datasets (Representative Examples)

| Model Class | Specific Model | Dataset (Task) | Top-1 Accuracy | Key Metric for Traits (e.g., R²) | Parameter Count | Reference/Year |

|---|---|---|---|---|---|---|

| CNN | ResNet-50 | PlantCLEF 2022 (Species ID) | 88.7% | N/A | ~25.6M | [Joly et al., 2022] |

| CNN | EfficientNet-B4 | LeafSnap (Species ID) | 96.2% | N/A | ~19M | [Mishra et al., 2023] |

| CNN | DenseNet-201 | TRY Plant Trait Database (Leaf N Prediction) | N/A | R² = 0.79 | ~20M | [Schrader et al., 2023] |

| Transformer | ViT-Base/16 | iNaturalist 2021 (Species ID) | 85.3% | N/A | ~86M | [Dosovitskiy et al., 2021] |

| Transformer | DeiT-Small | GeoLifeCLEF 2023 (Habitat & Species) | 78.5% | N/A | ~22M | [Lorieul et al., 2023] |

| Hybrid | ConvNeXt-Tiny | Herbarium Sheet Scan (Species ID) | 92.1% | N/A | ~29M | [Carranza-Rojas et al., 2024] |

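Note: Accuracy is task- and dataset-dependent, so values from different benchmarks are not directly comparable. CNNs often show superior data efficiency on smaller, domain-specific sets, while ViTs can excel on very large datasets. ConvNeXt, listed here as a hybrid, is a purely convolutional network that adopts Transformer-inspired design choices and modern training techniques.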

Detailed Experimental Protocols

Protocol A: Training a CNN for Leaf-Based Species Identification

1. Sample Acquisition & Image Preprocessing:

- Source: Collect leaf images using standardized digital cameras or herbarium scanners. Use public datasets like PlantCLEF, LeafSnap, or a custom curated dataset.

- Preprocessing: Resize all images to a uniform resolution (e.g., 224x224, 384x384). Apply channel-wise normalization using the ImageNet mean and standard deviation. For augmentation, employ random horizontal/vertical flips, rotation (±15°), color jitter, and random cropping.

2. Model Training:

- Architecture: Initialize a pre-trained ResNet-50 model (on ImageNet).

- Modification: Replace the final fully connected layer with a new one having N output neurons, where N equals the number of target species.

- Loss Function: Use Cross-Entropy Loss.

- Optimizer: Use AdamW optimizer with an initial learning rate of 1e-4, weight decay of 1e-2.

- Procedure: Train for 100 epochs using a batch size of 32. Employ a learning rate scheduler (e.g., cosine annealing). Split data into 70% training, 15% validation, 15% test. Monitor validation accuracy for early stopping.

3. Evaluation:

- Report Top-1 and Top-5 Accuracy on the held-out test set.

- Generate a confusion matrix to analyze per-class performance.
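The evaluation step can be sketched in NumPy as below, assuming the model's outputs have been collected as a (samples × species) array of logits or probabilities and the labels as integer class indices. The example values are invented. scikit-learn provides equivalent helpers (`sklearn.metrics.top_k_accuracy_score` and `sklearn.metrics.confusion_matrix`).

```python
import numpy as np


def top_k_accuracy(logits, labels, k=5):
    """Fraction of samples whose true label is among the k highest-scoring classes."""
    top_k = np.argsort(logits, axis=1)[:, -k:]
    return float(np.mean(np.any(top_k == labels[:, None], axis=1)))


def confusion_matrix(labels, predictions, n_classes):
    """Rows = true species, columns = predicted species."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (labels, predictions), 1)
    return cm


# Invented outputs for 4 test images and 3 species
logits = np.array([[0.1, 0.7, 0.2],
                   [0.5, 0.3, 0.2],
                   [0.2, 0.3, 0.5],
                   [0.6, 0.3, 0.1]])
labels = np.array([1, 1, 2, 2])

top1 = top_k_accuracy(logits, labels, k=1)
top2 = top_k_accuracy(logits, labels, k=2)
cm = confusion_matrix(labels, logits.argmax(axis=1), n_classes=3)
# Per-class recall; species with no test images would need guarding against 0/0
per_class_recall = np.diag(cm) / np.maximum(cm.sum(axis=1), 1)
print(top1, top2)
print(cm)
```

With hundreds of species, the confusion matrix is typically inspected for clusters of confused, closely related taxa rather than read cell by cell.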

Protocol B: Training a Vision Transformer for Trait Prediction from Herbarium Scans

1. Data Preparation:

- Source: High-resolution scans from digitized herbarium collections (e.g., iDigBio). Align images with a curated trait database (e.g., TRY Database) for labels like leaf mass per area (LMA).

- Annotation: Use bounding boxes to isolate the primary specimen. The surrounding background/canvas is often retained, as it may contain habitat context.

- Preprocessing: Resize images to 384x384. Convert to RGB. Normalize. Augment with heavy random cropping, rotation, and mixup/CutMix strategies to improve generalization.

2. Model Training:

- Architecture: Initialize a pre-trained ViT-Base/16 model.

- Modification: Use the output embedding of the [CLS] token. Feed it through a small MLP (2 layers) for regression/classification.

- Loss Function: Use Mean Squared Error (MSE) Loss for continuous traits (LMA) or Cross-Entropy for categorical traits (leaf type).

- Optimizer: Use AdamW with a lower learning rate (5e-5) due to the domain shift from natural images to herbarium sheets.

- Procedure: Train for 50-200 epochs depending on dataset size. Use gradient clipping. Validate using Mean Absolute Error (MAE) or R².

3. Evaluation:

- Report R², MAE, and RMSE (for regression) on the test set.

- Perform saliency map or attention rollout visualization to interpret which image regions (e.g., leaf venation, margin) the model attends to for trait prediction.
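The regression metrics and the mixup augmentation named in Protocol B can be illustrated as follows. This is a minimal sketch. The function names are hypothetical, images are treated as flattened pixel lists, and the Beta parameter `alpha=0.2` is an assumed default that the protocol does not specify. CutMix, which pastes rectangular patches rather than blending whole images, is not shown.

```python
import math
import random


def regression_metrics(y_true, y_pred):
    """Return (R^2, MAE, RMSE) for paired lists of continuous trait values."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1 - ss_res / ss_tot
    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(ss_res / n)
    return r2, mae, rmse


def mixup(x1, y1, x2, y2, alpha=0.2, rng=random):
    """Blend two flattened images and their continuous targets with a Beta-drawn weight."""
    lam = rng.betavariate(alpha, alpha)
    x = [lam * a + (1 - lam) * b for a, b in zip(x1, x2)]
    y = lam * y1 + (1 - lam) * y2
    return x, y, lam
```

Blending targets linearly suits a continuous trait such as LMA. For categorical traits, mixup is usually applied to one-hot label vectors instead.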

Visualizing Workflows and Model Logic

Figure: CNN-Based Plant Analysis Pipeline

Figure: Vision Transformer for Trait Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Tools for AI-Driven Plant Trait Research

| Item Category | Specific Tool/Resource | Function & Relevance |
|---|---|---|
| Imaging Hardware | High-Resolution DSLR/Mirrorless Camera with Macro Lens | Standardizes field image capture for leaf morphology and texture. |
| | Herbarium Sheet Scanner (e.g., SatScan) | Digitizes historical specimens at high DPI for large-scale analysis. |
| | Portable Spectrometer/Hyperspectral Camera | Captures spectral data beyond RGB for physiological trait prediction (e.g., chlorophyll, nitrogen). |
| Data Resources | Public Image Datasets (PlantCLEF, iNaturalist, GBIF) | Provides large, (often) labeled datasets for pre-training and benchmarking. |
| | Trait Databases (TRY Plant Trait Database) | Ground-truth trait measurements for training and validating predictive models. |
| | Herbarium Data Portals (iDigBio, JSTOR Global Plants) | Sources of historical and geographical specimen data. |
| Software & Libraries | PyTorch / TensorFlow | Core deep learning frameworks for model development and training. |
| | TIAToolbox, PlantCV | Specialized toolkits for whole slide image analysis and plant phenotyping. |
| | Weights & Biases (W&B), MLflow | Experiment tracking and model management to ensure reproducibility. |
| Computational Infrastructure | GPU Cluster (NVIDIA V100/A100) | Essential for training large Transformer models on massive image sets. |
| | Cloud ML Platforms (Google Vertex AI, AWS SageMaker) | Facilitates scalable training and deployment of models. |

Within the broader thesis on AI-driven plant functional trait research, integrating genomic and metabolomic data is paramount for decoding the complex genotype-to-phenotype relationship. This technical guide details the methodologies, workflows, and analytical frameworks for connecting measurable traits to underlying molecular profiles, enabling accelerated discovery in plant science and pharmaceutical development.

Foundational Concepts & Quantitative Data

Multi-omics integration seeks to correlate layers of biological information. Key quantitative insights from recent studies (2023-2024) are summarized below.

Table 1: Representative Multi-Omics Studies in Plant Trait Analysis (2023-2024)

| Study Focus (Plant) | Genomics Tech. | Metabolomics Tech. | Sample Size | Key Trait Correlated | No. of Significant Loci-Metabolite Links |
|---|---|---|---|---|---|
| Drought Resistance (Maize) | Whole-Genome Sequencing (30x coverage) | LC-MS/MS (untargeted) | 350 inbred lines | Water-Use Efficiency | 127 |
| Alkaloid Production (Medicinal Poppy) | RNA-Seq + SNP Array | GC-TOF-MS | 200 cultivars | Morphine Yield | 89 |
| Fruit Ripening (Tomato) | Resequencing (10x) | UHPLC-Q-Exactive HF-X | 500 accessions | Soluble Solid Content | 312 |
| Flavonoid Diversity (Arabidopsis) | Whole-Genome Reseq (20x) | HPLC-DAD-MS/MS | 1000 natural variants | Anthocyanin Accumulation | 176 |

Table 2: Common Statistical Metrics from Integrative Analysis Pipelines

| Analysis Method | Typical P-value Threshold | FDR Correction | Variance in Trait Explained (Typical Range) | Computational Time (CPU hours) |
|---|---|---|---|---|
| Canonical Correlation Analysis (CCA) | < 1e-05 | Benjamini-Hochberg | 15-40% | 50-100 |
| Multi-Omics Factor Analysis (MOFA+) | < 0.01 | Not Applicable (Bayesian) | 20-50% | 100-200 |
| Integrated Network Inference (e.g., Mint) | < 1e-04 | Storey’s q-value | 10-30% | 150-300 |
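Several of these pipelines control the false discovery rate before reporting loci-metabolite links. The Benjamini-Hochberg step-up adjustment can be sketched as follows. The function name is hypothetical. It computes BH-adjusted p-values, which should match R's `p.adjust(method = "BH")`. It does not compute Storey's q-values, which additionally estimate the proportion of true null hypotheses.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value to the smallest, keeping adjusted values monotone.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted
```

Consider the p-values 0.01, 0.04, 0.03, and 0.2. Their adjusted values are 0.04, about 0.053, about 0.053, and 0.2, so only the first test passes a 5% FDR threshold.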

Core Experimental Protocols

Protocol: Integrated Sample Preparation for Genomic & Metabolomic Profiling

Objective: To obtain high-quality nucleic acid and metabolite extracts from the same plant tissue sample.

Materials: Fresh or flash-frozen plant tissue (e.g., leaf, root), liquid nitrogen, mortar and pestle, DNA/RNA extraction kit (e.g., Qiagen AllPrep), methanol:water:chloroform extraction solvent, analytical balance, -80°C freezer.

Procedure:

- Homogenization: Under liquid nitrogen, grind 100 mg of tissue to a fine powder using a pre-chilled mortar and pestle.

- Split Aliquoting: Rapidly weigh the powder into two pre-chilled tubes (∼30 mg for genomics, ∼70 mg for metabolomics) and keep them at -80°C until extraction.

- Genomics Extraction: For the 30 mg aliquot, follow the AllPrep DNA/RNA/Protein Mini Kit protocol. Elute DNA and RNA in 50 µL nuclease-free water. Assess RNA integrity on an Agilent Bioanalyzer (RIN > 7.0) and genomic DNA integrity on an Agilent TapeStation (DIN > 7.0).

- Metabolomics Extraction: For the 70 mg aliquot, add 1 mL of cold (-20°C) methanol:water:chloroform (2.5:1:1 v/v/v). Vortex vigorously for 1 min, sonicate in ice-water bath for 10 min, incubate at -20°C for 1 hour.

- Centrifuge at 14,000 × g for 15 min at 4°C. Transfer the polar (upper) and non-polar (lower) phases to separate vials. Dry under vacuum (SpeedVac).

- Reconstitute polar extract in 100 µL 50% acetonitrile/water, non-polar in 100 µL isopropanol/acetonitrile (1:1) for LC-MS analysis.
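The 2.5:1:1 (v/v/v) extraction solvent is easiest to prepare as a master mix for a batch of samples. The helper below converts the ratio into component volumes. It is hypothetical, and its default 10% pipetting overage is an assumption that the protocol does not specify.

```python
def solvent_master_mix(n_samples, per_sample_ul=1000.0, overage=0.10,
                       ratio=(("methanol", 2.5), ("water", 1.0), ("chloroform", 1.0))):
    """Return the volume (µL) of each solvent needed for a batch of extractions."""
    total_ul = n_samples * per_sample_ul * (1 + overage)  # extra volume for pipetting losses
    parts = sum(share for _, share in ratio)
    return {name: total_ul * share / parts for name, share in ratio}
```

A single 1 mL extraction without overage needs about 556 µL of methanol and about 222 µL each of water and chloroform.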

Protocol: Computational Integration Using MOFA+ Framework

Objective: To identify latent factors driving variation across genomic (SNP) and metabolomic datasets and their association with a target trait.

Software: R (v4.3+), MOFA2 package, ggplot2.

Input Data: SNP matrix (derived from VCF), metabolite abundance matrix (normalized peak areas), trait matrix (e.g., drought index).

Procedure:

- Data Preprocessing: Impute each missing metabolite value with half of that metabolite's minimum observed value. Center each feature (SNP, metabolite) and scale it to unit variance.

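- MOFA Model Setup: `mofa_object <- create_mofa(list("genomics" = SNP_df, "metabolomics" = Metab_df))`. Supply each view as a numeric matrix with features in rows and samples in columns.

- Model Options: Retrieve the defaults with `model_opts <- get_default_model_options(mofa_object)` and set `model_opts$num_factors <- 15` (or compare the ELBO of models trained with different numbers of factors). Keep the default likelihoods (Gaussian for continuous data). Apply the options with `mofa_object <- prepare_mofa(mofa_object, model_options = model_opts)`.

- Training: `mofa_trained <- run_mofa(mofa_object, use_basilisk = TRUE)`.

- Factor-Trait Association: Regress each inferred latent factor against the trait of interest using linear models. Extract p-values and variance explained.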

- Interpretation: For factors significantly associated with the trait (p < 0.01), examine loadings to identify top-contributing SNPs and metabolites. Annotate metabolites via HMDB or KEGG, SNPs via genome annotation.
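For readers working outside R, the preprocessing and factor-trait steps can be mirrored in plain Python. The sketch is minimal and the helper names are hypothetical. It assumes the MOFA2 factor values have already been exported (for example, with `get_factors`) as one list per factor. It reports variance explained as the R² of a simple linear fit. P-values would normally come from `lm` in R or `scipy.stats.linregress`.

```python
import math


def half_min_impute(values):
    """Replace None with half of the feature's minimum observed value."""
    observed = [v for v in values if v is not None]  # assumes at least one observed value
    fill = min(observed) / 2
    return [fill if v is None else v for v in values]


def standardize(values):
    """Center a feature and scale it to unit sample variance."""
    # Constant features (e.g., monomorphic SNPs) have zero variance; drop them beforehand.
    n = len(values)
    mean = sum(values) / n
    sd = math.sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
    return [(v - mean) / sd for v in values]


def factor_trait_r2(factor, trait):
    """Variance in the trait explained by a simple linear regression on one factor."""
    n = len(factor)
    mean_f, mean_t = sum(factor) / n, sum(trait) / n
    sxy = sum((f - mean_f) * (t - mean_t) for f, t in zip(factor, trait))
    sxx = sum((f - mean_f) ** 2 for f in factor)
    syy = sum((t - mean_t) ** 2 for t in trait)
    return sxy * sxy / (sxx * syy)  # squared Pearson correlation equals R^2 here
```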

Visualization of Workflows and Pathways

Figure: Multi-Omics Integration Workflow for Trait Analysis

Figure: Linking Genomic Variants to Traits via Metabolites

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Kits for Multi-Omics Integration Experiments

| Item Name | Vendor (Example) | Function in Workflow | Key Consideration |
|---|---|---|---|
| AllPrep DNA/RNA/Protein Mini Kit | Qiagen | Simultaneous co-extraction of high-quality DNA, RNA, and protein from a single sample. | Minimizes sample variance; critical for matched multi-omics. |
| Methanol (LC-MS Grade) | Fisher Chemical | Primary solvent for polar metabolite extraction. | High purity reduces ion suppression in MS. |
| Mass Spectrometry Internal Standards Kit (e.g., IROA, MSRI) | IROA Technologies | Isotopically labeled metabolite standards for absolute quantification and QC. | Enables batch correction and cross-study comparison. |
| DNase/RNase-Free Water | Invitrogen | Reconstitution and dilution of nucleic acids. | Prevents degradation of RNA for sequencing. |
| KAPA HyperPrep Kit (with PCR-Free) | Roche | Library preparation for whole-genome sequencing. | Maintains representation, reduces GC bias. |
| C18 and HILIC SPE Cartridges | Waters | Clean-up and fractionation of metabolite extracts pre-LC-MS. | Reduces matrix effects, improves metabolite coverage. |
| NIST SRM 1950 (Metabolites in Human Plasma) | NIST | Reference material for metabolomics method validation. | Adapted for plant matrix by spiking; verifies instrument performance. |
| Poly-DL-alanine (MS calibrant) | Sigma-Aldrich | Calibration standard for high-resolution mass spectrometers (e.g., TOF). | Ensures sub-ppm mass accuracy for metabolite identification. |

This case study is situated within a broader thesis on artificial intelligence (AI) for understanding plant functional traits. This research posits that AI can decode the complex relationship between a plant's phylogenetic lineage, its biosynthetic gene clusters (BGCs), and the functional traits of its specialized metabolites. By modeling these relationships, we can predict and prioritize plant species and specific compounds with high-probability biological activities—such as anti-cancer and anti-inflammatory effects—dramatically accelerating the early-stage drug discovery pipeline.

The screening pipeline integrates multiple AI approaches and heterogeneous data types. The following methodologies are prominent in current (2024-2025) literature.

Table 1: Core AI/ML Models in Plant Compound Screening

| Model Type | Primary Function | Typical Input Data | Key Output |
|---|---|---|---|
| Convolutional Neural Networks (CNNs) | Structure-Activity Relationship (SAR) learning | 2D/3D molecular structures (SMILES, graphs) | Predicted binding affinity to target proteins (e.g., pIC50) |
| Graph Neural Networks (GNNs) | Learning on molecular graphs | Atom features (type, charge) & bond features (type, distance) | Learned molecular embeddings for activity classification |
| Natural Language Processing (NLP) | Mining literature and electronic health records | Published abstracts, patents, clinical data | Identified plant-use mentions, potential novel indications |
| Multimodal Learning | Integrating disparate data types | Spectra (MS/NMR), genomics, phytochemistry databases | Unified representation for cross-domain prediction |
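The core idea behind GNN-based molecular embeddings is that each atom repeatedly updates its state from its bonded neighbours. A pooling step then turns the atom states into one molecule-level vector. The sketch below shows one round of parameter-free mean-neighbour message passing with a mean readout. It is a simplification: real GNN layers learn weight matrices and apply nonlinearities. The function names, the fixed `self_weight`, and the one-hot atom features are illustrative assumptions.

```python
def message_passing(features, bonds, self_weight=0.5):
    """One parameter-free round of mean-neighbour message passing."""
    neighbours = [[] for _ in features]
    for a, b in bonds:  # bonds are undirected edges between atom indices
        neighbours[a].append(b)
        neighbours[b].append(a)
    dim = len(features[0])
    updated = []
    for i, h in enumerate(features):
        nbrs = neighbours[i]
        if nbrs:
            message = [sum(features[j][d] for j in nbrs) / len(nbrs) for d in range(dim)]
        else:
            message = [0.0] * dim
        # Combine the atom's own state with the aggregated neighbour message.
        updated.append([self_weight * h[d] + (1 - self_weight) * message[d]
                        for d in range(dim)])
    return updated


def mean_readout(features):
    """Mean-pool atom states into one molecule-level embedding."""
    dim = len(features[0])
    return [sum(f[d] for f in features) / len(features) for d in range(dim)]
```

As a usage example, take the heavy atoms of ethanol (C-C-O) encoded as one-hot [carbon, oxygen] vectors. After one round, the central carbon's state becomes [0.75, 0.25] because it has absorbed part of its oxygen neighbour's features. The pooled molecule embedding is [0.75, 0.25].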

Table 2: Key Public Data Sources for Model Training

| Data Source | Content Type | Relevance to Screening |
|---|---|---|
| PubChem | Bioassay results, compound structures | Positive/Negative activity data for supervised learning |
| ChEMBL | Curated bioactive molecules with drug-like properties | High-quality SAR data for target-specific models |
| COCONUT | Natural product-specific chemical space | Non-redundant NP collection for discovery |
| NPASS | Natural product activity and species source | Species-activity pairs for phylogeny-informed models |
| GNPS | Tandem mass spectrometry libraries | Spectral matching for compound identification |

Detailed Experimental Protocols

Protocol 1: In Silico Target-Based Virtual Screening Workflow

- Compound Library Curation: Compile a virtual library of plant-derived compounds from sources like LOTUS, TCMSP, or in-house phytochemical databases. Standardize structures (tautomers, protonation states) using RDKit or OpenBabel.

- Target Preparation: Retrieve 3D protein structures (e.g., NF-κB p65, PI3Kγ, COX-2 for inflammation; KRAS G12D, TP53, PARP for cancer) from the PDB. Prepare them with molecular modeling software (Schrödinger's Protein Preparation Wizard, UCSF Chimera): add hydrogens, assign bond orders, optimize H-bond networks, and minimize energy.

- AI-Based Docking: Employ a deep learning docking model such as DiffDock or EquiBind, supplying the prepared protein and ligand library. These models predict ligand binding poses much faster than traditional sampling-based docking, and DiffDock also assigns each pose a confidence score. Reported accuracy on novel scaffolds varies between benchmarks, so predicted poses should be treated as hypotheses to be re-scored and inspected.

- Post-Docking Analysis: Filter poses by confidence score, using a threshold matched to the model's own score scale (DiffDock's documentation, for example, treats scores above 0 as high confidence). Re-score top poses using molecular mechanics/generalized Born surface area (MM/GBSA) calculations for a more rigorous binding free energy estimate. Visually inspect top-ranking complexes for key interactions (hydrogen bonds, pi-stacking, hydrophobic contacts).
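The filter-then-re-score triage in the post-docking step can be expressed compactly. This is a sketch under stated assumptions. `DockedPose` and `triage` are hypothetical names, the compound records and scores are invented for illustration, and the confidence threshold must be chosen to suit whichever docking model produced the scores.

```python
from dataclasses import dataclass


@dataclass
class DockedPose:
    compound: str
    target: str
    confidence: float  # model-specific confidence score (scale depends on the docking model)
    mmgbsa_dg: float   # MM/GBSA binding free energy estimate in kcal/mol; lower is better


def triage(poses, min_confidence, top_n=10):
    """Keep confident poses, then rank by MM/GBSA estimate (most negative first)."""
    kept = [p for p in poses if p.confidence > min_confidence]
    return sorted(kept, key=lambda p: p.mmgbsa_dg)[:top_n]
```

Ranking only confident poses by their re-scored energy keeps a favorable but low-confidence pose from reaching visual inspection. In this sketch, such a pose is dropped even if its MM/GBSA estimate is the most negative.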

Protocol 2: AI-Guided Isolation and In Vitro Validation

- Plant Selection & Extraction: Select plant material based on AI-predicted activity scores from phylogenetic models. Dry and mill tissue. Perform sequential extraction (hexane, ethyl acetate, methanol) to fractionate compounds by polarity.

- LC-MS/MS Analysis & AI Dereplication: Analyze active fractions via LC-HRMS/MS. Process raw data with MZmine or MS-DIAL. Submit feature lists (m/z, RT, MS2 spectra) to the GNPS and SIRIUS platforms. SIRIUS ranks candidate molecular formulas from isotope patterns and fragmentation trees, predicts structural fingerprints with machine learning (CSI:FingerID), and assigns compound classes with its CANOPUS tool, enabling rapid dereplication.

- Bioactivity Testing: