From Soil to Silicon: Implementing FAIR Data Principles for AI-Driven Plant Science and Biomedical Discovery



Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on implementing FAIR (Findable, Accessible, Interoperable, Reusable) data principles to power artificial intelligence in plant science. It covers the foundational rationale for FAIR data, practical methodologies for its application in AI workflows, solutions to common implementation challenges, and frameworks for validating and benchmarking FAIR-compliant datasets. The guide bridges the gap between plant data generation and its effective use in machine learning models, aiming to accelerate discoveries in both agricultural science and downstream biomedical applications, including drug discovery.

Why FAIR Data is the Root of AI Success in Modern Plant Science

FAIR Technical Support Center

Welcome to the FAIR Data Support Center. This section addresses common technical and procedural issues researchers face when implementing FAIR principles for plant phenomics, genomics, and AI-driven analysis.

Troubleshooting Guides & FAQs

Q1: My plant phenotyping images are stored on a local server. They are "Findable" within my lab, but external AI researchers cannot discover them. What is the core issue? A: The issue is a lack of rich, standardized metadata registered in a public or institutional repository. "Findable" requires globally unique and persistent identifiers (PIDs) and metadata indexed in a searchable resource.

- Protocol: To make image datasets findable:

- Generate a Persistent Identifier (e.g., a DOI) for your dataset using your institutional repository or a service like Zenodo.

- Structure your metadata using a plant-specific schema (e.g., MIAPPE, the Minimum Information About a Plant Phenotyping Experiment).

- Deposit the metadata, along with the PID and a pointer to the data location, into a public catalog like DataPLANT or e!DAL-PGP.

Q2: I have shared a genomic sequence dataset with a public accession number, but a collaborator's AI pipeline cannot access it programmatically (without manual login). How do I fix this? A: This violates the "Accessible" pillar. The data should be retrievable by their identifier using a standardized, open, and free protocol.

- Protocol: Ensure automated access is enabled:

- Verify the data repository supports standard communication protocols (e.g., HTTP, FTP).

- If authentication is necessary (e.g., for sensitive pre-publication data), provide a method for getting credentials (like OAuth tokens) that can be embedded in scripts. Ideally, share metadata openly, and specify access conditions clearly in the metadata.

- Test accessibility using a command-line tool like `curl` or `wget` with the dataset's URI.
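The same accessibility check can be scripted so that it runs inside a pipeline. The sketch below is a minimal illustration using only the Python standard library; the function name `is_retrievable` and the injectable `opener` argument are assumptions made for this example, and the URI in the usage comment is a placeholder.

```python
import urllib.error
import urllib.request


def is_retrievable(uri, opener=urllib.request.urlopen, timeout=10):
    """Return True if a HEAD request to `uri` succeeds without credentials."""
    request = urllib.request.Request(uri, method="HEAD")
    try:
        with opener(request, timeout=timeout) as response:
            return 200 <= response.status < 300
    except urllib.error.URLError:
        # HTTPError (e.g. 401/403 login required, 404 broken link) is a
        # subclass of URLError, so both HTTP and network failures land here.
        return False


# Usage (performs a real network call, so it is left commented out):
# print(is_retrievable("https://example.org/dataset/123"))
```

A `False` result only says that anonymous retrieval failed; the metadata should still state how access can be requested.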

Q3: My metabolomics data is in a proprietary instrument format. How can I make it "Interoperable" with public plant biology knowledge graphs? A: Interoperability requires using shared, formal languages and vocabularies. Proprietary formats are a major barrier.

- Protocol: Convert and annotate your data:

- Convert Data: Export raw data to an open, non-proprietary format (e.g., mzML for mass spectrometry). Use tools like ProteoWizard's msConvert.

- Use Ontologies: Annotate your dataset using terms from plant science ontologies (e.g., Plant Ontology (PO), Plant Trait Ontology (TO), Chemical Entities of Biological Interest (ChEBI)).

- Link Identifiers: Where possible, use standard identifiers for samples (BioSample ID), genes (NCBI Gene ID), and compounds (PubChem CID) to link your data to external resources.
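As an illustration of the identifier-linking step, the sketch below expands compact identifiers into resolvable URIs. It assumes the OBO Foundry PURL pattern for ontology terms and the public PubChem compound URL pattern; the helper name `curie_to_uri`, the `pubchem.compound` prefix, and the sample record are hypothetical.

```python
OBO_PREFIXES = {"PO", "TO", "CHEBI", "PATO"}


def curie_to_uri(curie):
    """Expand a compact identifier such as 'PO:0000003' into a URI."""
    prefix, sep, local_id = curie.partition(":")
    if not sep or not local_id:
        raise ValueError(f"not a compact identifier: {curie!r}")
    if prefix.upper() in OBO_PREFIXES and local_id.isdigit():
        return f"http://purl.obolibrary.org/obo/{prefix.upper()}_{local_id}"
    if prefix == "pubchem.compound" and local_id.isdigit():
        return f"https://pubchem.ncbi.nlm.nih.gov/compound/{local_id}"
    raise ValueError(f"no URI pattern known for prefix {prefix!r}")


# Hypothetical annotation record for one metabolomics sample.
sample_annotation = {
    "sample": "leaf_extract_01",
    "plant_structure": curie_to_uri("PO:0000003"),
    "compound": curie_to_uri("pubchem.compound:338"),  # example CID only
}
print(sample_annotation)
```

Storing full URIs rather than free-text names is what lets a knowledge graph join these records to external resources.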

Q4: What specific information must be included to ensure my transcriptomics dataset is "Reusable" for a machine learning project? A: Reusability depends on rich, accurate context (metadata) and a clear license. The AI model needs to understand the data's origin and constraints.

- Protocol: Apply the "data provenance" and "license" criteria:

- Document the experimental design meticulously using the MIAPPE checklist.

- Describe the plant material (genotype, growth conditions, treatments) in detail.

- Specify the computational workflow (software, versions, parameters) used to process raw reads into gene expression counts.

- Attach a clear, permissive usage license (e.g., CC0 or CC BY 4.0 for data and metadata; MIT for any accompanying code).

Table 1: Comparison of Major Repositories for Plant FAIR Data

| Repository | Primary Data Type | PID Assigned | Metadata Standard | Access Protocol | License Recommendation |

|---|---|---|---|---|---|

| European Nucleotide Archive (ENA) | Genomics, Sequences | Yes (Accession) | INSDC, MIxS | FTP/API | User-defined |

| NCBI BioProject/BioSample | Project & Sample Metadata | Yes (Accession) | NCBI Standards | HTTP/API | CC0 for metadata |

| Zenodo | Any (multidisciplinary) | Yes (DOI) | Generic, customizable | HTTP/API | Multiple choices |

| e!DAL-PGP | Plant Phenomics/Genomics | Yes (DOI, PGP-ID) | MIAPPE-compatible | HTTP/API | User-defined |

| Araport | Arabidopsis Genomics | Yes (Accession) | Jaiswal lab standards | HTTP/API | CC-BY for data |

Key Experimental Protocol: Submitting a FAIR Plant Phenomics Dataset

Objective: To publish a root architecture image dataset in compliance with FAIR principles for use in AI model training.

Methodology:

- Data Collection & Curation: Organize raw image files. Annotate with basic context (genotype, treatment, date) in a README file.

- Metadata Creation: Create a spreadsheet structured according to the MIAPPE v2.0 core checklist. Populate fields for Investigation, Study, Assay, and data file links.

- Vocabulary Annotation: Map descriptive terms (e.g., "root length", "Col-0 ecotype") to ontology IDs (PO:0020125, TO:0000227, EC:69017) using ontology lookup services.

- Repository Submission: Upload (a) the metadata spreadsheet and (b) the image files to a chosen repository (e.g., e!DAL-PGP). The repository will mint a Persistent Identifier (DOI).

- License Specification: Assign a Creative Commons Attribution 4.0 (CC-BY) license to allow reuse with attribution.

- Provenance Logging: Document the imaging platform, software, and analysis scripts in a machine-readable workflow language (e.g., CWL, Snakemake) and include it in the deposit.

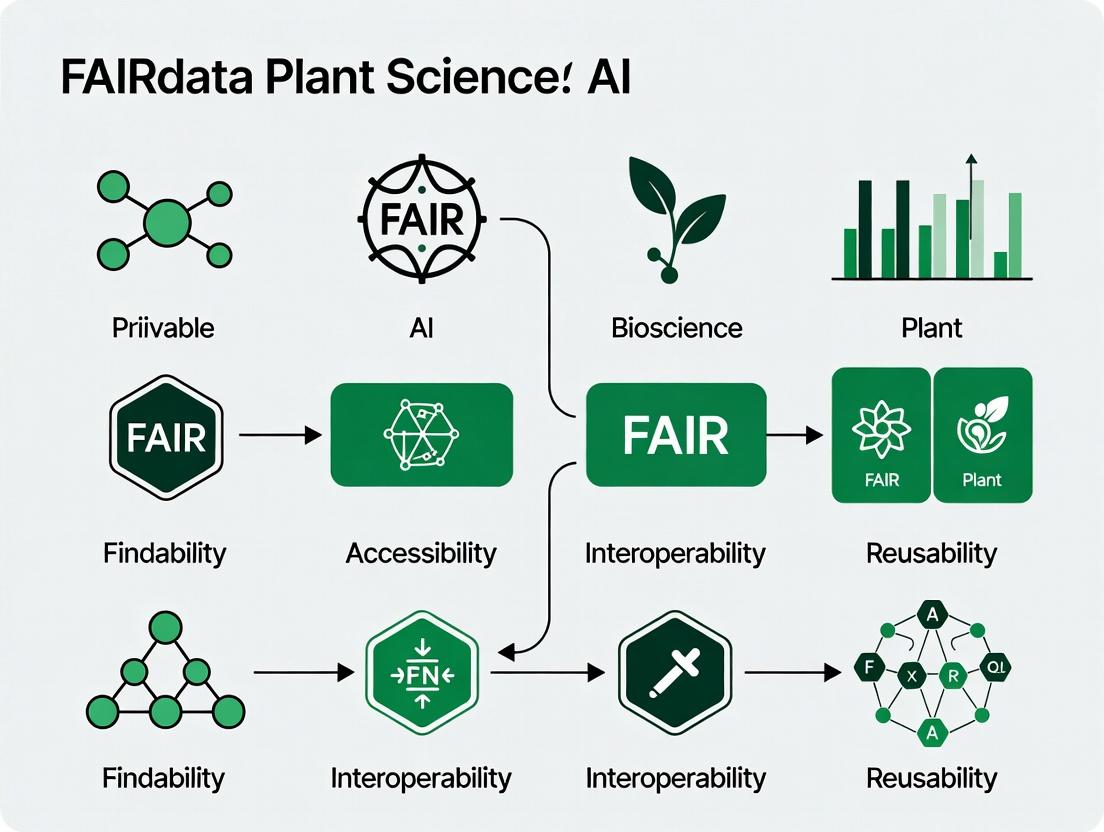

Visualizations

Diagram 1: FAIR Data Implementation Workflow for Plant Science

Diagram 2: FAIR Data Pillars and Technical Requirements

The Scientist's Toolkit: Research Reagent Solutions for FAIR Plant Data

Table 2: Essential Tools for Creating FAIR Plant Science Data

| Item | Category | Function in FAIRification |

|---|---|---|

| MIAPPE Checklist | Metadata Standard | Defines the minimum information required to make plant phenotyping data reusable. |

| Crop Ontology / Plant Ontology (PO) | Vocabulary | Provides standardized terms for describing plant structures and growth stages. |

| Plant Trait Ontology (TO) | Vocabulary | Provides standardized terms for describing measurable plant traits. |

| ISA-Tab Tools | Metadata Formatting | Framework for collecting investigation, study, and assay metadata in a structured format. |

| CyVerse Data Store | Repository Infrastructure | Provides scalable storage and computation, with PIDs, for plant science data. |

| Snakemake / Nextflow | Workflow Management | Records data provenance by encapsulating the entire analysis pipeline in executable code. |

| DataCite | PID Service | Issues Digital Object Identifiers (DOIs) for datasets, a key component of Findability. |

| FAIR-Checker Tools | Validation | Automated tools (e.g., F-UJI) to assess the FAIRness of a dataset against metrics. |

Technical Support Center

Troubleshooting Guides

Issue 1: Low Model Accuracy on Heterogeneous Datasets

- Symptoms: Model performance degrades when trained on data pooled from multiple labs or field trials. Validation accuracy is high on individual datasets but poor on cross-dataset tests.

- Diagnosis: This is typically caused by batch effects and inconsistent metadata annotation. The model is learning site-specific artifacts rather than generalizable biological features.

- Resolution:

- Apply computational harmonization techniques (e.g., ComBat, percentile normalization) before model training.

- Implement a stringent, controlled-vocabulary based metadata template (e.g., MIAPPE - Minimum Information About a Plant Phenotyping Experiment) for all data entry.

- Use domain adaptation or adversarial training methods within your ML architecture to force the model to learn invariant features.
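To make the harmonization idea concrete, here is a deliberately simple stand-in: per-batch standardization (z-scoring each measurement within its source batch). This is not ComBat, which additionally models covariates and shrinks batch estimates; the function name and the toy values are assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean, pstdev


def standardize_within_batch(values, batches):
    """Z-score each value against the mean and spread of its own batch."""
    grouped = defaultdict(list)
    for value, batch in zip(values, batches):
        grouped[batch].append(value)
    stats = {b: (mean(v), pstdev(v)) for b, v in grouped.items()}
    result = []
    for value, batch in zip(values, batches):
        center, spread = stats[batch]
        # A batch with zero spread carries no within-batch signal.
        result.append(0.0 if spread == 0 else (value - center) / spread)
    return result


# Toy example: lab B reports the same pattern with a constant offset.
values = [10, 12, 14, 110, 112, 114]
batches = ["lab_A", "lab_A", "lab_A", "lab_B", "lab_B", "lab_B"]
print(standardize_within_batch(values, batches))
```

Note that this also removes real biological differences whenever a batch coincides with a treatment or genotype, which is one reason balanced designs and shared reference genotypes matter.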

Issue 2: Inability to Locate or Reuse Existing Datasets

- Symptoms: Spending excessive time searching for relevant public data. Datasets, when found, lack the necessary protocols or context to be usable.

- Diagnosis: Data is not Findable or Accessible due to poor repository choices, absent unique identifiers (DOIs), or non-standard keywords.

- Resolution:

- Deposit data in FAIR-compliant, domain-specific repositories (e.g., CyVerse Data Commons, EMBL-EBI BioStudies).

- Assign a persistent identifier (DOI) to every dataset.

- Use rich, standardized metadata with ontologies (e.g., Plant Ontology, Trait Ontology) in the dataset description.

Issue 3: Failed Reproduction of Published ML Analysis

- Symptoms: Code runs but produces different results or fails on a different computing environment.

- Diagnosis: Lack of Interoperability and Reusability due to missing code dependencies, unspecified software versions, or undocumented pre-processing steps.

- Resolution:

- Package the analysis in a container (e.g., Docker, Singularity).

- Use dependency management tools (e.g., conda environment.yaml, pip requirements.txt).

- Provide a complete, version-controlled computational workflow (e.g., using Nextflow, Snakemake) that documents every transformation step.
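Alongside containers and lock files, it can help to store a small machine-readable snapshot of the environment next to each result. The sketch below uses only the Python standard library; the function name `snapshot_environment` and the package list are assumptions for illustration.

```python
import json
import platform
from importlib import metadata


def snapshot_environment(packages):
    """Record the interpreter, platform, and installed package versions."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in this environment
    return {
        "python": platform.python_version(),
        "platform": platform.platform(),
        "packages": versions,
    }


# The package names here are examples; list whatever the analysis imports.
print(json.dumps(snapshot_environment(["numpy", "scikit-learn"]), indent=2))
```

Writing this JSON into the output directory of every run gives later users a quick way to see which versions produced a given result.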

Frequently Asked Questions (FAQs)

Q1: We have legacy data from multiple phenotyping systems with different file formats. How can we make this interoperable for a unified analysis?

A: Create an Extract, Transform, Load (ETL) pipeline. Map all source data fields to a common data model (e.g., the ISA (Investigation-Study-Assay) framework). Convert images to a standard format (e.g., PNG/TIFF with consistent metadata embedding). Use a tool like Pandas for tabular data to enforce consistent column names and units.
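The "transform" step of such a pipeline can be sketched as a mapping table plus one function. The example below uses plain dictionaries from the standard library rather than Pandas to stay dependency-free; the source column names, target names, and conversion factors are all hypothetical.

```python
# source column -> (harmonized column, multiplier to reach the target unit)
COLUMN_MAP = {
    "PlantHeight_mm": ("plant_height_cm", 0.1),
    "height (cm)": ("plant_height_cm", 1.0),
    "Genotype": ("genotype", None),
    "line": ("genotype", None),
}


def harmonize_row(row):
    """Rename columns and convert units for one record from any source."""
    out = {}
    for source_name, value in row.items():
        if source_name not in COLUMN_MAP:
            continue  # unmapped fields are dropped; a real pipeline might log them
        target_name, factor = COLUMN_MAP[source_name]
        if factor is None:
            out[target_name] = value
        else:
            out[target_name] = round(float(value) * factor, 6)
    return out


print(harmonize_row({"PlantHeight_mm": "125", "Genotype": "Col-0"}))
print(harmonize_row({"height (cm)": "12.5", "line": "Col-0"}))
```

Keeping the mapping in a data structure rather than in code makes it easy to publish alongside the harmonized dataset.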

Q2: What is the minimal metadata required to make my plant imaging dataset FAIR? A: At minimum, you must document:

- Biological Entity: Species, genotype, accession number.

- Growth Conditions: Medium, light (intensity, photoperiod), temperature, humidity.

- Experimental Design: Treatment, replicates, randomization scheme.

- Imaging Protocol: Sensor type, resolution, wavelength/band, camera settings.

- Data Provenance: Who generated it, when, and the raw data location.
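A checklist like the one above can be enforced before deposit with a small validator. The sketch below is an assumption-laden illustration: the section and field names are invented for this example and are not the official MIAPPE field names.

```python
REQUIRED_FIELDS = {
    "biological_entity": ["species", "genotype"],
    "growth_conditions": ["medium", "light_intensity", "photoperiod",
                          "temperature", "humidity"],
    "experimental_design": ["treatment", "replicates", "randomization"],
    "imaging_protocol": ["sensor_type", "resolution"],
    "provenance": ["creator", "date", "raw_data_location"],
}


def missing_fields(metadata):
    """Return 'section.field' names that are absent or empty."""
    missing = []
    for section, fields in REQUIRED_FIELDS.items():
        block = metadata.get(section, {})
        for field in fields:
            if block.get(field) in (None, "", []):
                missing.append(f"{section}.{field}")
    return missing


draft = {
    "biological_entity": {"species": "Arabidopsis thaliana", "genotype": "Col-0"},
    "provenance": {"creator": "Example Lab", "date": "2024-01-15"},
}
print(missing_fields(draft))
```

Running the check in the submission script means incomplete records are caught before a DOI is minted.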

Q3: Which file format is best for sharing annotated plant image datasets for ML? A: For large-scale projects, use COCO (Common Objects in Context) format. It is the industry standard for object detection tasks, supporting polygon annotations for leaves, roots, pests, etc. For simpler classification tasks, a structured directory tree with a CSV manifest file linking image filenames to labels is sufficient.
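For orientation, a minimal COCO-style annotation file for a single image could be assembled as follows. This sketch covers bounding boxes only (polygon `segmentation` entries are omitted), and the file name, image size, and category names are made up for illustration.

```python
import json


def make_coco(image_name, width, height, boxes, categories):
    """boxes: list of (category_name, x, y, w, h) in pixels, origin top-left."""
    category_ids = {name: i + 1 for i, name in enumerate(categories)}
    annotations = []
    for index, (name, x, y, w, h) in enumerate(boxes, start=1):
        annotations.append({
            "id": index,
            "image_id": 1,
            "category_id": category_ids[name],
            "bbox": [x, y, w, h],  # COCO uses [x, y, width, height]
            "area": w * h,
            "iscrowd": 0,
        })
    return {
        "images": [{"id": 1, "file_name": image_name,
                    "width": width, "height": height}],
        "annotations": annotations,
        "categories": [{"id": i, "name": n} for n, i in category_ids.items()],
    }


coco = make_coco(
    "plant_001.png", 640, 480,
    boxes=[("rosette", 100, 80, 200, 180), ("yellow_leaf", 150, 120, 40, 30)],
    categories=["rosette", "yellow_leaf"],
)
print(json.dumps(coco, indent=2))
```

For classification-only datasets, the CSV manifest described above remains the lighter option.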

Q4: How do we handle inconsistent trait naming (e.g., "plant_height" vs. "canopy_height") across datasets?

A: Map all trait names to terms from a public ontology. Use the Plant Trait Ontology (TO) and Crop Ontology. For example, both names should map to the URI for TO:0000207 (plant height). This creates semantic interoperability, allowing machines to understand that the terms are equivalent.
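A lookup table is often enough to start with. The sketch below maps local column names to the term cited above (TO:0000207); the synonym list and the normalization rules are assumptions, and in practice each mapping should be confirmed in an ontology browser.

```python
# Local spellings -> ontology term. Both names map to the same term,
# following the example in the answer above.
TRAIT_TERMS = {
    "plant_height": "TO:0000207",
    "plantheight": "TO:0000207",
    "canopy_height": "TO:0000207",
    "canopyheight": "TO:0000207",
}


def to_trait_term(column_name):
    """Return the ontology ID for a local trait name, or None if unmapped."""
    key = column_name.strip().lower().replace(" ", "_").replace("-", "_")
    return TRAIT_TERMS.get(key)


for name in ["PlantHeight", "Canopy Height", "leaf_count"]:
    print(name, "->", to_trait_term(name))
```

Returning `None` for unknown names, rather than guessing, keeps unmapped traits visible for manual curation.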

Table 1: Impact of Data Silos on Model Generalizability

| Study Focus | # of Source Datasets | Accuracy Within Dataset | Cross-Dataset Accuracy (No Harmonization) | Cross-Dataset Accuracy (With FAIR Harmonization) |

|---|---|---|---|---|

| Leaf Disease Classification | 5 (public repositories) | 94-98% | 62-71% | 89-92% |

| Root Architecture Phenotyping | 3 (different labs) | 88-95% | 58% | 85% |

| Drought Stress Prediction | 4 (field trials) | 91% | 65% | 87% |

Table 2: Time Cost of Non-FAIR Data Practices

| Task | Time with Ad-Hoc Data (Hours) | Time with FAIR-Aligned Data (Hours) | Efficiency Gain |

|---|---|---|---|

| Data discovery & acquisition for literature review | 40-60 | 5-10 | ~80% |

| Data cleaning & unification for meta-analysis | 120+ | 20-40 | ~80% |

| Reproducing a peer's computational analysis | 80+ | < 8 | ~90% |

Experimental Protocol: Creating a FAIR Plant Image Dataset for ML

Objective: To generate a reusable, annotated image dataset of Arabidopsis thaliana under nutrient stress for training a convolutional neural network (CNN).

Materials: (See Scientist's Toolkit below)

Methodology:

- Experimental Design & Metadata Template:

- Define the study using the ISA framework. Create a digital metadata worksheet compliant with the MIAPPE v2.0 checklist.

- Pre-register the study design in a repository like the Open Science Framework (OSF).

- Image Acquisition:

- Grow A. thaliana (Col-0 and mutant lines) under controlled conditions (+/- phosphate).

- Capture RGB top-view images daily at a fixed time using a standardized imaging box. Embed key metadata (timestamp, genotype, treatment) into the image file header using EXIF tags.

- Name files using a consistent scheme: `[Species]_[Genotype]_[Treatment]_[Replicate]_[Date].tif`.
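A consistent naming scheme pays off when file names can be parsed back into metadata automatically. The sketch below assumes that no field contains an underscore and that the date is written as `YYYYMMDD`; both are assumptions, as is the example file name.

```python
import re
from datetime import datetime

FILENAME_PATTERN = re.compile(
    r"^(?P<species>[^_]+)_(?P<genotype>[^_]+)_(?P<treatment>[^_]+)"
    r"_(?P<replicate>[^_]+)_(?P<date>\d{8})\.tif$"
)


def parse_image_name(filename):
    """Split a file name that follows the naming scheme into its fields."""
    match = FILENAME_PATTERN.match(filename)
    if match is None:
        raise ValueError(f"file name does not follow the scheme: {filename!r}")
    fields = match.groupdict()
    # Normalize the date to ISO 8601; strptime also rejects impossible dates.
    fields["date"] = datetime.strptime(fields["date"], "%Y%m%d").date().isoformat()
    return fields


print(parse_image_name("Athaliana_Col-0_lowP_R03_20240115.tif"))
```

Rejecting non-conforming names at ingest time is usually cheaper than repairing a manifest later.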

- Image Annotation:

- Use an annotation tool such as LabelImg or CVAT.

- Annotate objects (rosettes, yellow leaves) using bounding boxes or polygons.

- Export annotations in COCO JSON format. Link each annotation to ontology terms (e.g., `PO:0000003` for whole plant, `PATO:0000321` for yellow color).

- Data Publication:

- Store raw images, annotation files, and metadata worksheet in a structured directory.

- Create a `README.md` file detailing the project, file structure, and licensing.

- Upload the entire dataset to a FAIR repository (e.g., CyVerse), which will mint a DOI.

- Share the code for analysis in a public Git repository (e.g., GitHub, GitLab) with an open-source license, linking to the dataset DOI.

Visualizations

Diagram 1: FAIR Data Workflow for Plant AI

Diagram 2: The Plant AI Data Bottleneck

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function | Example Product/Standard |

|---|---|---|

| Controlled Environment System | Provides standardized growth conditions to minimize non-genetic variance, essential for reproducible phenomics. | Percival growth chamber, Conviron walk-in room. |

| Standardized Imaging Setup | Ensures consistent lighting, angle, and resolution for image-based phenotyping, critical for ML. | LemnaTec Scanalyzer, DIY imaging box with calibrated LEDs. |

| Metadata Management Software | Tools to create and manage FAIR-compliant experimental metadata. | ISAcreator, BRC Metadatabase. |

| Ontology Lookup Service | Provides standardized terms for traits, experimental variables, and anatomical parts. | Planteome Browser, Ontology Lookup Service (OLS). |

| Data Harmonization Tool | Computational package to correct for batch effects across datasets. | sva R package (ComBat), scikit-learn transformers. |

| Containerization Platform | Packages code, dependencies, and environment to ensure computational reproducibility. | Docker, Singularity/Apptainer. |

| FAIR Data Repository | Public repository that assigns DOIs and supports rich metadata for long-term data preservation. | CyVerse Data Commons, EMBL-EBI BioImage Archive. |

Technical Support Center: Troubleshooting Guides & FAQs

FAIR Data Curation & Management

Q1: Our genomic variant calling pipeline produces VCF files, but we struggle to make them Findable and Interoperable. What are the minimum metadata requirements for submission to a public repository?

A: For submission to a repository such as the European Variation Archive (EVA), you must provide essential contextual metadata. (NCBI's dbSNP no longer accepts non-human submissions, so plant variants are typically deposited in EVA.) The following table summarizes the required fields:

| Metadata Field | Description | Example/Format |

|---|---|---|

| Study Type | The design of the study. | Control Set, Genetic variation |

| Project Name | A unique identifier for your project. | TomatoPanGenome2024 |

| Sample Information | Per sample: alias, taxonomy ID, sex, organism. | Solanum lycopersicum (taxid:4081) |

| Assay Information | Sequencing technology, library layout, library source. | ILLUMINA, PAIRED, GENOMIC |

| Analysis Files | Processed data file types (VCF, BAM). | VCF v4.3 |

| Reference Genome | Used for alignment & variant calling. | SL4.0 (GCA_000188115.5) |

Protocol for VCF FAIRification:

- Validate: Use `bcftools stats` or `vcf-validator` to ensure file integrity.

- Annotate: Add functional consequences using SnpEff with the correct genome database (e.g., `SnpEff -v Solanum_lycopersicum`).

- Generate Metadata: Create a structured `README.txt` or `data_dictionary.json` file compliant with MIAPPE (Minimum Information About a Plant Phenotyping Experiment) and DwC (Darwin Core) standards.

- Persistent Identifier: Obtain a persistent identifier upon submission: a DOI from a repository like Zenodo or CyVerse, or a stable accession from EVA.
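Before running a full validator, a quick header pre-check can catch the most common omissions. The sketch below is not a substitute for `vcf-validator`; it only looks at three things, and treating a missing `##reference=` line as a problem is a choice made here for FAIR purposes rather than a requirement of the VCF specification.

```python
FIXED_COLUMNS = ["#CHROM", "POS", "ID", "REF", "ALT", "QUAL", "FILTER", "INFO"]


def check_vcf_header(lines):
    """Return a list of human-readable problems found in a VCF header."""
    lines = [line.rstrip("\n") for line in lines]
    meta = [line for line in lines if line.startswith("##")]
    column_lines = [line for line in lines if line.startswith("#CHROM")]
    problems = []
    if not lines or not lines[0].startswith("##fileformat=VCFv"):
        problems.append("first line should declare ##fileformat=VCFv...")
    if not any(line.startswith("##reference=") for line in meta):
        problems.append("no ##reference= line naming the reference genome")
    if not column_lines:
        problems.append("missing #CHROM column header line")
    elif column_lines[0].split("\t")[:8] != FIXED_COLUMNS:
        problems.append("the eight fixed columns are missing or out of order")
    return problems


header = [
    "##fileformat=VCFv4.3\n",
    "##reference=file:///path/to/reference.fa\n",  # placeholder path
    "#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO\n",
]
print(check_vcf_header(header))
```

In practice the function would be fed the lines of a file opened with `open(path)` (or `gzip.open(path, "rt")` for compressed VCFs).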

Q2: When integrating transcriptomic (RNA-seq) data from multiple public studies for meta-analysis, expression values are not comparable. How do we standardize them?

A: The primary issues are normalization methods and batch effects. Follow this protocol for interoperability:

Protocol for RNA-seq Data Integration:

- Data Acquisition: Download raw FASTQ or processed count matrices from repositories like ArrayExpress or SRA. Always prefer raw reads.

- Uniform Reprocessing: Re-process all raw FASTQ files through the same pipeline.

  - Quality Control: `FastQC` and `MultiQC`.

  - Alignment: Use a splice-aware aligner (e.g., `STAR`) against a common reference genome.

  - Quantification: Generate read counts per gene using `featureCounts` (from the Subread package) with a common gene annotation file (GTF).

- Normalization & Batch Correction: Use R/Bioconductor packages.

  - Load all count matrices into a `DESeq2` `DESeqDataSet` object. Perform median-of-ratios normalization (`DESeq2::estimateSizeFactors`).

  - For removing study-specific batch effects, use `ComBat-seq` (for counts) or `sva` on variance-stabilized transformed data.
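To show what median-of-ratios normalization computes, here is a simplified pure-Python re-implementation for illustration only. For real analyses, use `DESeq2::estimateSizeFactors`; the sample names and counts below are toy values.

```python
import math
from statistics import median


def size_factors(counts):
    """counts: dict of sample name -> list of raw counts (same gene order)."""
    samples = list(counts)
    n_genes = len(counts[samples[0]])
    # Log geometric mean per gene; genes with any zero count are skipped.
    log_geo_means = []
    for g in range(n_genes):
        gene_counts = [counts[s][g] for s in samples]
        if all(c > 0 for c in gene_counts):
            log_geo_means.append(
                sum(math.log(c) for c in gene_counts) / len(gene_counts)
            )
        else:
            log_geo_means.append(None)
    factors = {}
    for s in samples:
        log_ratios = [
            math.log(counts[s][g]) - log_geo_means[g]
            for g in range(n_genes)
            if log_geo_means[g] is not None
        ]
        # The size factor is the median ratio of a sample to the pseudo-reference.
        factors[s] = math.exp(median(log_ratios))
    return factors


# Toy data: sample B was sequenced twice as deeply as sample A.
toy = {"A": [10, 20, 30, 0], "B": [20, 40, 60, 5]}
print(size_factors(toy))
```

Dividing each sample's counts by its size factor puts the samples on a common scale; batch correction is a separate, later step.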

Q3: High-throughput plant phenomics images from different controlled-environment chambers have inconsistent lighting, causing erroneous trait extraction. How do we correct this?

A: Implement a computational image normalization workflow. Essential tools include PlantCV and OpenCV.

Protocol for Phenomics Image Normalization:

- Include Color Reference: In every imaging session, place a standard color calibration chart (e.g., X-Rite ColorChecker) in the field of view.

- Pre-processing with PlantCV:

  - Correct Non-uniform Illumination: Use background subtraction (`plantcv.transform.nonuniform_illumination`).

  - Color Correction: Extract the ColorChecker region. Calculate a color transformation matrix to the standard chart values (`plantcv.transform.correct_color`).

  - Apply Correction: Apply the matrix to all images from that session.

- Trait Extraction: Proceed with segmentation and trait analysis (area, height, color indices) on normalized images.
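The idea behind chart-based color correction can be shown with a much simpler technique: per-channel gain correction from a single neutral gray patch. This is a stand-in for illustration, equivalent to a diagonal correction matrix, whereas PlantCV fits a full transformation from all chart patches. The pixel values below are invented.

```python
def channel_gains(measured_patch, reference_patch):
    """Per-channel multipliers that map the measured patch to its reference."""
    return tuple(ref / meas for meas, ref in zip(measured_patch, reference_patch))


def apply_gains(pixel, gains):
    """Scale one RGB pixel and clip the result to the 8-bit range."""
    return tuple(min(255, max(0, round(v * g))) for v, g in zip(pixel, gains))


# Hypothetical values: the gray patch should read (120, 120, 120),
# but this session's lighting gives it a blue cast.
measured_gray = (100, 120, 140)
reference_gray = (120, 120, 120)

gains = channel_gains(measured_gray, reference_gray)
print(apply_gains(measured_gray, gains))   # the patch itself after correction
print(apply_gains((50, 200, 70), gains))   # an arbitrary leaf pixel
```

The gains are estimated once per imaging session and then applied to every image from that session, mirroring the "Apply Correction" step.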

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function | Example/Application |

|---|---|---|

| Standard ColorChecker Chart | Provides a consistent color reference for calibrating imaging systems across different devices, times, and lighting conditions. | Phenomics image normalization for accurate RGB-based stress detection. |

| Universal DNA/RNA Extraction Kit (Magnetic Bead-based) | High-quality, consistent nucleic acid isolation from diverse plant tissues (leaf, root, seed) for downstream sequencing. | Preparing genomic DNA for WGS or RNA for transcriptomics across a population. |

| Indexed Adapter Kits (PCR-Free) | Unique molecular barcodes (indexes) for multiplexing samples in a single sequencing lane, reducing batch effects. | Preparing whole-genome sequencing libraries for hundreds of plant samples. |

| Stable Isotope-Labeled Internal Standards | Quantified chemical standards used as spikes in samples for absolute quantification in metabolomics. | LC-MS/MS analysis for phytohormones (e.g., labeled ABA, JA) ensuring data interoperability. |

| Common Reference Genotype Seed Stock | A genetically uniform plant line grown and measured alongside experimental lines as a biological control. | Normalizing phenotypic data for environmental variance across growth batches or facilities. |

Visualizations

Diagram Title: FAIR Plant Science Data Lifecycle

Diagram Title: RNA-seq Meta-Analysis Troubleshooting

Technical Support Center: Troubleshooting & FAQs

This support center provides guidance for researchers encountering issues when integrating plant science datasets with biomedical and pharmaceutical research workflows, operating within the FAIR (Findable, Accessible, Interoperable, Reusable) data framework.

FAQs & Troubleshooting Guides

Q1: I cannot find relevant plant metabolite datasets that use standardized identifiers compatible with human metabolic pathways.

- A: This is a common interoperability (the "I" in FAIR) challenge. Many plant databases use traditional or species-specific nomenclature.

- Solution: Utilize cross-referencing resources. Convert plant metabolite names to universal chemical identifiers.

- Protocol:

- Extract your list of plant compounds from your source (e.g., PlantCyc, KNApSAcK).

- Use a batch search on the PubChem database to find corresponding Canonical SMILES or InChIKeys.

- Map these identifiers to human pathway databases (e.g., KEGG, Reactome) using their respective ID mapping tools.

- For novel compounds, consider computational tools like NPASS for bioactivity prediction to generate preliminary links.

Q2: My orthology analysis linking plant and human genes yields too many false-positive functional associations.

- A: This often results from relying solely on sequence similarity without considering context.

- Solution: Implement a multi-evidence orthology pipeline.

- Protocol:

- Sequence Orthology: Use tools like OrthoFinder or InParanoid for initial clustering.

- Domain Architecture Check: Validate hits using Pfam or InterProScan to ensure conserved domains.

- Contextual Validation: Check whether the gene occupies an equivalent position in a conserved pathway or network (e.g., by comparing Plant Reactome with Human Reactome).

- Expression Context: If data exists, compare expression patterns in stress/response modules (non-conserved patterns can indicate functional divergence).

Q3: How do I ensure my published plant dataset is "Reusable" for a drug discovery team with no botanical expertise?

- A: Reusability depends on rich, structured metadata and clear usage licenses.

- Solution: Adopt domain-agnostic metadata schemas.

- Protocol:

- Use a minimum information standard (e.g., MIAPPE for plant phenotyping) as a base.

- Augment with broad biomedical ontologies:

- Use NCBITaxon for organism names.

- Use ChEBI identifiers for chemicals.

- Use GO (Gene Ontology) for molecular functions/processes.

- Use Uberon for anatomical structures where applicable.

- Provide a clear, machine-readable data availability statement with a permanent identifier (e.g., DOI) and a license (e.g., CC0 or CC BY 4.0 for data; MIT for any accompanying code).

Q4: When building a cross-kingdom network, how do I handle missing data for key signaling components?

- A: Data incompleteness is a major hurdle. A multi-source inference strategy is required.

- Solution: Employ homology-based inference and literature mining.

- Protocol:

- Identify the "missing" protein or compound in your plant model.

- Perform a BLASTP search against the Arabidopsis thaliana or other reference genome to find potential homologs.

- Use STRING-db (which includes some plant data) to examine potential interaction partners of the homolog.

- Use text-mining tools (e.g., PolySearch, PlantConnectome) to find published evidence for the suspected interaction.

Table 1: Core Databases for Linking Plant and Biomedical Data

| Database Name | Primary Domain | Key Identifier(s) Used | Direct Cross-Reference To | Use Case in Pharma Linkage |

|---|---|---|---|---|

| PlantCyc | Plant Metabolic Pathways | Enzyme Commission (EC), CAS | PubChem, KEGG | Discovery of plant biosynthetic enzymes for compound production |

| KNApSAcK Core | Plant Metabolites | InChIKey, SMILES | PubChem, ChEBI | Screening plant metabolites for bioactivity against human targets |

| PhytoMine (Phytozome) | Plant Genomics | Phytozome ID, Gene Symbol | Ensembl (via orthology), GO | Identifying plant orthologs of human disease genes |

| CMAUP | Plant-Based Therapeutics | PubChem CID, ZINC ID | PubChem, DrugBank | Repurposing plant compounds for drug discovery |

| Plant Reactome | Plant Signaling Pathways | Reactome ID, UniProt | Human Reactome | Comparative pathway analysis for conserved stress responses |

Experimental Protocol: Identifying Bioactive Plant Compounds via Target Prediction

Title: In Silico Screening of Plant Metabolites for Human Target Affinity

Objective: To computationally prioritize plant-derived compounds for experimental testing against a human protein target (e.g., TNF-alpha, a key inflammation marker).

Methodology:

- Compound Library Curation: Download a dataset of plant metabolites from CMAUP or KNApSAcK. Filter for drug-like properties (e.g., using Lipinski's Rule of Five) via RDKit or Open Babel.

- Target Preparation: Retrieve the 3D crystal structure of the human target protein (e.g., PDB ID: 2AZ5 for TNF-alpha) from the Protein Data Bank (PDB). Prepare the protein (remove water, add hydrogens, assign charges) using software like AutoDock Tools or UCSF Chimera.

- Molecular Docking: Perform virtual screening. Use docking software such as AutoDock Vina or GNINA. Set the search space (grid box) to encompass the target's known active site.

- Analysis & Prioritization: Rank compounds based on docking score (binding affinity in kcal/mol). Visually inspect the top 20-50 poses for key binding interactions (hydrogen bonds, hydrophobic contacts). Cross-reference top hits with known bioactivity data in PubChem BioAssay.

- Validation: Select top-ranked, novel compounds for in vitro assay (e.g., ELISA-based TNF-alpha inhibition assay).
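The filtering and ranking logic of this protocol can be sketched without any cheminformatics dependency, provided the descriptors have already been computed (for example with RDKit). All compound names, descriptor values, and docking scores below are invented, and allowing one Rule-of-Five violation is a common convention adopted here as an assumption.

```python
def lipinski_violations(compound):
    """Count how many of Lipinski's four criteria a compound breaks."""
    return sum([
        compound["mol_weight"] > 500,   # molecular weight in Da
        compound["logp"] > 5,           # octanol-water partition coefficient
        compound["h_donors"] > 5,       # hydrogen-bond donors
        compound["h_acceptors"] > 10,   # hydrogen-bond acceptors
    ])


def prioritize(compounds, max_violations=1):
    """Keep drug-like compounds, best (most negative) docking score first."""
    kept = [c for c in compounds if lipinski_violations(c) <= max_violations]
    return sorted(kept, key=lambda c: c["docking_score"])


library = [
    {"name": "cmpd_A", "mol_weight": 320, "logp": 2.1, "h_donors": 2,
     "h_acceptors": 5, "docking_score": -7.2},
    {"name": "cmpd_B", "mol_weight": 780, "logp": 6.3, "h_donors": 7,
     "h_acceptors": 12, "docking_score": -9.5},
    {"name": "cmpd_C", "mol_weight": 410, "logp": 3.0, "h_donors": 1,
     "h_acceptors": 6, "docking_score": -8.1},
]
for compound in prioritize(library):
    print(compound["name"], compound["docking_score"])
```

Docking scores are only a prioritization heuristic, which is why the protocol ends with in vitro validation.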

Visualizations

Diagram 1: Workflow for FAIR Plant-Biomedical Data Integration

Diagram 2: Cross-Kingdom Signaling Pathway: Jasmonate & Inflammation Parallels

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Cross-Disciplinary Experiments

| Item / Resource | Category | Function in Cross-Disciplinary Research |

|---|---|---|

| UniProt ID Mapping Tool | Bioinformatics Tool | Maps protein identifiers between databases (e.g., UniProt, Ensembl, RefSeq), a prerequisite for comparing plant proteins with their human counterparts. |

| PubChem Compound Database | Chemical Database | Central hub for finding plant compounds, their structures (SMILES), bioactivities, and links to biomedical literature. |

| ChEBI Ontology | Ontology | Provides standardized chemical nomenclature and classification, crucial for interoperable metadata. |

| RDKit | Cheminformatics Library | Used to compute molecular descriptors, screen for drug-likeness, and handle chemical data from plants. |

| AutoDock Vina | Molecular Docking Software | Predicts how plant-derived small molecules might bind to human protein targets. |

| Plant Metabolite Extract Library | Physical Reagent | A characterized collection of plant extracts or pure compounds for high-throughput screening against human cell assays. |

| OrthoFinder Software | Genomics Tool | Accurately infers orthogroups across plant and animal genomes, identifying evolutionarily related genes. |

| Reactome Pathway Database | Pathway Knowledgebase | Allows side-by-side comparison of plant and human pathways (e.g., immune response, stress signaling). |

Technical Support Center: FAIR Data for Plant Science AI

Troubleshooting Guides & FAQs

Q1: I have uploaded my plant phenotyping image dataset to a repository, but AI researchers report they cannot understand the data structure or parameters. How can I make my dataset more reusable? A: This is a common "R1.1" (Reusable - Meta(data) are released with a clear and accessible data usage license) and "R1.2" (Reusable - Meta(data) are associated with detailed provenance) issue. Follow this protocol:

- Create a structured README file using the template from the RDA's "Data Fabric" Interest Group. Include sections for "Collection Methodology," "Environmental Parameters," "Camera Specifications," and "Data Annotation Rules."

- Embed critical metadata in the file names or a companion `manifest.csv`. Use a controlled vocabulary (e.g., Plant Ontology terms) for traits like `leaf_area` or `stem_height`.

- Register your dataset's schema with a GO-FAIR Implementation Network (IN) like "FAIR Digital Objects" to get a persistent identifier for the data structure itself.

Q2: My institution's repository is not machine-actionable. How can I enable automated discovery and access for my genomic datasets as per the FAIR principles? A: This relates to "A1.1" (Accessible - Protocol is open, free, and universally implementable) and "I1" (Interoperable - (Meta)data use a formal, accessible, shared, and broadly applicable language for knowledge representation). Implement the following:

- Expose metadata via a standard API. Describe each dataset with an RDA-endorsed metadata vocabulary like DCAT 2 or Schema.org. Your repository should return standardized JSON-LD when queried.

- Use persistent identifiers (PIDs) for samples (e.g., IGSN), genes (e.g., ENSEMBL IDs), and publications (DOIs). Link them in your metadata.

- Adopt a plant-specific metadata standard like MIAPPE (Minimum Information About a Plant Phenotyping Experiment), which is now aligned with the FAIRsharing.org registry promoted by GO-FAIR and RDA.
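As a rough picture of what "standardized JSON-LD" might look like, the sketch below builds a Schema.org `Dataset` description. The property names are standard Schema.org terms; the function name, the DOI (a placeholder, not a registered identifier), and the other values are assumptions for illustration.

```python
import json


def dataset_jsonld(name, description, doi, license_url, keywords):
    """Build a minimal Schema.org Dataset description as a JSON-LD dict."""
    return {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "name": name,
        "description": description,
        "identifier": f"https://doi.org/{doi}",
        "license": license_url,
        "keywords": list(keywords),
    }


record = dataset_jsonld(
    name="Tomato resequencing panel (example)",
    description="Variant calls for an example tomato diversity panel.",
    doi="10.1234/placeholder",
    license_url="https://creativecommons.org/licenses/by/4.0/",
    keywords=["Solanum lycopersicum", "genomic variation"],
)
print(json.dumps(record, indent=2))
```

Embedding this block in a dataset landing page, or returning it from the repository API, is what allows search engines and harvesters to index the dataset.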

Q3: When integrating data from multiple plant studies for AI training, I encounter incompatible formats for "drought stress score." How do I resolve this? A: This is an "I2" (Interoperable - (Meta)data use vocabularies that follow the FAIR principles) challenge.

- Map to a common ontology. Do not create your own scale. Map all local scores to the Plant Stress Ontology (PSO) term `PSO:0000001` (drought stress) and use associated terms from the Phenotype And Trait Ontology (PATO) for severity.

- Implement a conversion service. Provide a small script or lookup table as part of your data publication that maps your internal values to the standard terms.

- Consult the RDA "Wheat Data Interoperability" Working Group outputs, which provide concrete models for trait data harmonization.
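A lookup table of the kind described above might look like the following sketch. The two local scales are hypothetical, the severity labels are plain-text placeholders that would be replaced by the appropriate ontology term IDs after checking an ontology browser, and the stress term is the identifier quoted in the answer above.

```python
DROUGHT_STRESS_TERM = "PSO:0000001"  # identifier as quoted in the text

# Hypothetical local scales mapped to shared severity labels.
SEVERITY_LOOKUP = {
    "lab_a_1_to_5": {1: "none", 2: "mild", 3: "moderate", 4: "severe", 5: "severe"},
    "lab_b_text": {"healthy": "none", "wilting": "moderate", "dead": "severe"},
}


def harmonize_score(scale, raw_score):
    """Translate a lab-specific drought score into shared terms."""
    try:
        severity = SEVERITY_LOOKUP[scale][raw_score]
    except KeyError:
        raise ValueError(
            f"no mapping for {raw_score!r} on scale {scale!r}"
        ) from None
    return {"stress": DROUGHT_STRESS_TERM, "severity": severity}


print(harmonize_score("lab_a_1_to_5", 3))
print(harmonize_score("lab_b_text", "wilting"))
```

Failing loudly on unmapped values prevents silently mixing incompatible scores into a training set.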

Q4: The AI model I built works on my lab's data but fails on publicly available datasets. What metadata did I miss in documenting my training data? A: This likely stems from incomplete "R1.3" (Reusable - Meta(data) meet domain-relevant community standards) compliance. Your experimental protocol documentation must include:

- Data Preprocessing Pipeline: Document exact steps (e.g., "images were normalized using the `keras.applications.resnet.preprocess_input` function").

- Lab-specific Conditions: Detail growth chamber light spectra (in nm), soil composition, and watering regimens. Reference environmental ontologies (ENVO).

- Model Bias Statement: Explicitly state the species, genotypes, and conditions your training data represents, acknowledging the limitations for other plants.

Experimental Protocols for FAIR Data Curation

Protocol 1: Implementing FAIR Digital Objects for a Plant Phenomics Dataset

- Assign PIDs: Obtain a DOI for the dataset, IGSNs for plant samples, and ORCIDs for contributors.

- Structure Metadata: Create a metadata file in ISA-Tab format using the MIAPPE checklist. Validate it by following the relevant FAIR Cookbook recipes (the FAIR Cookbook originated in the FAIRplus project and is maintained as an ELIXIR resource).

- Choose a Repository: Select a repository certified by the CoreTrustSeal (advocated by RDA).

- Expose for Machines: Configure your repository endpoint to serialize metadata in JSON-LD using the Bioschemas profile (a GO-FAIR IN).

- Register in an Index: Register your dataset's PID and metadata type in the Data Type Registry IN of GO-FAIR.
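
As a rough illustration of the "Expose for Machines" step, the sketch below builds a schema.org `Dataset` description as JSON-LD, which is the vocabulary that Bioschemas profiles build on. The function name, the contributor, and the ORCID are hypothetical, the DOI is a placeholder, and a real Bioschemas profile may require additional properties.

```python
import json

def dataset_jsonld(name, doi, license_url, creators, keywords):
    """Build a schema.org Dataset description as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "name": name,
        "identifier": f"https://doi.org/{doi}",
        "license": license_url,
        "keywords": keywords,
        "creator": [
            {"@type": "Person", "name": person,
             "identifier": f"https://orcid.org/{orcid}"}
            for person, orcid in creators
        ],
    }

record = dataset_jsonld(
    name="Maize root architecture under drought stress",
    doi="10.5281/zenodo.1234567",                      # placeholder DOI
    license_url="https://creativecommons.org/licenses/by/4.0/",
    creators=[("Jane Doe", "0000-0000-0000-0000")],    # hypothetical contributor
    keywords=["Zea mays", "root phenotyping"],
)
print(json.dumps(record, indent=2))
```

Embedding this JSON in the dataset landing page is one common way to let crawlers and AI pipelines read the same metadata that humans see.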

Protocol 2: Cross-Study Data Harmonization for AI Training

- Identify Target Variables: Define the AI model's required input variables (e.g., `biomass`, `flowering_time`).

- Gather Source Datasets: Retrieve datasets via their PIDs from repositories like EU Dataverse or e!DAL-PGP.

- Extract and Map Metadata: For each dataset, extract phenotype terms. Use the Crop Ontology and Planteome platform to map all terms to a common hierarchy.

- Create a Harmonized Data Matrix: Build a table where rows are observations, columns are harmonized variables, and cells contain standardized values (with units from QUDT ontology).

- Publish the Mapping: Publish the mapping logic (the "transformation recipe") as a separate, citable research object.
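
The harmonization steps above can be sketched with plain dictionaries. The study names, column names, and conversion factors are hypothetical, and the unit suffix in each harmonized column name is only a stand-in for a proper QUDT unit annotation.

```python
# Hypothetical per-study column maps:
# source column -> (harmonized column, factor converting to the target unit).
COLUMN_MAPS = {
    "study_a": {"dry_weight_g": ("biomass_g", 1.0),
                "dtf": ("flowering_time_days", 1.0)},
    "study_b": {"biomass_mg": ("biomass_g", 0.001),
                "days_to_flower": ("flowering_time_days", 1.0)},
}

def harmonize(study, rows):
    """Rename columns and convert units so every study shares one schema."""
    column_map = COLUMN_MAPS[study]
    harmonized = []
    for row in rows:
        record = {"study": study}
        for source, (target, factor) in column_map.items():
            if source in row:
                record[target] = float(row[source]) * factor
        harmonized.append(record)
    return harmonized

matrix = (
    harmonize("study_a", [{"dry_weight_g": "12.5", "dtf": "61"}])
    + harmonize("study_b", [{"biomass_mg": "9800", "days_to_flower": "58"}])
)
for record in matrix:
    print(record)
```

Because `COLUMN_MAPS` holds the entire transformation, that one structure is what would be published as the citable "transformation recipe".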

Visualizations

Title: FAIR Data Pipeline for Plant AI Research

Title: Role of Organizations in Plant FAIR Data Ecosystem

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in FAIR Plant Science |

|---|---|

| MIAPPE Checklist | The minimum metadata standard to describe a plant phenotyping experiment. Ensures Reusability (R1). |

| Crop Ontology (CO) / Planteome | Provides controlled, shared vocabularies for plant traits, growth stages, and experimental conditions. Ensures Interoperability (I2). |

| ISA-Tab Framework | A widely used file format to structure investigation, study, and assay metadata. Works with MIAPPE. |

| FAIRsharing.org Registry | A curated resource to discover and select appropriate standards, databases, and policies (collaborative output of RDA, GO-FAIR, others). |

| Data Type Registry (DTR) IN | A GO-FAIR initiative to register machine-readable data types, enabling automated interpretation of data structures. |

| e!DAL-PGP Repository | A plant-genomics focused repository designed to implement FAIR principles for seed and sequence data. |

| FAIR Cookbook | A hands-on, technical resource with "recipes" to implement FAIR, developed by the FAIRplus project and maintained as an ELIXIR resource. |

A Step-by-Step Guide to Making Your Plant Data AI-Ready and FAIR-Compliant

FAQs & Troubleshooting Guide

Q1: I'm submitting genomic data for a tomato experiment to a public repository. Which ontologies do I need to annotate my samples with?

A: You will likely need to use a combination of ontologies to make your data FAIR. At a minimum, you should use:

- Plant Ontology (PO): To describe the plant structure (e.g., `leaf`, `fruit`) and development stage (e.g., `ripe fruit stage`) sampled.

- Plant Trait Ontology (TO): To describe the measured phenotypes (e.g., `fruit mass`, `soluble solids content`).

- Environment Ontology (EO) or CHEBI: To describe treatments (e.g., `drought stress`, `application of abscisic acid`).

- NCBI Taxonomy: To specify the organism (`Solanum lycopersicum`).

The repository may also require specific sample metadata schemas like MIAPPE or the EBI's checklists.

Q2: My collaborators and I keep using different terms for the same tissue (e.g., "seed," "kernel," "caryopsis"). How can we standardize this?

A: This is a common issue that ontologies are designed to solve. You should all agree to use the standardized term and identifier from the Plant Ontology (PO). In this case, for a mature maize seed, you would use PO:0009010 with the label "caryopsis." This ensures unambiguous data integration and searchability across datasets.

Q3: I found a plant phenotype ontology (TO) term, but it's too broad for my precise measurement. What should I do?

A: First, search the TO thoroughly to see if a more specific child term exists. If not, you have two options consistent with FAIR principles:

- Use the most specific existing term and add your precise method as a free-text comment in the `observation unit` description.

- Propose a new term to the TO consortium. This involves contacting the maintainers via their GitHub page with a clear definition, proposed parent term, and justification. This enriches the ontology for the entire community.

Q4: How do ontologies specifically benefit AI/ML model training in plant science?

A: Ontologies provide critical structure for both input features and output labels.

- Feature Engineering: They allow the aggregation of heterogeneous data (e.g., gene expression from "leaf" from multiple studies) into coherent training sets.

- Label Standardization: Models predicting traits or diseases can be trained on datasets unified by TO and PO terms, improving model generalizability and performance across different data sources.

- Knowledge Graphs: Ontologies form the backbone of knowledge graphs that can be used for hypothesis generation or to provide explainable context for model predictions.

Experimental Protocol: Annotating a Transcriptomics Dataset with Ontologies

Objective: To prepare an RNA-seq dataset from drought-stressed Arabidopsis thaliana roots for submission to a public repository (e.g., ArrayExpress, GEO) in accordance with FAIR principles.

Materials:

- RNA-seq data (FASTQ files, processed counts)

- Sample metadata spreadsheet

- Access to ontology browsers (OBO Foundry, Ontobee)

Methodology:

- Identify Mandatory Metadata: Consult the repository's submission guidelines (e.g., EBI's annotated ISA-Tab format) for required fields.

- Map Sample Descriptors to Ontology Terms:

- Organism: Use NCBI Taxonomy ID `3702` (Arabidopsis thaliana).

- Organ/Tissue: Use PO term `PO:0009008` (root). Specify developmental stage with PO term `PO:0007520` (adult plant stage).

- Experimental Condition/Treatment: Use Environment Ontology (EO) term `EO:0007403` (drought stress). For a chemical treatment, use a CHEBI ID.

- Annotate Measured Variables: If submitting phenotypic data alongside transcriptomics, describe the trait using the TO (e.g., `TO:0000366` - root length).

- Populate Metadata Template: Enter the ontology term IDs (e.g., `PO:0009008`) and their labels (e.g., `root`) into the designated columns of the repository's template.

- Validation: Use any validator provided by the repository to check that all term IDs are resolvable and correctly formatted.
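
Before using a repository validator, a quick local format check can catch typos in term IDs. The sketch below is an assumption-laden pre-check, not a replacement for the repository's tool: it tests only the `PREFIX:digits` shape and a hypothetical list of allowed prefixes, and it does not confirm that an ID resolves or that its label is correct.

```python
import re

# Prefixes used in this protocol; extend as needed (hypothetical allow-list).
ALLOWED_PREFIXES = {"PO", "TO", "EO", "PATO", "CHEBI"}

def is_well_formed(term_id):
    """True if the ID looks like PREFIX:digits with an allowed prefix."""
    match = re.fullmatch(r"([A-Za-z]+):(\d+)", term_id.strip())
    return bool(match) and match.group(1) in ALLOWED_PREFIXES

def find_malformed(rows, id_columns):
    """Return (row_index, column, value) for every malformed term ID."""
    problems = []
    for index, row in enumerate(rows):
        for column in id_columns:
            value = row.get(column, "")
            if not is_well_formed(value):
                problems.append((index, column, value))
    return problems

samples = [
    {"organ_id": "PO:0009008", "trait_id": "TO:0000366"},
    {"organ_id": "PO0009008", "trait_id": "TO:0000366"},  # missing colon
]
print(find_malformed(samples, ["organ_id", "trait_id"]))
```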

Visualization

Ontology-Driven FAIR Data Workflow

Plant Ontology (PO) Hierarchical Structure

| Resource Name | Acronym | Primary Use Case | Access URL |

|---|---|---|---|

| Plant Ontology | PO | Describing plant anatomy & development stages. | planteome.org |

| Plant Trait Ontology | TO | Standardizing names & definitions of observable traits. | planteome.org |

| Chemical Entities of Biological Interest | CHEBI | Describing molecular entities, compounds, treatments. | ebi.ac.uk/chebi |

| Environment Ontology | EO | Describing environmental conditions, treatments, & exposures. | environmentontology.org |

| Minimum Information About a Plant Phenotyping Experiment | MIAPPE | The metadata checklist & data standard for plant phenotyping. | miappe.org |

The Scientist's Toolkit: Research Reagent Solutions for Ontology Annotation

| Item | Function in Metadata Annotation |

|---|---|

| Ontology Browser (e.g., Ontobee, OLS) | Web tool to search, browse, and find IDs for ontology terms. Essential for looking up correct PO, TO, CHEBI terms. |

| ISA-Tab Creator Tools (e.g., ISAcreator) | Desktop software to create and manage investigation/study/assay metadata files in the standardized ISA-Tab format, which supports ontology annotation. |

| Metadata Validation Service (e.g., EBI's MetaboLights validator) | Online tool to check metadata files for compliance with repository rules and ontology term resolution before submission. |

| Controlled Vocabulary Manager (e.g., Curation Manager, ezCV) | Local or web-based systems to maintain and share project-specific lists of approved ontology terms among a research team. |

| FAIR Data Management Plan Template | A structured document template (e.g., from DMPTool) to pre-plan ontology usage, metadata standards, and repositories for a grant or project lifecycle. |

FAQs & Troubleshooting

Q1: I’ve uploaded my dataset to our institutional repository, but I only see a temporary URL. How do I get a proper DOI? A: Most repositories require you to finalize the submission and explicitly publish the record to mint a DOI. Ensure all mandatory metadata fields (creator, title, publisher, publication year, resource type) are completed. Look for a "Publish" or "Finalize" button. If the item is in "draft" or "review" state, the DOI will not be created.

Q2: My dataset contains multiple files, including raw sequencing data and processed results. Should I assign one DOI to the entire collection or separate DOIs to each component? A: Best practice for FAIR data is to assign a DOI to the collection as a whole to ensure citability of the entire research output. Use the repository's structure (e.g., a "collection" or "project" level) to group related files. Individual, significantly reusable components (e.g., a key sample manifest) can have separate PIDs if they are cited independently.

Q3: I received an "Invalid Checksum" error when trying to download a dataset via its ARK. What does this mean?

A: This error indicates that the file you received does not match the checksum recorded at deposit: it has been altered or corrupted in storage or during transfer, breaking the integrity promise of the PID. Retry the download first. If the mismatch persists, contact the maintaining institution (identified by the Name Assigning Authority Number, the XXXX in ark:/XXXX/...) to report the issue. For your own data, ensure you use repository services that provide fixity checks (like SHA-256 hashing) upon upload.
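
A fixity check of the kind mentioned above can be reproduced locally with the Python standard library. This is a minimal sketch: the function names are illustrative, and the temporary file stands in for a downloaded dataset.

```python
import hashlib
import os
import tempfile

def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large datasets fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify(path, expected_hex):
    """Compare the file's digest with the checksum recorded at deposit."""
    return sha256_of(path) == expected_hex.strip().lower()

# Demonstration with a temporary file standing in for a downloaded dataset.
payload = b"example image bytes"
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(payload)
recorded = hashlib.sha256(payload).hexdigest()
intact = verify(tmp.name, recorded)
tampered = verify(tmp.name, "0" * 64)
os.remove(tmp.name)
print(intact, tampered)
```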

Q4: How do I choose between a DOI and an ARK for my plant phenotyping images? A: The choice is often made by your repository or data center. DOIs are universally used for publication and citation, strongly supported by publishers. ARKs offer flexible resolution to metadata, data, or other states. For AI-ready datasets, if your platform uses ARKs for granular object management (e.g., individual images), use ARKs, but also consider minting a DOI for the versioned dataset release cited in papers.

Q5: Can I assign a PID to a physical plant sample? How is it linked to the digital data?

A: Yes, using a Persistent Identifier like an IGSN (International Generic Sample Number, formerly International Geo Sample Number) or a custom URI. The physical sample's PID is recorded as a source or subject in the metadata of the digital dataset (e.g., genomic data). When the sample record also lists the dataset's PID, the link becomes bidirectional, making the data FAIR with respect to its provenance.

Q6: I need to correct metadata (e.g., a misspelled species name) after my DOI has gone live. Will this break the link? A: No, but you must follow proper versioning protocol. Do not delete the old record. Most DOI services allow you to create a new version of the record. The DOI will resolve to the latest version, but the prior version remains accessible via a separate timestamped identifier. The version relationship is maintained in the metadata, preserving citation integrity.

Q7: What is the typical cost and time required to obtain a DOI for a dataset? A: Costs and times are highly variable. See the table below for a comparison.

Table 1: PID Service Comparison for Plant Science Data

| Service Type | Example Providers | Typical Cost (Dataset) | Minting Time | Best For |

|---|---|---|---|---|

| Generalist Repository | Zenodo, Figshare | Free | Near-instant | General plant science datasets, project archives. |

| Discipline-Specific Repo | Phytozome, EBI-ENA, TreeBASE | Often free for academics; may have submission charges. | Hours to days | Genomic, phylogenetic data; enhances discoverability in field. |

| Institutional Repository | University library-based systems (e.g., DSpace) | Free for members; may have size quotas. | Days (may involve review) | Theses, long-term preservation of institutional research output. |

| Commercial DOI Registrar | DataCite via member organizations (e.g., CDL) | Variable; often ~$1-5 per DOI via an annual membership. | Near-instant | Large consortia or labs minting high volumes of PIDs. |

Experimental Protocol: Minting a DOI via Zenodo for a Plant Phenomics Dataset

Objective: To publish a dataset of annotated maize root system images in a FAIR manner by obtaining a persistent, citable DOI.

Materials & Reagent Solutions:

| Item | Function |

|---|---|

| Zenodo.org account | Platform for dataset deposition and DOI minting. |

| Dataset files | Compressed folder (.zip) containing image files (.tiff) and a README.txt with provenance. |

| Metadata spreadsheet | Pre-prepared .csv or .xlsx file with standardized column headers (e.g., species, treatment, date). |

| ORCID iD | Persistent identifier for the researcher, to link unambiguously to the dataset. |

| Checksum tool (e.g., md5sum) | To generate file integrity checksums for inclusion in metadata. |

Methodology:

- Prepare Dataset:

- Organize all image files and documentation. Create a comprehensive `README.txt` describing the experiment, variables, file naming convention, and any licenses.

- Generate a checksum for the final data package: `md5sum dataset_v1.zip > dataset_v1.zip.md5`.

- Log in & Initiate Upload:

- Log into Zenodo (link your ORCID for credibility).

- Click "Upload" and drag/drop your dataset `.zip` file and the `.md5` checksum file.

- Enter Metadata:

- Upload Type: Select "Dataset".

- Basic Info: Provide a descriptive title (e.g., "Maize root architecture under drought stress - Image set 2023"). Add all creators with affiliations and ORCIDs.

- Description: Use a structured abstract. Include: experimental plant lines, growth conditions, imaging technology, and data processing steps.

- Keywords: Add relevant terms (e.g., "Zea mays", "root phenotyping", "computer vision", "drought stress").

- Related & Funding Information: Link to grants (via FundRef) and any associated publications.

- Licensing: Select an open license (e.g., CC-BY 4.0) to define reuse rights.

- Access: Choose "Open" access.

- Publish & Mint DOI:

- Click "Publish". Zenodo will assign a DOI (e.g., `10.5281/zenodo.1234567`).

- The DOI will be reserved immediately and become active (resolvable) within minutes.

- Post-Minting:

- Download the generated `datacite.xml` metadata file for your records.

- Cite the dataset in your manuscript using the provided DOI citation text.
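
For labs that script their deposits rather than using the web form, the metadata entered in the steps above could be assembled as a JSON payload. The sketch below only builds and prints the payload and sends nothing. The field names reflect one reading of Zenodo's deposition metadata and should be checked against the current Zenodo API documentation. The creator, affiliation, and ORCID are hypothetical.

```python
import json

def zenodo_metadata(title, description, creators, keywords):
    """Assemble deposit metadata mirroring the web-form fields in this protocol."""
    return {
        "metadata": {
            "upload_type": "dataset",   # "Upload Type: Dataset"
            "title": title,
            "description": description,
            "creators": creators,
            "keywords": keywords,
            "license": "cc-by-4.0",     # assumed identifier for CC-BY 4.0
            "access_right": "open",     # "Access: Open"
        }
    }

payload = zenodo_metadata(
    title="Maize root architecture under drought stress - Image set 2023",
    description="Plant lines, growth conditions, imaging technology, processing steps.",
    creators=[{"name": "Doe, Jane", "affiliation": "Example University",
               "orcid": "0000-0000-0000-0000"}],  # hypothetical creator
    keywords=["Zea mays", "root phenotyping", "computer vision", "drought stress"],
)
print(json.dumps(payload, indent=2))
```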

Title: PID Assignment Workflow for Research Data

The Scientist's Toolkit: Essential Research Reagent Solutions for PID-Related Experiments

| Tool / Reagent | Function in PID & FAIR Data Context |

|---|---|

| DataCite Content Resolver | A service to resolve a DOI to its metadata in various formats (JSON, XML), crucial for machine readability. |

| FAIR-Checker Tools (e.g., F-UJI) | Automated tools to assess the FAIRness of a dataset based on its PID and metadata. |

| GitHub with Zenodo Integration | Enables versioned code to receive a DOI upon release, linking AI models to training data PIDs. |

| Sample ID Registry (e.g., IGSN) | Service to mint persistent unique identifiers for physical plant or soil samples. |

| Metadata Schema (e.g., MIAPPE, Darwin Core) | Standardized templates to structure metadata, making data linked to a PID fully interoperable. |

| OLS (Ontology Lookup Service) | Provides unique URIs for ontological terms (e.g., plant traits, diseases) to use in linked metadata. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: I am trying to submit my plant phenotyping image dataset to a public repository, but my submission was rejected due to "non-compliant metadata." What are the most common metadata standards I should use?

A: The rejection likely stems from missing required fields or using non-standard terms. Adherence to community-agreed standards is critical for FAIR interoperability.

- Primary Standard: Use MIAPPE (Minimum Information About a Plant Phenotyping Experiment). It is the cornerstone standard for describing plant phenotyping studies.

- Supporting Vocabularies:

- Plant Ontology (PO): Describe plant structures (e.g., "leaf," "root") and growth stages.

- Phenotype And Trait Ontology (PATO): Describe the measured qualities (e.g., "height," "chlorophyll content").

- Environment Ontology (EO): Describe environmental conditions (e.g., "drought stress," "high nitrogen treatment").

- Actionable Protocol: Re-annotate your dataset. For each image or data file, ensure your metadata includes, at minimum: a unique plant ID, species (using a term from NCBI Taxonomy), the observed plant structure (PO term), the measured trait (PATO term), the experimental condition (EO term), and the date of observation. Most repositories provide a MIAPPE-compliant template.
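
The minimum-field check in the protocol above can be automated before resubmission. In this sketch the field names are hypothetical stand-ins for the corresponding columns of a MIAPPE-compliant template, and the date check assumes ISO 8601 dates.

```python
from datetime import date

# Hypothetical column names for the six minimum fields listed above.
REQUIRED = ("plant_id", "species_taxon", "structure_po",
            "trait_pato", "condition_eo", "observation_date")

def validate_record(record):
    """Return a list of problems; an empty list means the record passes."""
    problems = [
        f"missing {name}" for name in REQUIRED
        if record.get(name) is None or not str(record[name]).strip()
    ]
    raw_date = record.get("observation_date")
    if raw_date:
        try:
            date.fromisoformat(str(raw_date))
        except ValueError:
            problems.append("observation_date is not ISO 8601 (YYYY-MM-DD)")
    return problems

good = {"plant_id": "P-001", "species_taxon": "NCBI:txid3702",
        "structure_po": "PO:0009025", "trait_pato": "PATO:0000584",
        "condition_eo": "EO:0007403", "observation_date": "2023-06-01"}
bad = dict(good, trait_pato="", observation_date="03/04/2023")
print(validate_record(good))
print(validate_record(bad))
```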

Q2: My AI model trained on gene expression data from one plant species performs poorly when tested on data from a related species. Could this be a data interoperability issue?

A: Yes, this is a classic interoperability challenge. The issue often lies in inconsistent gene identifiers and a lack of functional annotation mapping.

- Root Cause: Data from different species or even different studies often use different database identifiers (e.g., TAIR IDs for Arabidopsis, ZmIDs for maize) or generic labels (e.g., "WRKY transcription factor 1") that cannot be computationally mapped.

- Solution Protocol:

- Map to Orthologs: Use a tool like OrthoFinder to identify orthologous gene groups between your two species.

- Use Universal Identifiers: Map all gene IDs to a cross-database identifier system such as GenBank accession numbers or Ensembl Plants gene IDs, and cite the source datasets by DOI.

- Leverage Functional Annotations: Re-annotate both datasets using a common functional ontology like the Gene Ontology (GO). Training on GO term abundances rather than raw gene IDs can improve model transferability.

- Key Table: Common Gene Identifier Standards

| Species | Common Primary ID | Recommended Universal Bridge |

|---|---|---|

| Arabidopsis thaliana | TAIR Locus ID (e.g., AT1G01010) | Ensembl Plant Gene ID / GenBank Accession |

| Oryza sativa (Rice) | MSU LOC_Os ID / RAP-DB ID | GenBank Accession / IRGSP-1.0 Gene Symbol |

| Zea mays (Maize) | MaizeGDB Gene ID (e.g., Zm00001d000100) | GenBank Accession / RefGen_v4 Gene Model |

| Solanum lycopersicum (Tomato) | SGN ITAG ID (e.g., Solyc01g000100) | GenBank Accession |
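
When datasets arrive with mixed identifiers, a first step is to detect which naming scheme each ID follows so it can be routed to the right mapping resource. The patterns below are inferred from the example IDs in the table and are assumptions. Real identifiers may carry version or transcript suffixes that these patterns reject.

```python
import re

# Patterns inferred from the example IDs in the table above (assumptions).
ID_PATTERNS = {
    "TAIR": re.compile(r"AT[1-5CM]G\d{5}"),       # e.g., AT1G01010
    "MaizeGDB": re.compile(r"Zm\d{5}[a-z]\d{6}"),  # e.g., Zm00001d000100
    "SGN_ITAG": re.compile(r"Solyc\d{2}g\d{6}"),   # e.g., Solyc01g000100
    "MSU_rice": re.compile(r"LOC_Os\d{2}g\d{5}"),  # e.g., LOC_Os01g01010
}

def classify_gene_id(gene_id):
    """Return the name of the matching ID scheme, or None if unrecognized."""
    for scheme, pattern in ID_PATTERNS.items():
        if pattern.fullmatch(gene_id):
            return scheme
    return None

for example in ["AT1G01010", "Zm00001d000100", "Solyc01g000100", "WRKY1"]:
    print(example, classify_gene_id(example))
```

IDs that return `None`, such as free-text gene names, are the ones that need manual curation before any ortholog mapping.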

Q3: When merging metabolomics datasets from different labs for my AI analysis, I get meaningless results. The compounds seem to be the same, but the data doesn't align. What went wrong?

A: This is frequently caused by a lack of standard reporting in metabolomics. Differences in compound identification confidence levels and measurement units render data non-interoperable.

- Problem Analysis: One lab may report a compound identified by an accurate mass and retention time match (Level 2 confidence), while another reports it as a structurally verified standard (Level 1). Merging these directly introduces error.

- Standardization Protocol:

- Adopt Reporting Standards: Ensure each dataset follows the Metabolomics Standards Initiative (MSI) reporting guidelines. Demand clear annotation levels for every compound.

- Use Chemical Ontologies: Map all compound names to identifiers from PubChem or ChEBI. Never rely on common names alone (e.g., use "CHEBI:18367" for "abscisic acid").

- Unit Standardization: Convert all intensity or concentration values to a common unit (e.g., µmol per gram fresh weight) before analysis.

- Key Table: MSI Compound Identification Confidence Levels

| Level | Description | Example Identifier Strategy | Suitability for Merging |

|---|---|---|---|

| 1 | Confidently Identified | Verified by pure chemical standard (RT, MS/MS) | High – Can be directly merged. |

| 2 | Putatively Annotated | Characteristic MS/MS spectra or accurate mass + RT | Medium – Merge with caution, by compound class. |

| 3 | Putatively Characterized | Spectral match to a compound class (e.g., flavonoid) | Low – Merge only at the class level. |

| 4 | Unknown | Distinguished by mass and RT only | Not suitable for cross-study merging. |
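
The merging guidance in the table can be encoded as a small policy function. This is a sketch under stated assumptions: the feature dictionaries, their field names, and the compound IDs are hypothetical, and Level 2 features are merged at class level as the cautious reading of "merge with caution, by compound class".

```python
# Merge policy transcribed from the table above: MSI level -> merge granularity.
MERGE_POLICY = {1: "compound", 2: "class", 3: "class", 4: None}

def merge_key(feature):
    """Return the key under which a feature may be merged, or None to exclude it."""
    granularity = MERGE_POLICY.get(feature["msi_level"])
    if granularity == "compound":
        return ("compound", feature["compound_id"])
    if granularity == "class":
        return ("class", feature["compound_class"])
    return None

features = [  # hypothetical features from two labs
    {"msi_level": 1, "compound_id": "cmpd-001", "compound_class": "flavonoid"},
    {"msi_level": 2, "compound_id": "cmpd-002", "compound_class": "flavonoid"},
    {"msi_level": 4, "compound_id": None, "compound_class": None},
]
for feature in features:
    print(feature["msi_level"], merge_key(feature))
```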

The Scientist's Toolkit: Research Reagent Solutions for Interoperable Data Generation

| Item | Function in Standardization |

|---|---|

| MIAPPE Compliance Checklist | A structured form or digital tool to ensure all mandatory metadata fields are populated before experiment completion. |

| Controlled Vocabulary Spreadsheets | Pre-formatted lists of terms from PO, PATO, EO, and GO for copy-paste into experimental logs, ensuring term consistency. |

| Persistent Identifier (PID) Service | Use of services like DataCite (DOIs) or ePIC (Handles) to mint persistent identifiers for datasets, samples, and instruments. |

| Standard Reference Materials | Biological (e.g., control plant lines) or chemical (e.g., internal standard mixes for metabolomics) used to calibrate measurements across labs. |

| Metadata Harvester Software | Tools like BreedBASE or ISAcreator that capture experimental metadata in standardized formats (ISA-Tab) directly from researchers. |

Experimental Protocol: Conducting an Interoperable Plant Stress Phenotyping Experiment

Title: Standardized Workflow for Multi-Site Drought Phenotyping AI Readiness.

Objective: To generate a FAIR-compliant dataset of plant drought response suitable for federated AI analysis.

Methodology:

- Pre-Experiment Registration: Register the study in a global directory (e.g., FAIRsharing.org) using a persistent identifier.

- Standardized Growth Conditions: Document environment using EO terms. Use a common reference soil type and pot size. Apply drought stress defined by a specific soil water potential threshold (e.g., -0.5 MPa).

- Phenotyping with Controlled Vocabularies: Capture images daily. Annotate each image set with: `species` (NCBI:txid3702), `plant growth stage` (PO:0001056 - vegetative phase), `observed structure` (PO:0009025 - leaf), `measured trait` (PATO:0000584 - area; PATO:0000324 - color).

- Data Output Standardization: Store images in a standard format (e.g., PNG). Export extracted features (area, color indices) in a tabular CSV file with column headers mapped to ontology terms.

- Metadata Compilation: Populate a MIAPPE checklist. Link the metadata file to the data files using their unique filenames or PIDs.

- Repository Submission: Submit the data package (metadata + raw data + processed features) to a dedicated repository like e!DAL-PGP or CyVerse Data Commons, which enforces FAIR standards.

Visualization: FAIR Data Interoperability Workflow

Diagram Title: Workflow for Creating Interoperable FAIR Plant Science Data

Visualization: Gene Data Interoperability Challenge & Solution

Diagram Title: Solving Gene Data Interoperability for Cross-Species AI

FAQs & Troubleshooting Guides

Q1: What is the primary difference between a generalist and a plant-specific repository, and how do I choose? A: Generalist repositories accept data from any discipline, while plant-specific repositories are tailored with specialized metadata standards and ontologies for plant biology. Use the following table to guide your choice:

| Repository Type | Best For | Examples | Key Consideration |

|---|---|---|---|

| Generalist / Broad | Multidisciplinary projects, data linked to non-plant studies, or when no suitable domain repository exists. | Zenodo, Figshare, Dryad | Ensure they support community metadata standards (e.g., MIAPPE). |

| Plant-Specific | Most plant phenotyping, genomics, metabolomics data. Enforces domain standards for maximal interoperability. | e!DAL-PGP, PlantGenIE, PhytoMine | Check for required ontologies (e.g., Plant Ontology, Trait Ontology). |

| Omics-Specific | Large-scale sequence, expression, or metabolomic data. Often mandated by journals. | NCBI SRA, ENA, MetaboLights | Submission can be complex; plan for annotation time. |

| Model Organism | Data for species like Arabidopsis thaliana or Solanum lycopersicum. | Araport, Sol Genomics Network | Offers deep integration with species-specific tools and gene networks. |

Q2: I've uploaded my RNA-seq data to the Sequence Read Archive (SRA), but reviewers say it's not FAIR. What went wrong? A: Depositing raw data alone is insufficient. The issue is likely missing experimental metadata and processed data. Follow this protocol:

- Experimental Protocol:

- Prepare Raw Data: Upload FASTQ files to SRA via the Submission Portal. Link to the BioProject (e.g., PRJNA123456).

- Prepare Processed Data: Deposit processed files (e.g., normalized gene count matrix, differential expression results) in a complementary repository like Figshare or Zenodo. Use a stable format (e.g., .csv, .tsv).

- Create Comprehensive Metadata: Use the MIAPPE (Minimum Information About a Plant Phenotyping Experiment) standard to describe the study, growth conditions, and sampling protocols. For genomics, use MINSEQE.

- Link All Components: In the metadata for both deposits, use persistent identifiers (DOIs, BioProject ID) to cross-reference the raw data in SRA, the processed data in Figshare, and the associated publication.

Q3: How do I handle sensitive data, like the precise location of endangered plant species, while adhering to FAIR principles? A: FAIR does not mean "Open." You can use restricted access repositories. Choose a platform that allows embargoes and managed access.

- Troubleshooting Guide:

- Issue: Data contains sensitive Geographical Information (GPS coordinates).

- Action 1: Generalize location data in the public metadata (e.g., to country or state level).

- Action 2: Deposit the precise data in a controlled-access repository, such as a system that supports the GA4GH Data Use Ontology (DUO) or the European Genome-phenome Archive (EGA).

- Action 3: Clearly state in the public metadata the terms for accessing the sensitive data (via a Data Access Agreement).
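
Actions 1 and 3 can be combined in a small step that derives the public metadata record from the full one. The field names and the access note below are hypothetical. The sketch simply drops precise location fields and keeps the coarse ones.

```python
# Fields considered too precise for public release (hypothetical names).
SENSITIVE_KEYS = {"latitude", "longitude", "locality"}

def public_view(record, access_note):
    """Copy a record without precise location fields and add access terms."""
    public = {key: value for key, value in record.items()
              if key not in SENSITIVE_KEYS}
    public["access_conditions"] = access_note
    return public

full_record = {
    "species": "Example endangered species",
    "country": "Example Country",
    "state": "Example State",
    "latitude": 12.345678,
    "longitude": -98.765432,
    "locality": "north slope, plot 7",
}
note = "Precise coordinates available under a Data Access Agreement."
print(public_view(full_record, note))
```

The full record stays in the controlled-access deposit. Only the output of `public_view` goes into the openly indexed metadata.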

The Scientist's Toolkit: Research Reagent Solutions for Plant Omics Data Generation

| Item | Function in Data Generation |

|---|---|

| RNeasy Plant Mini Kit (Qiagen) | Extracts high-quality, intact total RNA from a wide range of plant tissues for transcriptomics. |

| DNeasy Plant Pro Kit (Qiagen) | Provides genomic DNA suitable for high-throughput sequencing (e.g., whole-genome resequencing). |

| Phenotyping Imaging Stations (e.g., LemnaTec) | Automated systems for capturing high-resolution, standardized plant images for morphological trait extraction. |

| Plant Ontology (PO) & Trait Ontology (TO) | Controlled vocabularies (ontologies) used to annotate metadata consistently, enabling data integration and search. |

| MIAPPE Checklist | The standardized metadata checklist ensuring all critical experimental context is recorded and shared. |

Diagram 1: FAIR Plant Data Deposition Workflow

Diagram 2: Linking Data Across Repositories

Troubleshooting Guides and FAQs

Q1: When attempting to reuse a dataset for AI model training, I encounter a license that states "NoAI" or "NoMachine-Learning." What does this mean, and what are my options?

A1: A "NoAI" license explicitly prohibits the use of the data for training artificial intelligence systems. This is a specific restriction beyond traditional copyright.

- Actionable Steps:

- Cease Use: Immediately stop using the data for AI training.

- Seek Clarification: Contact the data licensor (e.g., the repository or principal investigator) to understand the scope and rationale. Negotiation may be possible.

- Find Alternative Data: Search for datasets with licenses that permit AI/ML training, such as Creative Commons licenses (CC-BY, CC-BY-SA, CC0) or custom licenses that explicitly grant AI use rights.

- Consider Fair Use/Dealing: Consult with your institution's legal counsel. While sometimes applicable, relying on fair use is a complex legal determination and not a substitute for clear licensing.

Q2: I want to release my plant phenotyping image dataset for broad AI research use. What is the recommended license to ensure FAIR (Findable, Accessible, Interoperable, Reusable) principles, specifically for Reusability (R1.1)?

A2: To maximize legal Reusability for AI, apply a permissive, standard, and machine-readable license.

- Primary Recommendation: Creative Commons Attribution 4.0 International (CC-BY-4.0). This allows anyone to redistribute, adapt, and build upon the material, including for commercial AI training, as long as appropriate credit is given.

- For Public Domain Dedication: Use CC0. This waives all rights, placing the data in the public domain to the fullest extent possible, removing all legal barriers to reuse.

- Critical Action: Document the license clearly in the dataset's metadata (e.g., in the README file and repository license field). Do not create custom license text without legal consultation.

Q3: How do I properly attribute a dataset used to train my plant disease prediction model, as required by common open licenses?

A3: Proper attribution is a key license condition. Include it in your model's documentation and publications.

- Required Elements (The "TASL" framework):

- Title: Name of the dataset.

- Author: Creator or hosting institution.

- Source: URL or persistent identifier (e.g., DOI).

- License: Type of license (e.g., CC-BY-4.0).
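
A TASL attribution line can be generated consistently from four fields. The function name and the output wording are illustrative, and the dataset details are placeholders.

```python
def tasl_attribution(title, author, source, license_id):
    """Format Title, Author, Source, and License into one attribution line."""
    return f'"{title}" by {author}, {source}, licensed under {license_id}.'

line = tasl_attribution(
    title="Maize root architecture under drought stress",
    author="Example Plant Lab",                       # hypothetical creator
    source="https://doi.org/10.5281/zenodo.1234567",  # placeholder DOI
    license_id="CC-BY-4.0",
)
print(line)
```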

Q4: I am combining multiple plant genomics datasets with different licenses. What are the compatibility rules for creating a derivative training corpus?

A4: License compatibility is a critical governance issue.

- Core Rule: The resulting derivative work must comply with the most restrictive license of the constituent datasets.

- Common Scenario: You cannot combine a dataset under CC-BY-SA (ShareAlike) with one under a strict "No Derivatives" license, as the ShareAlike clause requires the combined work to be licensed under identical terms, which the "No Derivatives" license forbids.

- Protocol for Data Fusion:

- List all source datasets and their specific licenses.

- Identify any "copyleft" (e.g., SA) or restrictive (e.g., ND, NoAI) clauses.

- Create a compatibility matrix. Use a table to track this.

Table: Common License Compatibility for AI Training Data

| License | Allows Commercial AI Training? | Allows Derivative Datasets? | Key Restriction (Incompatible With) |

|---|---|---|---|

| CC0 / Public Domain | Yes | Yes | None. |

| CC-BY-4.0 | Yes | Yes | Must provide attribution. |

| CC-BY-SA-4.0 | Yes | Yes | Derivative dataset must be licensed under CC-BY-SA. |

| Custom, "Academic Use Only" | No | Often No | Commercial licenses. |

| Custom, "NoAI" / "NoML" | No | No | Any AI training purpose. |

| ODC-BY | Yes | Yes | Similar to CC-BY. |

| ODbL | Yes | Yes | Similar to CC-BY-SA (ShareAlike). |
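
The compatibility matrix can be turned into a first-pass automated check. This is a deliberately conservative sketch and not legal advice. The properties are transcribed from the table, the `Custom-NoAI` key is hypothetical, and any mix of two different ShareAlike-style licenses is flagged for manual review even where they might be reconcilable.

```python
# Simplified license properties transcribed from the table above.
# Keys are SPDX identifiers where one exists; "Custom-NoAI" is hypothetical.
LICENSES = {
    "CC0-1.0":      {"ai_training": True,  "derivatives": True,  "share_alike": False},
    "CC-BY-4.0":    {"ai_training": True,  "derivatives": True,  "share_alike": False},
    "CC-BY-SA-4.0": {"ai_training": True,  "derivatives": True,  "share_alike": True},
    "ODC-By-1.0":   {"ai_training": True,  "derivatives": True,  "share_alike": False},
    "ODbL-1.0":     {"ai_training": True,  "derivatives": True,  "share_alike": True},
    "Custom-NoAI":  {"ai_training": False, "derivatives": False, "share_alike": False},
}

def assess_corpus(license_ids):
    """Return (ok, notes) for a corpus built from the given source licenses."""
    ok, notes = True, []
    for license_id in license_ids:
        props = LICENSES.get(license_id)
        if props is None:
            ok = False
            notes.append(f"{license_id}: unknown license, needs manual review")
        elif not (props["ai_training"] and props["derivatives"]):
            ok = False
            notes.append(f"{license_id}: blocks AI training or derivative datasets")
    share_alike = sorted({lid for lid in license_ids
                          if LICENSES.get(lid, {}).get("share_alike")})
    if len(share_alike) > 1:
        ok = False
        notes.append("conflicting ShareAlike terms: " + ", ".join(share_alike))
    elif share_alike:
        notes.append(f"combined corpus must be released under {share_alike[0]}")
    return ok, notes

print(assess_corpus(["CC0-1.0", "CC-BY-SA-4.0"]))
print(assess_corpus(["CC-BY-4.0", "Custom-NoAI"]))
```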

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Resources for Licensing AI-Ready Plant Science Data

| Item / Resource | Function & Relevance to Licensing |

|---|---|

| SPDX License List | A standardized list of short identifiers for common software and data licenses. Use the SPDX ID (e.g., CC-BY-4.0) in metadata to make licenses machine-readable. |

| FAIRsharing.org | A registry that links data standards, databases, and policies. Useful for discovering domain-specific repositories with clear licensing norms. |

| Data Use Ontology (DUO) | A set of standardized terms (e.g., DUO:0000007 "disease-specific research") to make data use conditions machine-actionable, complementing legal licenses. |

| Creative Commons License Chooser | An interactive tool to select the appropriate CC license for your data. |

| Institutional Legal Counsel | Essential for reviewing custom Data Transfer Agreements (DTAs) and navigating complex copyright or compatibility issues. |

| README File Template | A structured text file (e.g., README.md) to document the dataset, its provenance, and its license in human-readable form. |

Experimental Protocol: Implementing a License Compliance Check for a Training Dataset

Objective: To systematically verify that all data sources in a composite plant science dataset are legally permissible for use in AI model training and to document the compliance trail.

Materials: List of dataset sources/URLs, spreadsheet software, access to license information (repository pages, metadata).

Methodology:

- Source Inventory: Create a spreadsheet. For each data source, record: Source Name, URL/DOI, Data Type (e.g., imagery, sequences), and Original License/Terms of Use.

- License Retrieval & Interpretation: Visit the official source for each dataset. Locate the license (often in "Terms of Use," "License," or a LICENSE.txt file). Record the exact license name and version.

- AI/ML Permissibility Check: Analyze the license text for key clauses: "non-commercial," "no-derivatives," "share-alike," and any explicit mention of "machine learning," "AI," "text-and-data mining," or "computation."

- Compatibility Assessment: If creating a derivative corpus, assess the most restrictive license that will govern the combined output (see FAQ Q4).

- Attribution Planning: For each permissive source, document the required TASL (Title, Author, Source, License) information for future attribution.

- Documentation: Generate a final compliance report summarizing findings, which becomes part of the model's accountability documentation.
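
The permissibility check and the attribution step in this protocol can be partly automated. The sketch below is one possible approach, assuming the license text has already been saved as a plain string; the pattern table and the function names `flag_clauses` and `tasl` are hypothetical. Keyword matching only flags passages for a human reviewer and does not interpret the license.

```python
import re

# Clause keywords taken from the protocol above; the regexes are illustrative.
CLAUSE_PATTERNS = {
    "non-commercial": r"non[-\s]?commercial",
    "no-derivatives": r"no[-\s]?deriv",
    "share-alike": r"share[-\s]?alike",
    "ai/ml mention": r"machine learning|\bAI\b|text[-\s]and[-\s]data[-\s]mining",
}


def flag_clauses(license_text):
    """Return the sorted labels of all clause patterns found in the text."""
    return sorted(
        label
        for label, pattern in CLAUSE_PATTERNS.items()
        if re.search(pattern, license_text, re.IGNORECASE)
    )


def tasl(title, author, source, license_id):
    """Format a Title, Author, Source, License attribution line."""
    return f'"{title}" by {author}, {source}, licensed under {license_id}'
```

The flagged labels can be recorded in the source inventory spreadsheet, and the `tasl` output kept alongside each source for the final compliance report.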

Diagram: AI Data Licensing Compliance Workflow

Technical Support Center

Troubleshooting Guide: FAIR Data Curation

Issue 1: Dataset is not machine-readable.

- Symptoms: Automated scripts fail to parse data files. Metadata is embedded in unstructured PDFs or Word documents.

- Solution: Convert all primary data (e.g., sensor readings, images) to open, structured formats (CSV, JSON, HDF5). Extract metadata into a linked, standards-compliant format (JSON-LD, RDF).

- Protocol: Use tools like pandas in Python to convert Excel files to CSV. Implement a script to extract metadata headers from image files using the PIL or exifread libraries and output to a structured JSON file.
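
Once metadata has been extracted, it can be written as JSON-LD. The following is a minimal sketch that uses only the standard library and the schema.org `Dataset` type; the input keys (`title`, `license_url`, `keywords`, `doi`) and the function name `to_jsonld` are assumptions made for illustration, and reading the Excel or EXIF sources is left out.

```python
import json


def to_jsonld(meta):
    """Map a flat metadata dict (hypothetical keys) to a schema.org Dataset record."""
    record = {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "name": meta["title"],
        "description": meta.get("description", ""),
        "license": meta["license_url"],
        "keywords": sorted(meta.get("keywords", [])),
    }
    # Express the DOI as a resolvable URL when one is available.
    if "doi" in meta:
        record["identifier"] = f"https://doi.org/{meta['doi']}"
    return record


def write_jsonld(meta, path):
    """Serialize the record to a UTF-8 JSON-LD file."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(to_jsonld(meta), handle, indent=2)
```

Keeping the mapping in one function makes it easy to extend with further schema.org properties as the metadata grows.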

Issue 2: Persistent identifier (PID) assignment is confusing.

- Symptoms: Internal database IDs are used, making data unreferenceable outside the local system.

- Solution: Register key digital objects (dataset, documentation) with a globally recognized repository that issues PIDs (e.g., DataCite DOI, ePIC PID).

- Protocol:

- Package your dataset (data + core metadata) into a ZIP file.

- Upload to a trusted repository (e.g., Zenodo, e!DAL-PGP).

- Use the repository interface to mint a DOI, which becomes the dataset's permanent citation link.
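
The packaging step can be scripted so that the archive always carries a checksum manifest. This is a sketch under stated assumptions: the manifest name `MANIFEST.sha256` and the function `package_dataset` are hypothetical choices, and the upload and DOI minting are still done through the repository's own interface.

```python
import hashlib
import zipfile
from pathlib import Path


def package_dataset(data_dir, zip_path):
    """Zip every file under data_dir and add a SHA-256 manifest to the archive."""
    data_dir = Path(data_dir)
    # Collect the file list first so the archive itself is never included.
    files = sorted(p for p in data_dir.rglob("*") if p.is_file())
    manifest_lines = []
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for path in files:
            relative = path.relative_to(data_dir).as_posix()
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            manifest_lines.append(f"{digest}  {relative}")
            archive.write(path, arcname=relative)
        archive.writestr("MANIFEST.sha256", "\n".join(manifest_lines) + "\n")
    return manifest_lines
```

The manifest lets a downstream user confirm that the files retrieved through the DOI match what was deposited.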

Issue 3: Standardized metadata vocabulary is missing.

- Symptoms: Column headers or attribute names are lab-specific (e.g., "plantheightcm" vs. "height").

- Solution: Map your metadata terms to public, controlled vocabularies or ontologies.

- Protocol:

- List all key metadata variables (e.g., species, trait, unit).

- Search the EMBL-EBI Ontology Lookup Service or the Planteome portal for matching terms.

- Replace free-text values with ontology term IDs (e.g., use PO:0007184 for "hypocotyl" from the Plant Ontology).
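
The replacement step can be sketched as a lookup over the records, with unmapped values reported for manual curation. The term map below holds only the example quoted above (confirm the ID in the lookup service before use); the function name `annotate` and the `_term_id` column suffix are hypothetical.

```python
# ID as quoted in the protocol above; verify it against the current ontology release.
TERM_MAP = {"hypocotyl": "PO:0007184"}


def annotate(rows, column, term_map):
    """Add a '<column>_term_id' field to each row and list values with no mapping."""
    annotated = []
    unmapped = set()
    for row in rows:
        value = row[column].strip().lower()
        term_id = term_map.get(value)
        if term_id is None:
            unmapped.add(value)
        # Keep the original free text next to the ID so nothing is lost.
        annotated.append({**row, f"{column}_term_id": term_id or ""})
    return annotated, sorted(unmapped)
```

Calling `annotate(rows, "organ", TERM_MAP)` leaves the free-text column intact and returns the list of values that still need a term.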

Issue 4: Data access is restricted by unclear licensing.

- Symptoms: Users are unsure how they can legally reuse the data for ML training.

- Solution: Attach a clear, permissive usage license (e.g., CC-BY 4.0, CC0) to the dataset at the point of publication.

- Protocol: Include a LICENSE.txt file in the data package root. Clearly state the chosen license in the repository metadata fields during deposition.

Frequently Asked Questions (FAQs)

Q2: How do I make image-based phenotyping data Interoperable for ML?

A: Store images in a standard format (TIFF, PNG). Provide a companion CSV file that links each image filename to its experimental metadata using ontology terms. Include precise details on imaging setup (camera specs, lighting, distance) in a readme file using standardized vocabularies.

Q3: What tools can help automate the FAIRification process?

A: Use data curation tools such as Fairly or DataLad. For plant-specific metadata, use tools like CropStore or the ISA (Investigation-Study-Assay) framework configured with plant ontologies.

Q4: How can I ensure my FAIRified dataset is Reusable? A: Provide detailed provenance: the experimental protocols, the data processing scripts (e.g., on GitHub), and a clear data dictionary defining all variables. Use a community-accepted file format and a non-restrictive license.

Table 1: Comparison of Metadata Standards for Plant Phenotyping

| Standard/Ontology | Scope | Key Features | Relevant Use Case |

|---|---|---|---|

| MIAPPE | Minimum Information About a Plant Phenotyping Experiment: a metadata checklist for phenotyping studies. | Defines core metadata fields for plant studies. | Required or recommended for submission to several plant data archives (e.g., EUDAT). |

| Crop Ontology | Trait and phenotype descriptors for crops. | Provides standardized trait names and measurement methods. | Annotating measured variables (e.g., "leaf area"). |

| Plant Ontology | Plant structures and growth stages. | Describes anatomical entities and development stages. | Specifying the plant part measured (e.g., "flower bud"). |

| ISA-Tab | General-purpose experimental metadata framework. | Structures data into Investigation, Study, Assay layers. | Describing a complex multi-omics phenotyping workflow. |

Table 2: Example FAIR Metrics for a Published Phenotyping Dataset

| FAIR Principle | Metric | Target Score | Example Implementation |

|---|---|---|---|

| Findable | Presence of a DOI | 100% | DOI: 10.5281/zenodo.1234567 |

| Accessible | Data accessible via standard protocol (HTTPS) | 100% | Data downloadable via Zenodo HTTPS link. |

| Interoperable | Use of ≥ 5 ontology terms | >80% | Using terms from PO, CO, and ENVO. |

| Reusable | Presence of a clear license | 100% | Data licensed under CC-BY 4.0. |
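
The four metrics in Table 2 can be approximated with a short automated check. The sketch below assumes the dataset metadata is available as a dictionary with the hypothetical keys `doi`, `access_url`, `ontology_terms`, and `license`; it tests only for presence and form, not for the quality of the metadata.

```python
import re

# Loose syntactic check for a DOI such as 10.5281/zenodo.1234567.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def fair_checks(meta):
    """Return one pass/fail flag per FAIR principle, following Table 2."""
    return {
        "findable": bool(DOI_PATTERN.match(meta.get("doi", ""))),
        "accessible": meta.get("access_url", "").startswith("https://"),
        # Table 2 asks for at least five ontology terms; duplicates count once.
        "interoperable": len(set(meta.get("ontology_terms", []))) >= 5,
        "reusable": bool(meta.get("license")),
    }
```

A record that fails any flag can be sent back for curation before the dataset is published.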

Experimental Protocols

Protocol 1: Generating a FAIR-Compliant Metadata File (ISA-Tab Format)

- Define Investigation: Create an investigation.txt file describing the overarching project, title, and contributors.

- Define Study: Create a study.txt file detailing the specific plant experiment (species, growth conditions, design).

- Define Assay: Create an assay.txt file for the high-throughput phenotyping run. Link each raw data file (e.g., plant_001_image.png) to its sample and the measurement protocol.

- Map to Ontologies: In the study and assay files, replace free-text descriptions with ontology IDs where possible (e.g., growth condition "controlled environment" -> EO:0007363).

- Package: Store the ISA-Tab files (i_*.txt, s_*.txt, a_*.txt) in the root directory of your dataset.
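
The study and assay tables are tab-delimited text, so they can be generated with the standard library. The following is a rough sketch, not a validated ISA-Tab export: the column headers are a small subset that the author of this example believes matches the ISA-Tab specification, the sample and protocol names are invented, and the output should be checked with the ISA tooling before submission.

```python
import csv
import io

# Assumed subset of ISA-Tab column headers; verify against the specification.
STUDY_HEADER = [
    "Source Name", "Characteristics[Organism]", "Term Source REF",
    "Term Accession Number", "Protocol REF", "Sample Name",
]
ASSAY_HEADER = ["Sample Name", "Protocol REF", "Raw Data File"]


def to_tsv(header, rows):
    """Render a header and rows as tab-delimited text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, delimiter="\t", lineterminator="\n")
    writer.writerow(header)
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical example rows linking one plant to one raw image.
study_rows = [[
    "plant_001", "Solanum lycopersicum", "NCBITaxon",
    "http://purl.obolibrary.org/obo/NCBITaxon_4081", "growth", "sample_001",
]]
assay_rows = [["sample_001", "rgb imaging", "plant_001_image.png"]]

study_text = to_tsv(STUDY_HEADER, study_rows)  # content for an s_*.txt file
assay_text = to_tsv(ASSAY_HEADER, assay_rows)  # content for an a_*.txt file
```

The shared "Sample Name" column is what links each raw data file in the assay table back to its source plant in the study table.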

Protocol 2: Preparing RGB Image Data for ML Reuse

- Standardization: Resize all images to a uniform resolution (e.g., 512x512 pixels). Convert all to PNG format to avoid lossy compression.

- Anonymization: Remove any internal, non-standard filename tags. Use a consistent naming schema: {StudyID}_{PlantID}_{Timestamp}_{View}.png.

- Annotation File: Create an annotations.csv file with columns: filename, plant_id, treatment, phenotype_1, phenotype_2, etc. Ensure phenotypic data is linked to a measurement unit ontology.

- Provenance Log: Include a processing_log.md documenting the software versions (e.g., OpenCV v4.8.0) and exact commands used for steps 1-3.
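
The naming schema and the annotation file from this protocol can be generated together so that the two never drift apart. This is a minimal sketch: the compact timestamp format and the extra `phenotype_1_unit` column are assumptions made for illustration, and the image resizing itself is not shown.

```python
import csv
import io
from datetime import datetime


def image_name(study_id, plant_id, timestamp, view):
    """Build a filename following {StudyID}_{PlantID}_{Timestamp}_{View}.png."""
    return f"{study_id}_{plant_id}_{timestamp:%Y%m%dT%H%M%S}_{view}.png"


def annotations_csv(records):
    """Serialize annotation records to CSV text with a fixed column order."""
    columns = ["filename", "plant_id", "treatment", "phenotype_1", "phenotype_1_unit"]
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()
```

A record such as `{"filename": image_name("STU01", "P001", datetime(2024, 5, 1, 9, 30), "top"), "plant_id": "P001", "treatment": "drought", "phenotype_1": 12.5, "phenotype_1_unit": "UO:0000015"}` then yields one row whose unit column points at the Units Ontology term for centimeter.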

Visualizations

FAIRification Workflow for Plant Phenotyping Data

Logical Relationships in a FAIR Dataset Package

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for High-Throughput Phenotyping

| Item / Solution | Function in FAIRification Context | Example Product / Standard |

|---|---|---|

| Controlled Vocabulary Services | Provide standard terms for metadata annotation, ensuring Interoperability. | Planteome Portal, EMBL-EBI Ontology Lookup Service. |

| Data Repository (with DOI) | Provides persistent storage, a unique identifier (DOI), and public access. | Zenodo, e!DAL-PGP, CyVerse Data Commons. |

| Metadata Schema Tools | Frameworks to structure and validate experimental metadata. | ISA framework (ISA-Tab), MIAPPE checklist. |

| Data Containerization Software | Packages data, code, and environment to ensure reproducibility (Reusability). | Docker, Singularity. |

| Scripting Language & Libraries | Automate data conversion, metadata extraction, and quality checks. | Python (Pandas, NumPy), R (tidyverse). |

| Open Licenses | Define legal terms for reuse, crucial for Reusability. | Creative Commons (CC-BY, CC0), Open Data Commons. |

Overcoming Common Pitfalls in FAIR Data Implementation for Plant AI Projects

Welcome to the Technical Support Center for FAIR Data Conversion. This guide provides targeted solutions for common obstacles encountered when applying FAIR principles to legacy plant science datasets for AI research.

FAQs & Troubleshooting Guides

Q1: How do I start FAIRifying a legacy dataset with no existing metadata? A: Implement a minimal metadata extraction protocol. First, perform a file inventory audit. Use automated scripts to extract embedded metadata from file headers (e.g., from HPLC or sequencer output files). For unstructured data like lab notebooks, use a controlled vocabulary (e.g., Plant Ontology terms) to manually annotate key experimental conditions in a structured template.

Q2: My legacy data files have inconsistent naming conventions. How can I standardize them for computational access? A: Deploy a batch renaming pipeline using a rule-based script. The core methodology is:

- Audit: Generate a manifest of all files and their current names.

- Rule Definition: Create a naming convention: Project_Species_Trait_Assay_Date_ResearcherID.ext. Define allowed values for each field from a controlled list.

- Mapping & Execution: Create a lookup table mapping old names to new names based on rules, then execute the rename programmatically, preserving a log of changes.
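
The three steps above can be sketched as a small script. The controlled lists in `ALLOWED` and the function names are hypothetical, and only two of the fields are validated here to keep the example short; the rename refuses to run if any source file is missing or any target name already exists, so a partial rename is avoided.

```python
import csv
from pathlib import Path

# Hypothetical controlled lists; replace them with the project's own vocabulary.
ALLOWED = {"species": {"Slyc", "Atha"}, "assay": {"RGB", "NIR"}}


def build_name(project, species, trait, assay, date, researcher_id, ext):
    """Build Project_Species_Trait_Assay_Date_ResearcherID.ext after validation."""
    if species not in ALLOWED["species"] or assay not in ALLOWED["assay"]:
        raise ValueError(f"value outside controlled list: {species}, {assay}")
    return f"{project}_{species}_{trait}_{assay}_{date}_{researcher_id}.{ext}"


def rename_with_log(folder, mapping, log_path):
    """Rename files per mapping (old name -> new name) and log every change."""
    folder = Path(folder)
    new_names = list(mapping.values())
    if len(set(new_names)) != len(new_names):
        raise ValueError("two files map to the same new name")
    # Check everything before touching any file.
    for old, new in mapping.items():
        if not (folder / old).is_file():
            raise FileNotFoundError(old)
        if (folder / new).exists():
            raise FileExistsError(new)
    with open(log_path, "w", newline="") as handle:
        log = csv.writer(handle)
        log.writerow(["old_name", "new_name"])
        for old, new in mapping.items():
            (folder / old).rename(folder / new)
            log.writerow([old, new])
```

The log file doubles as the lookup table needed to trace a standardized filename back to its legacy name.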

Q3: How can I make legacy image data (e.g., plant phenotyping photos) Findable and Interoperable?

A: Attach critical spatial and phenotypic metadata directly to image files as machine-readable tags. Use the EXIF or XMP standards to embed key-value pairs such as Species: Solanum lycopersicum, Treatment: Drought Stress, Camera Settings: f/5.6, 1/250s. This ensures metadata travels with the file.

Q4: I have quantitative trait data in PDF tables. What is the most efficient way to extract it for Reuse? A: Use a hybrid extraction workflow:

- Tool-Based Extraction: Use a PDF table extractor (e.g., Tabula, Camelot) to pull data into a CSV.

- Validation & Manual Curation: Cross-check extracted values against the source PDF for accuracy. Document any manual corrections in a README file.

- Semantic Annotation: Add column headers that map to known variables (e.g., PH -> Plant_Height_cm) and link to a unit ontology (e.g., UO:0000015 for 'centimeter').

Q5: How do I assign persistent identifiers (PIDs) to legacy samples that only have lab-internal codes? A: Register a new collection in a public or institutional repository (e.g., BioSamples, EUDAT). Prepare a metadata spreadsheet mapping your internal codes to standardized fields (sample type, collection date, geographic location). Upon submission, the repository will issue globally unique PIDs (e.g., SAMEAXXXXXXX) which you must then link back to your data files.

Table 1: Results from a legacy plant phenomics dataset audit, highlighting FAIR compliance gaps.

| Data Category | Volume (Files) | Formats Found | % With Metadata File | Avg. File Name Inconsistencies |

|---|---|---|---|---|

| Genotype Data | 1,200 | .xlsx, .csv, .txt | 65% | 2.1 per dataset |

| Phenotype Images | 45,000 | .jpg, .tiff, .png | 15% | 4.5 per batch |

| Environmental Logs | 320 | .pdf, .docx, .csv | 40% | 1.8 per log |

| Spectroscopy Data | 850 | .asc, .spc, .csv | 90% | 0.5 per dataset |

Experimental Protocol: Metadata Mining from Legacy Files

Objective: To systematically extract and structure metadata from legacy plant experiment files for FAIRification.

Materials: Legacy data storage, text parsing tools (e.g., grep, Python), a metadata schema template (e.g., MIAPPE-compliant), a controlled vocabulary (e.g., Plant Ontology, Trait Ontology).

Methodology:

- Inventory: Catalog all files, recording path, format, size, and last modified date.